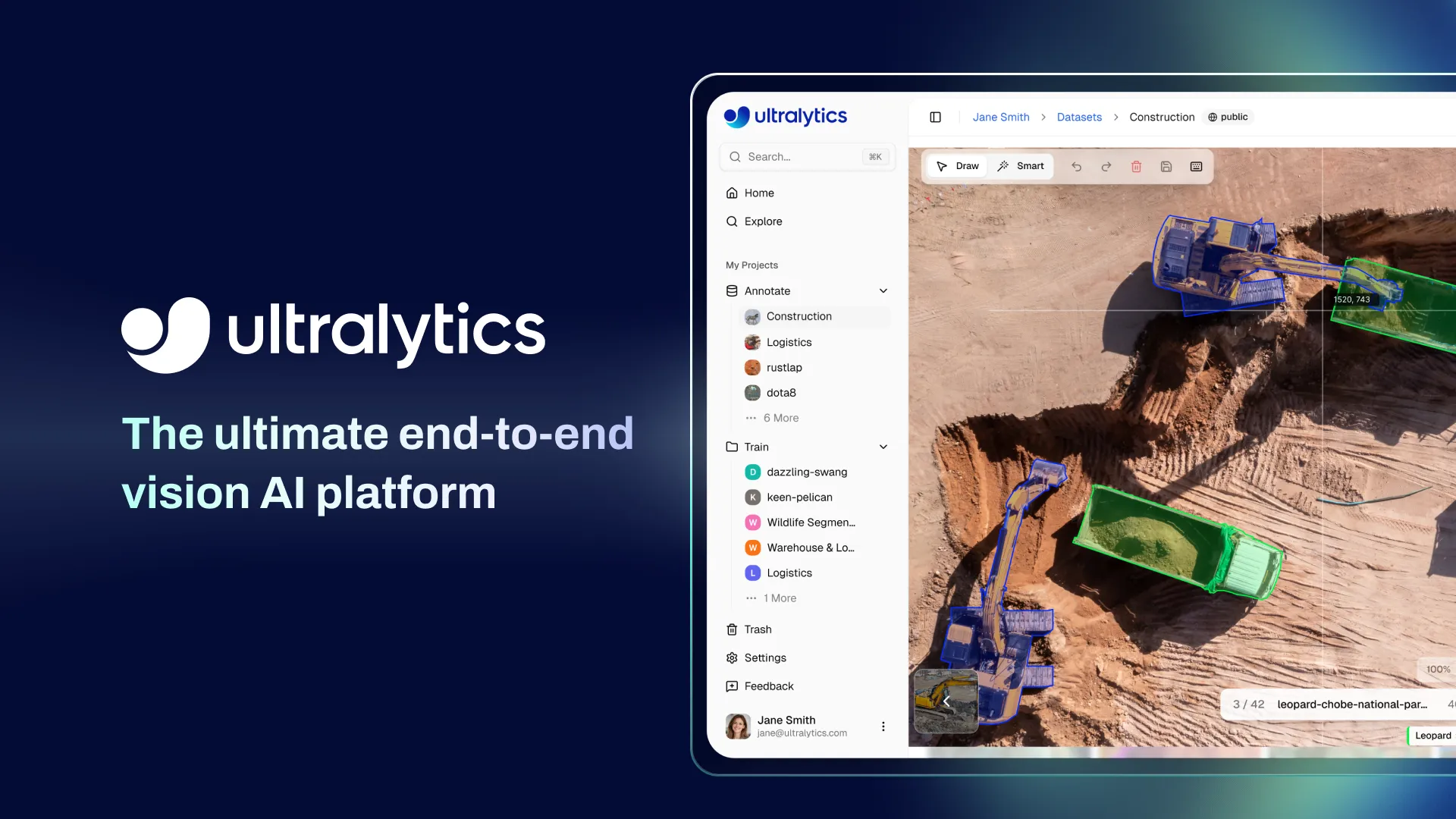

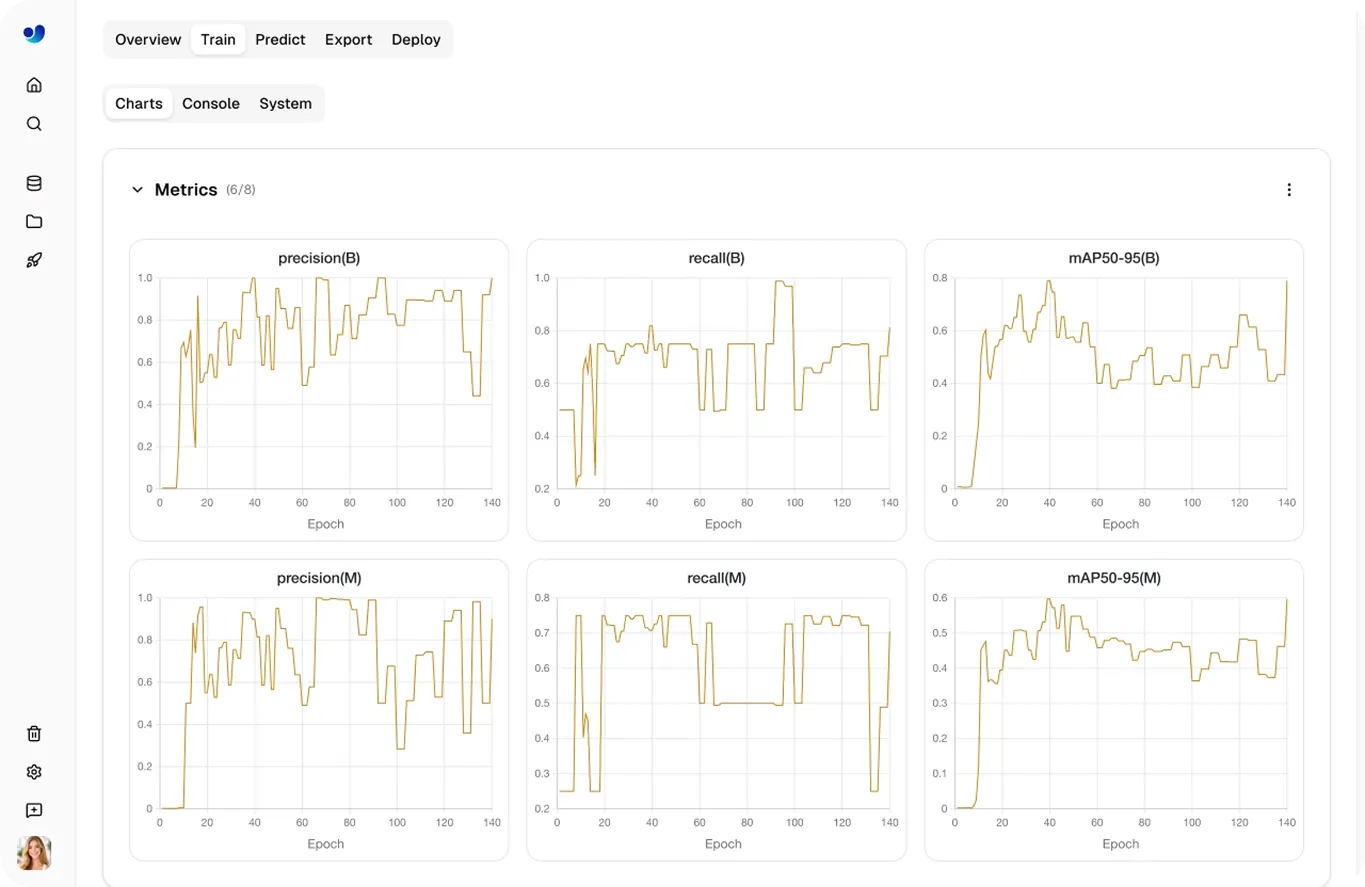

Introducing Ultralytics Platform: The smartest way to annotate, train, and deploy vision AI

Mar 19, 2026

Annotate, train, and deploy production-ready computer vision models in one end-to-end workspace built for teams shipping real-world vision AI.

We built the Ultralytics open-source ecosystem to make computer vision accessible to everyone. Millions of developers worldwide now train Ultralytics YOLO models to power everything from factory inspection lines to autonomous delivery systems.

But over the years, we kept hearing the same feedback from the community: training a strong model is no longer the biggest barrier in computer vision. Getting it into production is.

Today, we're changing that. Meet Ultralytics Platform: the end-to-end workspace purpose-built to take your vision AI from raw data to production-grade deployment.

Over the past decade, computer vision and deep learning have rapidly evolved from research topics into critical infrastructure. Vision AI now powers quality inspection on manufacturing floors, enables cashierless retail, guides surgical robots, and keeps autonomous vehicles on course. The models have never been more capable, but the journey from a working prototype to a reliable production system? That's still harder than it should be.

Most teams today stitch together separate tools for annotation, training, experiment tracking, deployment, and monitoring. Each integration adds complexity. Each handoff slows momentum. And weeks can quietly disappear into managing infrastructure instead of building the application itself.

As we worked closely with developers, startups, and enterprise teams across the computer vision community, three challenges kept surfacing:

These recurring challenges are the defining bottleneck of modern computer vision development, and they are what ultimately led us to build Ultralytics Platform. By simplifying the workflow from data preparation to deployment and connecting every stage in between, the platform helps teams move more easily from promising models to real-world vision AI systems.

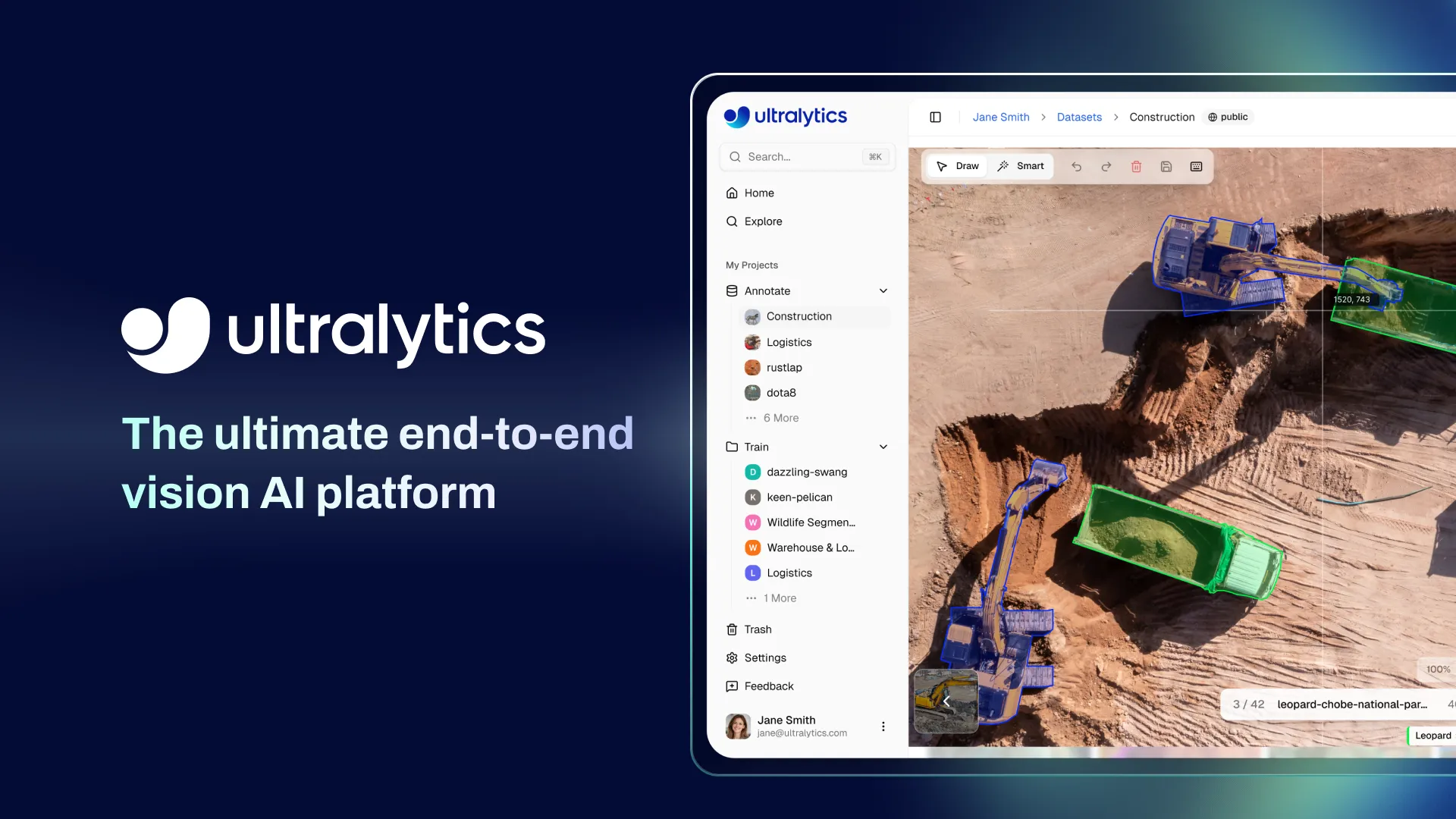

Ultralytics Platform brings together every stage of the computer vision workflow, from data management and annotation to model training, deployment, and monitoring, all in a single connected workspace that reduces complexity and accelerates the path from idea to impact.

Upload your images or videos. Label them with built-in annotation tools. Train models like Ultralytics YOLO26 directly on the platform. Deploy globally. Monitor performance in real time. Every stage flows into the next, so you can focus on building your application instead of managing infrastructure.

Turning a computer vision idea into a working system involves several stages, from preparing data to running models in production. Ultralytics Platform organizes this process into a clear, simple pipeline that helps you move from an initial concept to a deployed model with ease.

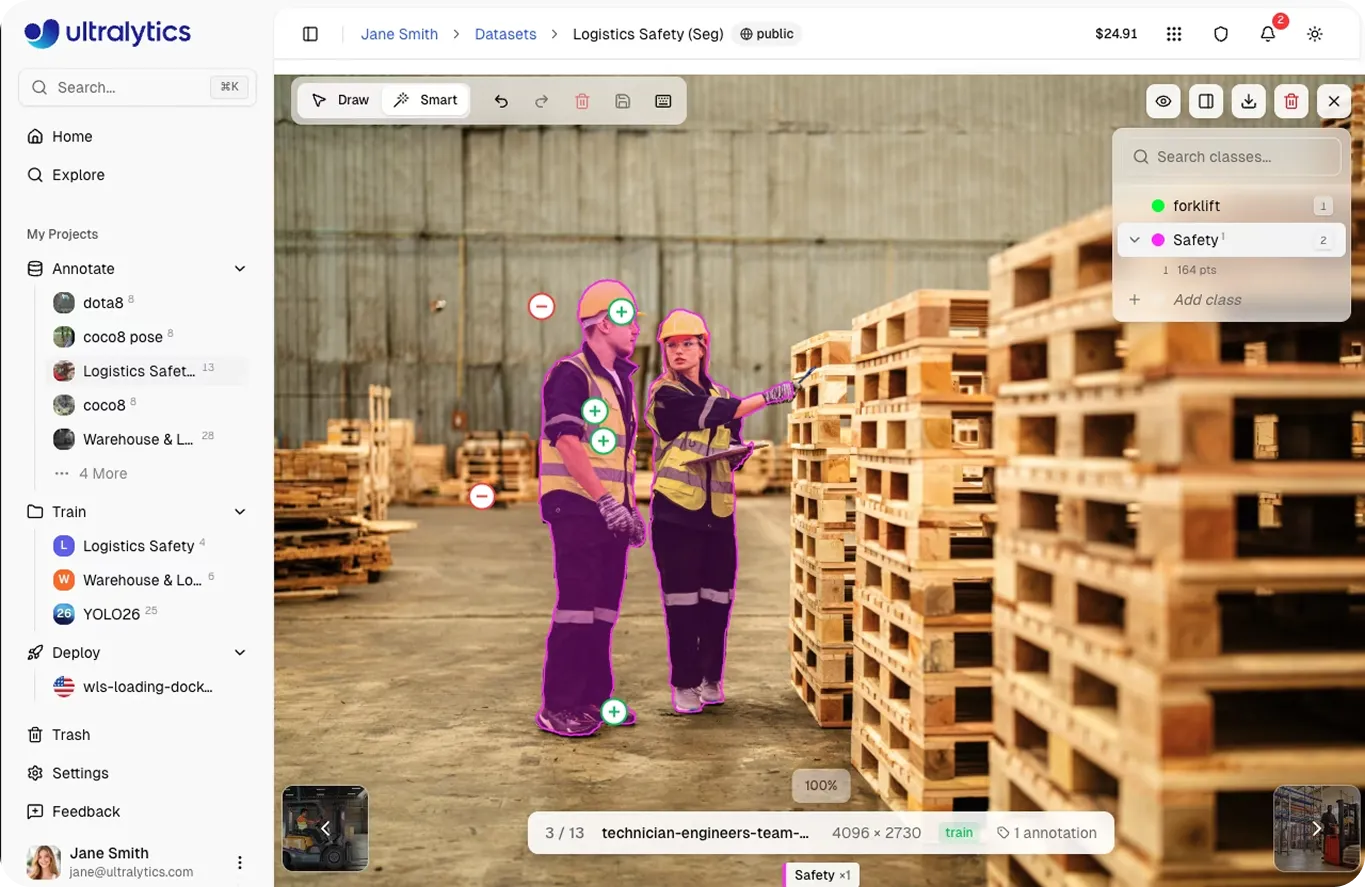

Labeling data has traditionally been one of the most time-consuming parts of any computer vision project. Ultralytics Platform makes it significantly faster and is designed to meet you wherever your data lives.

You can upload raw images, videos, or dataset archives, import datasets already labeled in YOLO or COCO format, or clone public datasets shared by the Ultralytics community. Whether you're starting from scratch or building on existing work, your data is ready to use the moment it lands on the platform.
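YOLO and COCO describe the same boxes in different ways. COCO stores each box as `[x_min, y_min, width, height]` in pixels, while a YOLO label file holds one line per object in the form `class x_center y_center width height`, with coordinates normalized by the image size. The minimal sketch below shows that conversion for a single box; the function names are hypothetical, and this is not the platform's own importer.

```python
def coco_bbox_to_yolo(bbox, img_w, img_h):
    """Convert a COCO-style [x_min, y_min, width, height] pixel box to
    YOLO-style (x_center, y_center, width, height) normalized to [0, 1]."""
    x_min, y_min, w, h = bbox
    x_c = (x_min + w / 2) / img_w
    y_c = (y_min + h / 2) / img_h
    return x_c, y_c, w / img_w, h / img_h


def yolo_label_line(class_id, bbox, img_w, img_h):
    """Format one line of a YOLO .txt label file from a COCO-style box."""
    x_c, y_c, w, h = coco_bbox_to_yolo(bbox, img_w, img_h)
    return f"{class_id} {x_c:.6f} {y_c:.6f} {w:.6f} {h:.6f}"


# A 100x50 px box with its top-left corner at (200, 100) in a 640x480 image.
print(yolo_label_line(0, [200, 100, 100, 50], 640, 480))
# prints: 0 0.390625 0.260417 0.156250 0.104167
```

COCO category IDs are not necessarily contiguous or zero-based, so a full conversion also has to remap them to the zero-based class indices that YOLO labels expect.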

If your images or videos aren't labeled yet, the built-in annotation editor gets them there quickly. It supports every major computer vision task, from object detection and instance segmentation to pose estimation, oriented bounding box (OBB) detection, and image classification, with tools designed for both speed and precision.
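Oriented bounding boxes add a rotation to the usual box. In the Ultralytics OBB dataset format, each label line lists a class index followed by the four corner points, normalized to the image size. As a rough sketch, assuming a box described by its center, size, and rotation angle (a hypothetical input representation chosen for illustration), the corners can be computed with a standard 2D rotation and written out in that format:

```python
import math


def obb_corners(cx, cy, w, h, angle_rad):
    """Return the four corners of a w x h box centered at (cx, cy), rotated by
    angle_rad with the standard 2D rotation matrix. In image coordinates
    (y pointing down), a positive angle appears as a clockwise rotation."""
    cos_a, sin_a = math.cos(angle_rad), math.sin(angle_rad)
    offsets = ((-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2))
    return [
        (cx + dx * cos_a - dy * sin_a, cy + dx * sin_a + dy * cos_a)
        for dx, dy in offsets
    ]


def yolo_obb_line(class_id, corners, img_w, img_h):
    """Format 'class x1 y1 x2 y2 x3 y3 x4 y4' with coordinates normalized to [0, 1]."""
    coords = []
    for x, y in corners:
        coords.extend((x / img_w, y / img_h))
    return " ".join([str(class_id)] + [f"{c:.6f}" for c in coords])


# A 20x10 box centered at (50, 50), unrotated, in a 100x100 image.
corners = obb_corners(50, 50, 20, 10, 0.0)
print(yolo_obb_line(0, corners, 100, 100))
```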

The standout capability here is SAM 3-powered smart annotation. Using the Segment Anything Model 3 (SAM 3), you can generate precise masks, bounding boxes, or oriented boxes by clicking on an object and refining with a few points. What used to take hours of manual tracing now takes minutes, giving teams the ability to build high-quality datasets at a pace that matches their development speed.
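A segmentation mask already contains the information needed for a box: the tightest axis-aligned bounding box is simply the extent of the mask's foreground pixels. The sketch below illustrates that step on a small mask stored as nested lists. It assumes nothing about how SAM 3 or the platform represents masks internally, and the function name is hypothetical.

```python
def mask_to_bbox(mask):
    """Return the tight axis-aligned box (x_min, y_min, x_max, y_max) around the
    truthy pixels of a 2D mask given as a list of rows, or None if the mask is
    empty. Coordinates are inclusive pixel indices."""
    xs = [x for row in mask for x, value in enumerate(row) if value]
    ys = [y for y, row in enumerate(mask) if any(row)]
    if not xs:
        return None
    return min(xs), min(ys), max(xs), max(ys)


mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
print(mask_to_bbox(mask))  # (1, 1, 3, 2)
```

For an oriented box, one common approach is to fit a minimum-area rotated rectangle to the mask's foreground points instead, for example with OpenCV's `cv2.minAreaRect`.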

Pose skeleton templates, keyboard shortcuts, inline class management, and undo/redo support round out an annotation experience built to keep you in flow.

Once your data is labeled, training is just a click away. Ultralytics YOLO26, YOLO11, and the full family of Ultralytics YOLO models are natively supported and can be trained directly on the platform using cloud graphics processing units (GPUs).

Choose from a wide range of cloud GPU options, including the NVIDIA RTX 4090, RTX PRO 6000, A100, H100, and more, or train on your own local hardware while streaming real-time metrics back to the platform. Every experiment is automatically organized into projects that group related models together, making it straightforward to track how different datasets, parameters, and configurations influence results and to identify the strongest models.

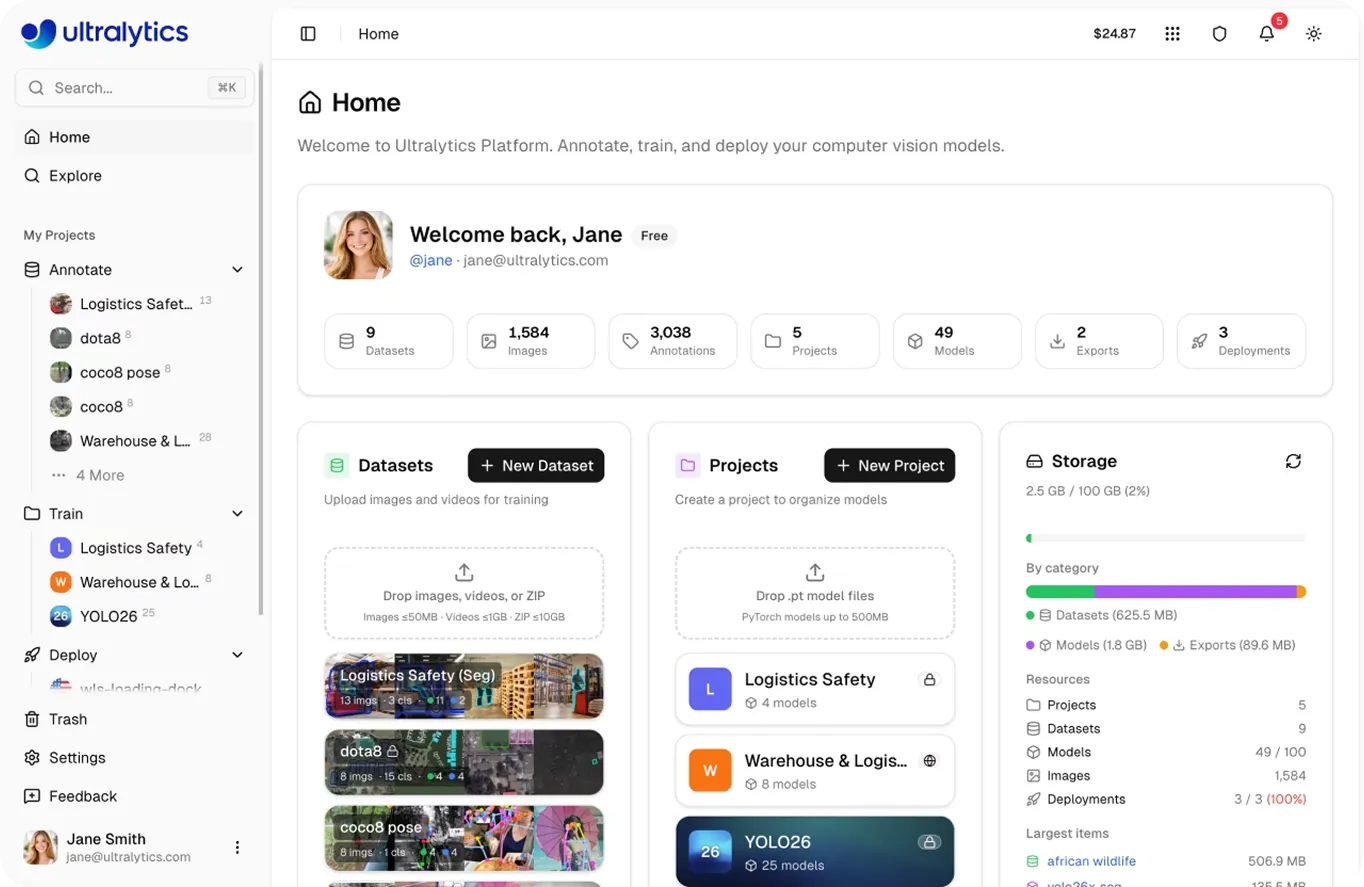

Monitor loss curves, precision, recall, and mean average precision (mAP) as they evolve epoch by epoch. Dive into confusion matrices and precision-recall curves to understand exactly where your model performs well and where it can improve. Compare multiple runs side by side to find the configuration that delivers the best results.
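To make these metrics concrete, here is a simplified, single-class, single-image sketch of how average precision (AP) is commonly computed: predictions are sorted by confidence, greedily matched to ground-truth boxes by intersection over union (IoU), and the resulting precision-recall curve is integrated with all-point interpolation. mAP50 averages AP over classes at an IoU threshold of 0.5, and mAP50-95 additionally averages over IoU thresholds from 0.50 to 0.95 in steps of 0.05. This illustrates the general technique and is not the platform's exact evaluation code.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds, gts, iou_thr=0.5):
    """AP for one class in one image.

    preds: list of (confidence, box); gts: list of ground-truth boxes.
    Each prediction, in descending confidence order, is matched to the
    unmatched ground truth with the highest IoU; it counts as a true
    positive only if that IoU reaches iou_thr.
    """
    if not gts:
        return 0.0
    matched, tp_flags = set(), []
    for _, box in sorted(preds, key=lambda p: p[0], reverse=True):
        best_iou, best_j = 0.0, None
        for j, gt in enumerate(gts):
            overlap = iou(box, gt)
            if j not in matched and overlap > best_iou:
                best_iou, best_j = overlap, j
        is_tp = best_j is not None and best_iou >= iou_thr
        if is_tp:
            matched.add(best_j)
        tp_flags.append(is_tp)

    # Cumulative precision and recall after each prediction.
    precisions, recalls, tp = [], [], 0
    for i, is_tp in enumerate(tp_flags, start=1):
        tp += is_tp
        precisions.append(tp / i)
        recalls.append(tp / len(gts))

    # All-point interpolation: replace each precision with the maximum
    # precision at any higher recall, then sum precision over recall steps.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def ap50_95(preds, gts):
    """Mean AP over IoU thresholds 0.50, 0.55, ..., 0.95 for one class."""
    thresholds = [0.5 + 0.05 * k for k in range(10)]
    return sum(average_precision(preds, gts, t) for t in thresholds) / len(thresholds)


gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
preds = [(0.9, (0, 0, 10, 10)), (0.8, (50, 50, 60, 60)), (0.7, (20, 20, 30, 30))]
print(average_precision(preds, gts), ap50_95(preds, gts))
```

In this toy example the false positive at confidence 0.8 sits between the two true positives, which pulls AP below 1.0 even though every object is eventually found.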

Ultralytics Platform also manages key stages of the training lifecycle automatically. Checkpoints are saved throughout training, preserving both the best-performing model and the final trained weights. Pretrained models can be fine-tuned directly within the platform, and trained models can be uploaded or downloaded for use in other environments, giving teams full flexibility over how and where they work.
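Keeping both a best and a final checkpoint comes down to a simple rule: overwrite the "last" checkpoint every epoch, and overwrite the "best" checkpoint only when the validation metric improves. The class below is a hypothetical, minimal sketch of that rule, using JSON files as stand-ins for real weight files (the open-source Ultralytics trainer writes `last.pt` and `best.pt` for the same purpose). It is not how the platform stores checkpoints.

```python
import json
import os
import tempfile


class CheckpointTracker:
    """Hypothetical helper: always keep the latest checkpoint and separately
    keep the checkpoint with the best validation metric (higher is better)."""

    def __init__(self, directory):
        self.directory = directory
        self.best_metric = float("-inf")
        self.best_epoch = None
        os.makedirs(directory, exist_ok=True)

    def _save(self, filename, payload):
        with open(os.path.join(self.directory, filename), "w", encoding="utf-8") as f:
            json.dump(payload, f)

    def update(self, epoch, weights, metric):
        payload = {"epoch": epoch, "metric": metric, "weights": weights}
        # Overwritten every epoch, so after training it holds the final weights.
        self._save("last.json", payload)
        # Overwritten only when the validation metric improves.
        if metric > self.best_metric:
            self.best_metric, self.best_epoch = metric, epoch
            self._save("best.json", payload)

    def load(self, filename):
        with open(os.path.join(self.directory, filename), encoding="utf-8") as f:
            return json.load(f)


with tempfile.TemporaryDirectory() as tmp:
    tracker = CheckpointTracker(tmp)
    # Toy "weights" and a validation metric (for example mAP) per epoch.
    for epoch, val_metric in enumerate([0.31, 0.47, 0.42]):
        tracker.update(epoch, {"w": [val_metric]}, val_metric)
    best, last = tracker.load("best.json"), tracker.load("last.json")
    print(best["epoch"], last["epoch"])  # prints: 1 2
```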

No infrastructure to provision. No separate experiment tracking service to set up. Just a clear, efficient path from labeled data to a trained model ready for the real world.

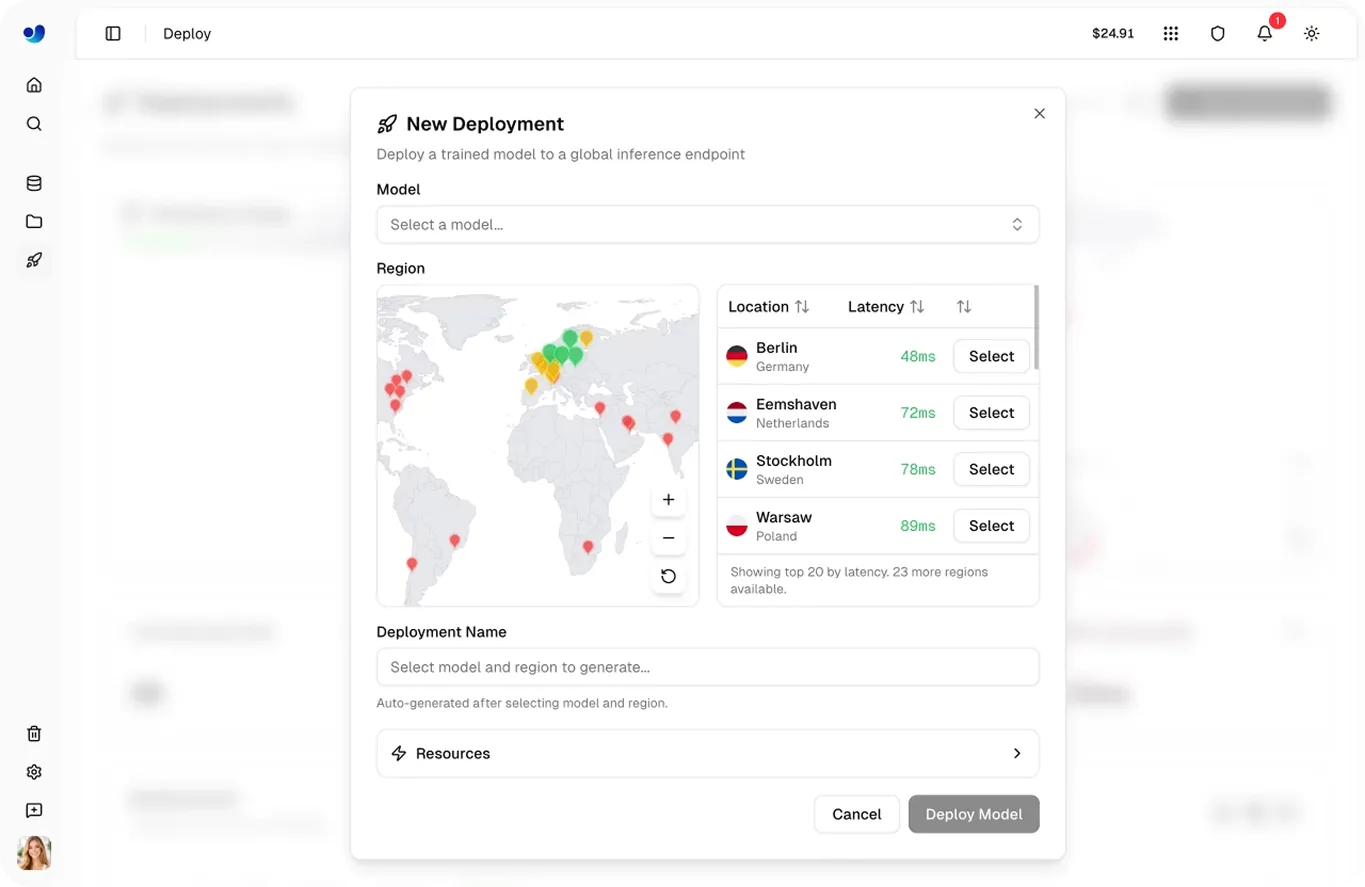

A well-trained model needs an equally capable path to production. Ultralytics Platform delivers that.

Start by validating your model's inference results directly in the browser. When you're satisfied with its predictions, deploy dedicated endpoints to any of 43 global regions. Each endpoint auto-scales to match demand and exposes its own API, ready for integration into your applications.

Whether you need to deploy in the cloud or run models on edge devices, the Ultralytics Platform provides flexible options designed for both scenarios. All Ultralytics YOLO models are natively optimized to run efficiently across environments, delivering reliable performance even on edge hardware with limited compute resources. For teams that need to run models outside the platform, Ultralytics supports export to 17 validated formats, including ONNX, TensorRT, CoreML, TFLite, and OpenVINO, so your models run natively across cloud services, mobile devices, edge systems, and more.

Once your models are live, built-in monitoring in the deployments dashboard gives you full visibility into production performance: request volume, latency metrics, error rates, endpoint health, and detailed logs. Tracking these signals over time helps keep your computer vision systems running reliably in production and reveals opportunities to optimize performance.
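Latency and error-rate figures like these are typically derived from per-request records. The sketch below assumes a hypothetical list of request records with `latency_ms` and `status` fields (not the platform's actual log schema), computes nearest-rank p50 and p95 latency, and, as a further assumption, counts any HTTP status of 400 or above as an error.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct between 0 and 100)."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def summarize(requests):
    """Summarize request records with hypothetical 'latency_ms' and 'status' keys."""
    if not requests:
        return {"requests": 0, "p50_ms": None, "p95_ms": None, "error_rate": 0.0}
    latencies = [r["latency_ms"] for r in requests]
    # Assumption: any 4xx or 5xx response counts as an error.
    errors = sum(1 for r in requests if r["status"] >= 400)
    return {
        "requests": len(requests),
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "error_rate": errors / len(requests),
    }


latencies = [12, 15, 11, 40, 13, 14, 120, 16, 12, 13]
statuses = [200, 200, 200, 200, 429, 200, 500, 200, 200, 200]
records = [{"latency_ms": ms, "status": code} for ms, code in zip(latencies, statuses)]
print(summarize(records))
```

Tail percentiles such as p95 are usually more informative than averages for this kind of monitoring, since a few slow requests can hide behind a healthy mean.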

Get started today, or explore the Ultralytics docs for a deeper look at what the platform can do.

As you learn more about Ultralytics Platform, you’ll quickly see that its goal goes beyond providing tools for building computer vision systems. At its core, the platform is designed to help make vision AI development more accessible and user-friendly to a wider community.

Historically, building and deploying AI systems required specialized infrastructure, complex tooling, and significant upfront investment. Even as powerful models became easier to train, the surrounding workflow (managing datasets, running experiments, deploying models, and maintaining infrastructure) remained hard for individuals and smaller teams to take on.

Ultralytics Platform lowers these barriers by bringing the entire vision AI workflow into a single environment and making it easy to get started. New users can begin experimenting with the platform through the free plan, which includes signup credits for cloud training and access to core features such as dataset management, annotation tools, model training, and model export.

As projects grow, individual users and enterprise teams can scale up with additional credits and platform plans that unlock more compute resources, storage, collaboration features, and deployment capacity. This flexible approach means developers, researchers, startups, and enterprises can start small, experiment freely, and expand their usage as their computer vision systems move toward production.

By combining an end-to-end computer vision workflow with an accessible pricing model, the Ultralytics Platform helps open the door for more people to build, test, and deploy real-world vision AI applications.

Ultralytics Platform brings the entire vision AI lifecycle into one powerful workspace, making it faster to go from raw data to production-ready vision AI systems. With built-in tools for annotation, training, deployment, and monitoring, teams can build and deploy models such as Ultralytics YOLO26, Ultralytics YOLO11, Ultralytics YOLOv8, and Ultralytics YOLOv5 without managing complex infrastructure.

Whether you're experimenting with your first model or deploying vision AI at scale, the platform is designed to support every stage of the journey.

Join our community and discover innovations such as AI in manufacturing and vision AI in retail. Visit our GitHub repository and get started with computer vision today by checking out our licensing options.
