Dedicated inference endpoints vs shared inference for deployment

Explore when to choose dedicated inference endpoints on the Ultralytics Platform for scalable, low-latency vision AI deployment over shared inference.

Explore when to choose dedicated inference endpoints on the Ultralytics Platform for scalable, low-latency vision AI deployment over shared inference.

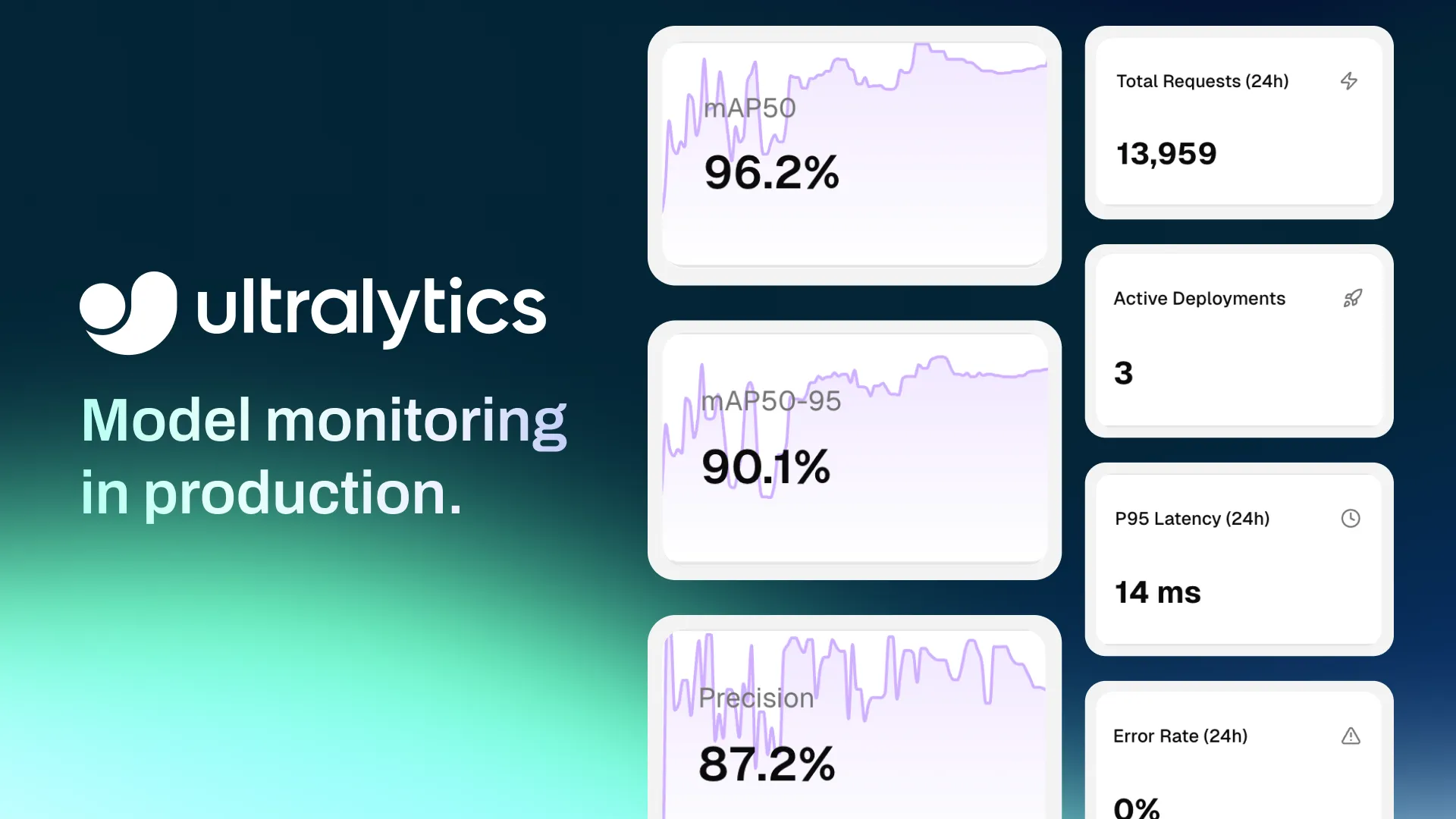

Recently, we introduced the Ultralytics Platform, an end-to-end solution that brings the entire computer vision workflow into one place, from dataset preparation and model training to inference, deployment, and monitoring.

Built based on feedback from the computer vision community, the platform is designed to simplify each stage of development by providing integrated features that support the full lifecycle of vision AI applications.

For instance, once a model is trained, the next step is to deploy it so it can be used to run inference and make predictions in real-world applications. The platform makes this process straightforward by offering multiple deployment options.

You can export models to run them in your own environment, use shared inference for quick testing, or deploy dedicated endpoints for scalable, production-ready applications. Each of these deployment options allows you to run AI inference, but they are designed for different stages and use cases.

Model export gives you full control to run models in your own infrastructure, shared inference makes it simple to test and experiment without setup, and dedicated endpoints are built for reliable, large-scale production workloads.

At first glance, shared inference and dedicated endpoints may seem quite similar. Both enable you to send API requests to your model and receive structured predictions, making it easy to integrate vision AI into applications.

However, as your workloads grow and your computer vision applications begin handling real-time inference requests, the differences between these options become more important. In this article, we’ll take a closer look at shared inference and dedicated endpoints, how they compare, when to use each, and why dedicated endpoints become the better choice as your applications scale.

Shared inference is a simple way to run AI inference on your models without setting up any infrastructure or worrying about GPU types, framework integration, or runtime configuration. Once your model is trained or fine-tuned, you can use it to make predictions directly through the platform.

In this setup, your model runs on shared, multi-tenant compute resources across a few core regions, such as the US, Europe, and Asia-Pacific. Requests are automatically routed to available services, so you don’t need to configure GPU instances or runtime environments. Everything is handled for you, making it easy to get started.

When you use shared inference, you send requests to your model through a REST API using tools like Python or CLI, and receive structured JSON outputs, such as detected objects, confidence scores, and other prediction details. This makes it seamless to test models and integrate them into applications.

Because the system is shared, it is designed for development, testing, and light usage. It works well for validating predictions and building early integrations. At the same time, performance may vary depending on system load, and usage is rate-limited to 20 requests per minute per API key, making it less suitable for high-throughput production workloads.

Overall, shared inference is best suited for early-stage development, where the focus is on understanding and improving your model before moving to larger-scale applications.

Dedicated endpoints are single-tenant inference services where your vision AI models run on isolated compute resources. Instead of sharing infrastructure, each endpoint has its own runtime with configurable resources such as CPU and memory, giving you more control over performance.

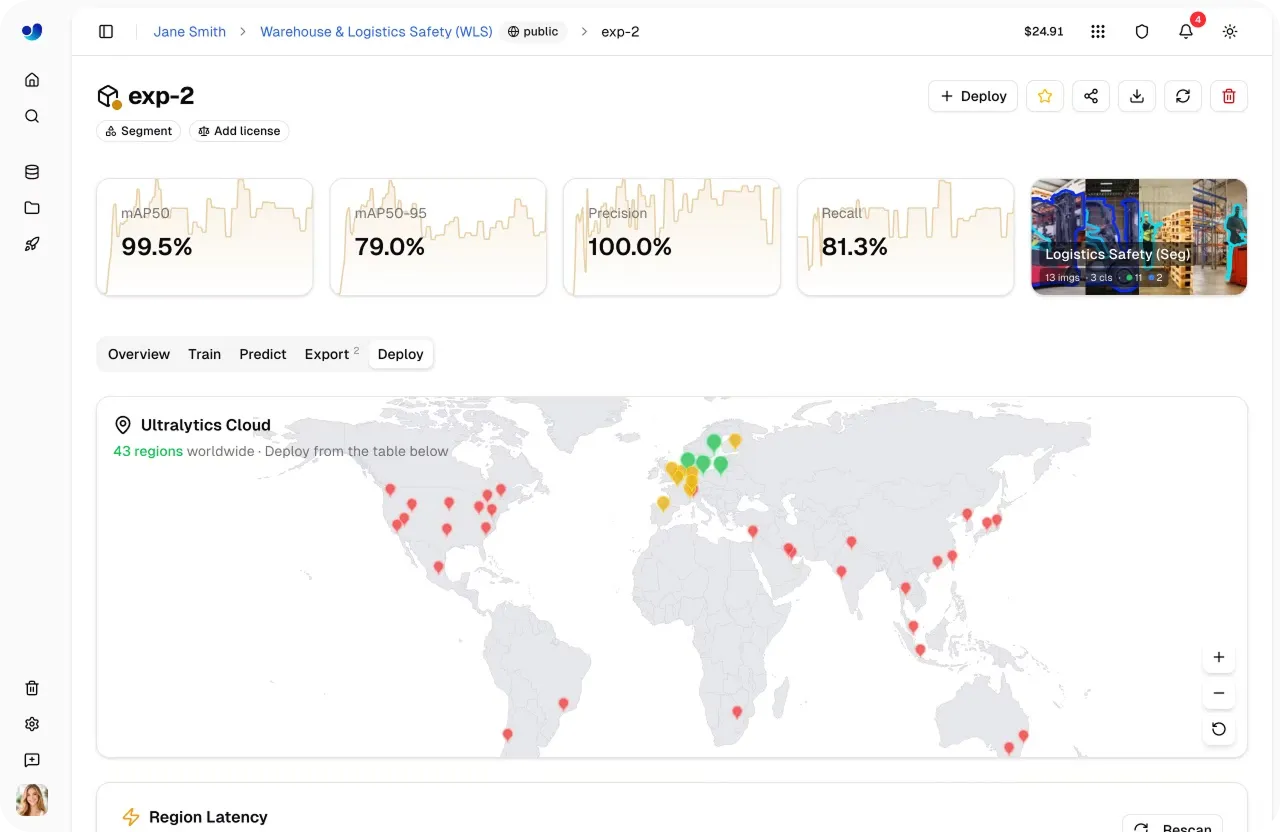

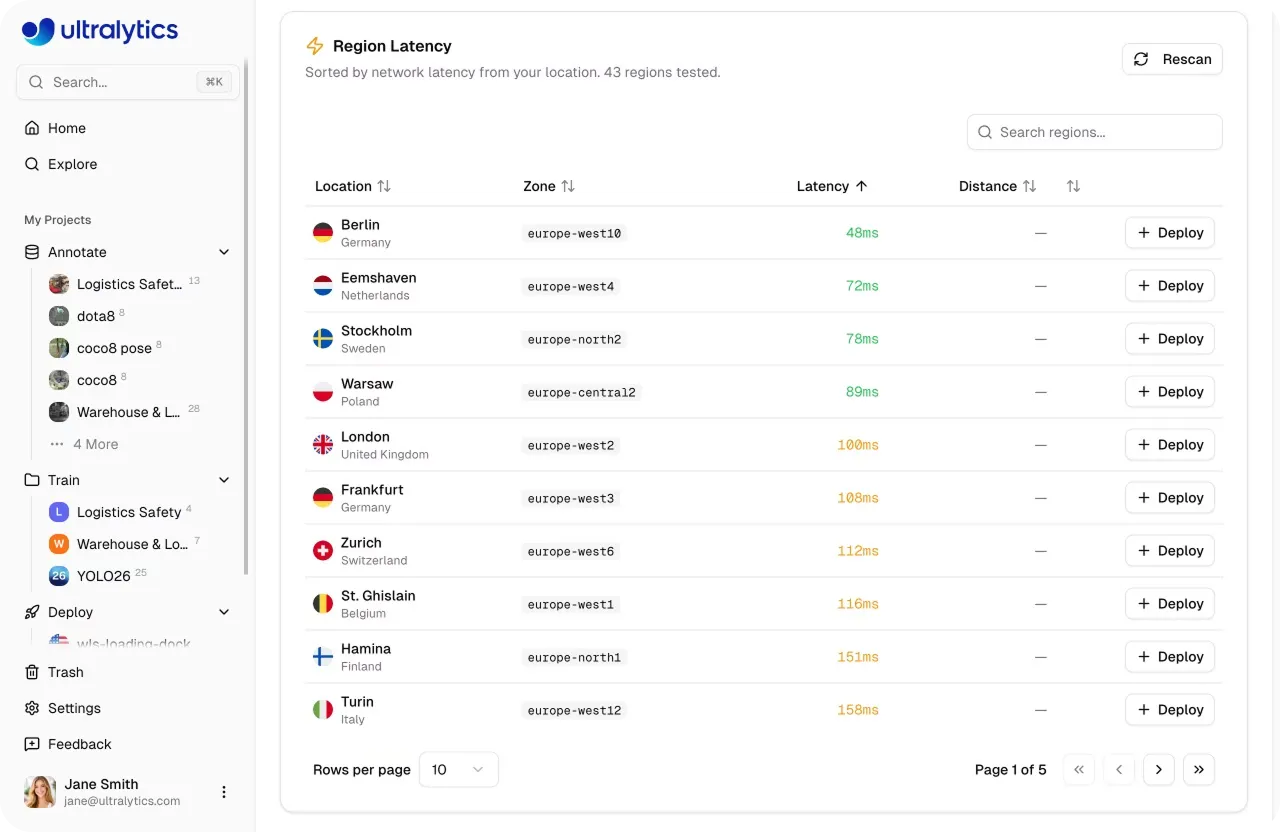

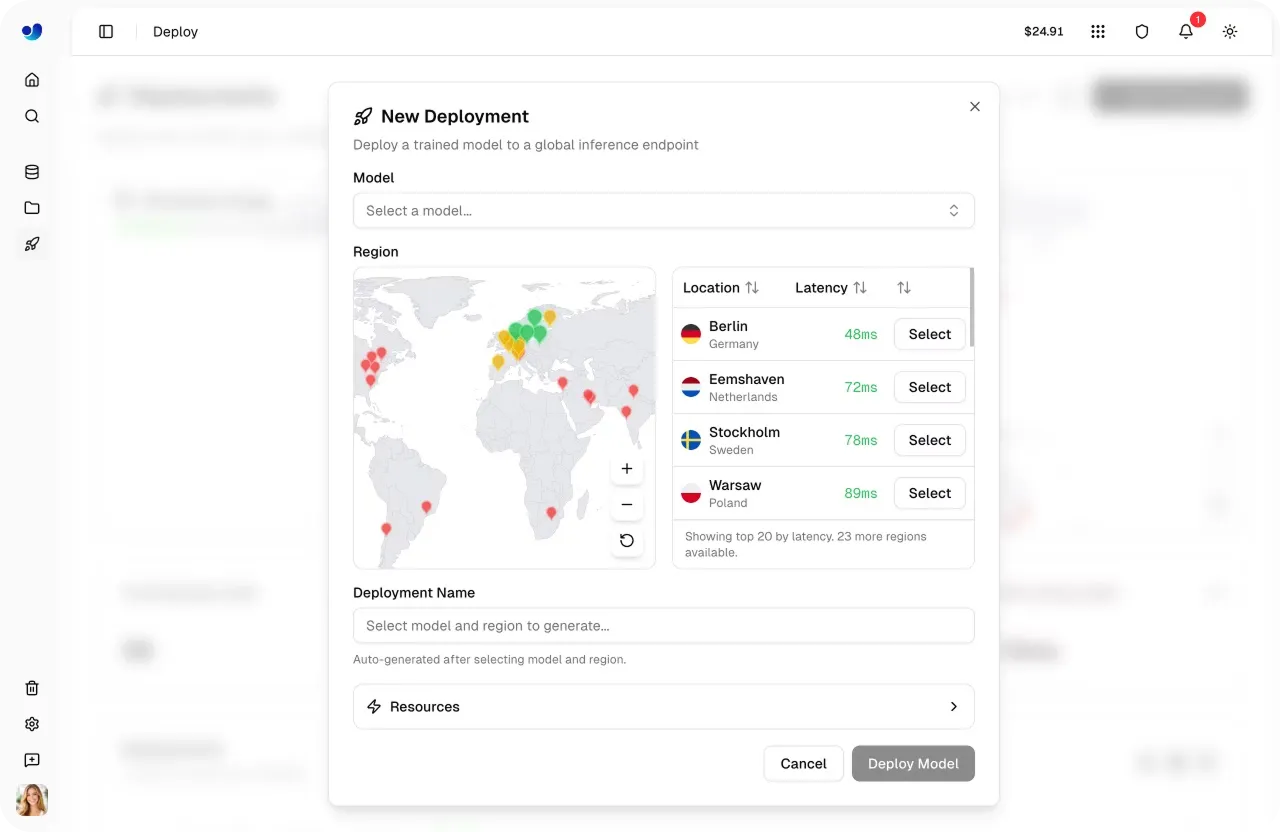

When you deploy a model as a dedicated endpoint, it is assigned a unique API URL and uses your API key for authentication, making it easy to integrate into applications. These endpoints can be deployed across 43 global regions, allowing you to run inference closer to your users and reduce latency.

One of the key advantages is autoscaling. Endpoints automatically adjust based on incoming requests, scaling up to handle higher traffic and scaling down when demand drops. With scale-to-zero enabled by default, endpoints can shut down when idle and restart when needed, helping optimize resource usage.

In other words, dedicated endpoints are designed for production workloads. They provide consistent low latency, higher throughput, and greater reliability compared to shared inference.

Also, dedicated endpoints don’t have rate limits. Requests go directly to your endpoint, so how much traffic you can handle depends on your setup and scaling rather than fixed limits.

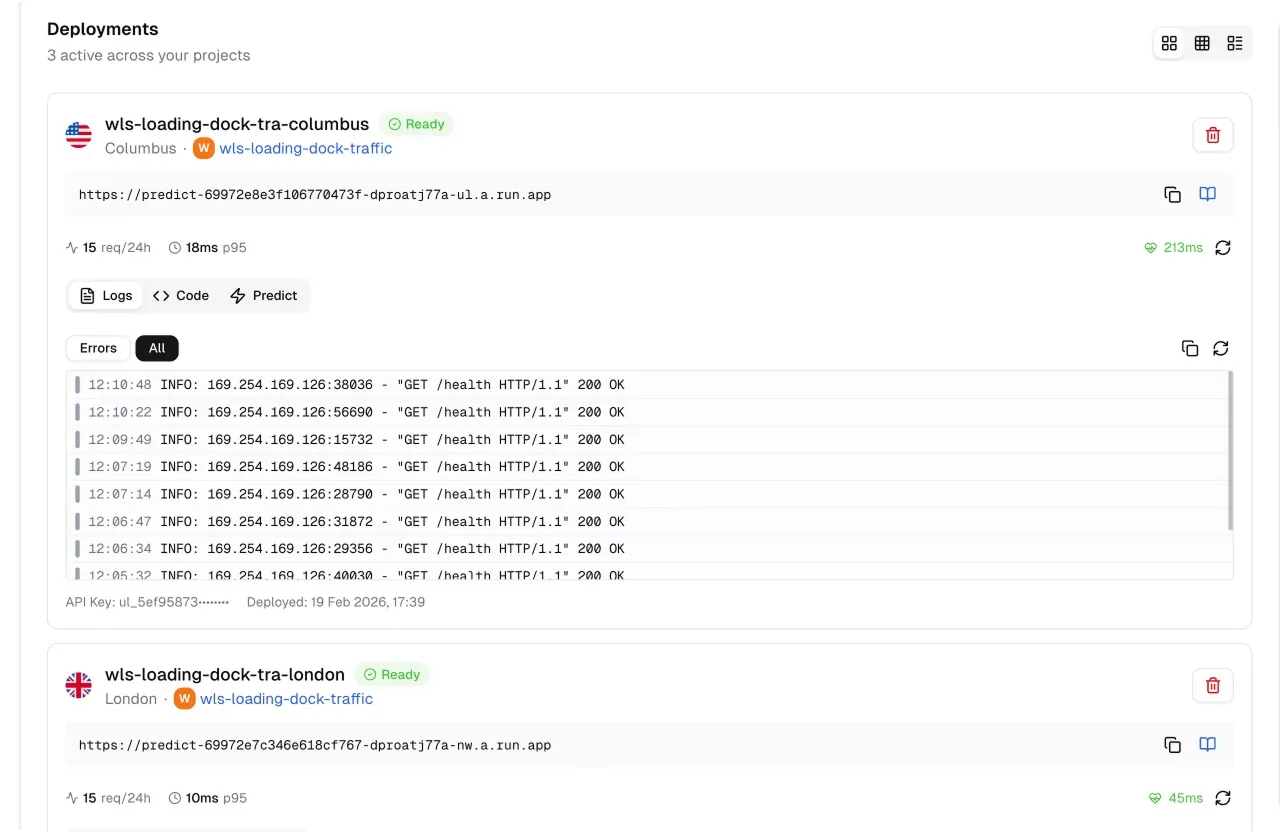

In addition to this, built-in monitoring, logs, health checks, and predictable runtime and startup behavior make it straightforward to track performance and maintain stable deployments across all plans. On the Free plan, cold starts typically take between 5 and 45 seconds, while Pro plan endpoints stay warm, resulting in faster and more predictable inference performance.

Simply put, dedicated endpoints are ideal for real-time vision AI applications that require reliable, scalable, and high-performance inference.

Here’s a closer look at how shared inference and dedicated endpoints compare:

As AI and machine learning applications move from testing to real-world use, performance, scalability, and reliability become essential. That’s why dedicated endpoints offer clear advantages over shared inference.

With dedicated endpoints, your pre-trained or custom model runs on its own compute resources, so performance isn’t affected by other users. This helps keep latency low and consistent, which is important for real-time applications like video analytics and monitoring systems.

For example, think of a retail analytics system processing live camera feeds across multiple stores. By deploying endpoints across 43 global regions, inference can run closer to each store, reducing latency and improving response times.

With shared inference, where resources are shared and regions are limited, performance can vary during busy periods.

Dedicated endpoints can also handle higher traffic and automatically scale based on demand. With built-in monitoring, logs, and health checks, they provide more predictable performance, making them a good fit for large-scale and continuous AI workloads.

As you explore the differences between shared inference and dedicated endpoints, you might be wondering where shared inference fits into the overall computer vision workflow.

Let’s take a look at the retail analytics example again. Before deploying a vision solution across multiple stores, teams typically need to test how it performs on real data and refine it based on those results.

Shared inference makes this process simple by letting you send sample images or video frames from store cameras and quickly review predictions without setting up infrastructure. This is especially useful for testing model behavior, debugging incorrect predictions, and validating results under different conditions, such as changes in lighting or store layouts.

By iterating in this way, teams can improve model accuracy and reliability before moving to production. Once the model performs well in these testing scenarios, it can then be deployed to dedicated endpoints for real-time use across multiple locations.

Shared inference can also work well for applications with low or infrequent usage. For instance, a small retail store might use it to occasionally analyze foot traffic or review customer activity at specific times, without needing a fully scaled deployment. In these cases, it provides a simple and cost-effective way to run inference on demand.

As AI applications move beyond testing, the choice of deployment starts to directly impact performance, scalability, and user experience. Dedicated endpoints can be widely used across industries because they provide stable performance, low latency, and the ability to handle large-scale workloads.

Here are some common use cases that show how dedicated endpoints can be used in real-world applications:

One of the key benefits of the Ultralytics Platform is how simple it is to move from shared inference to dedicated endpoints as your application grows. Instead of switching tools or rebuilding your setup, you can transition to a production-ready deployment within the same environment.

After testing your model with shared inference, moving to a dedicated endpoint is a straightforward next step. You can deploy the same model to an endpoint, choose your preferred region and compute resources, and update the endpoint URL in your application. The overall integration remains similar, so there’s little to no change in how you send requests or handle responses.

This means you can scale from testing to production with a few clicks. As your workload increases or your application requires more consistent performance, you can move to dedicated endpoints without disrupting your existing workflow.

To learn more about deploying models using dedicated endpoints on the Ultralytics Platform, check out the official Ultralytics Platform docs.

Shared inference is a great starting point for testing and experimentation, but production workloads demand more consistency and scale. As applications grow, dedicated endpoints provide the performance and reliability needed to support real-world use. This makes them the best choice for most production deployments.

Join our community and explore our GitHub repository to learn more about computer vision models. Read about applications like AI in agriculture and computer vision in robotics on our solutions pages. Check out our licensing options and get started with vision AI.

Begin your journey with the future of machine learning