Learn how Ultralytics YOLO26 can be used to detect unsafe pallet stacking in warehouses, helping improve safety, reduce risks, and maintain efficient operations.

Safety is critical when it comes to warehouse operations. Unstable pallets, falling loads, and blocked aisles can lead to product damage, workflow disruptions, and serious worker injuries.

In particular, pallet stacking plays a key role in maintaining a safe and efficient warehouse. It directly affects how stable loads are, how easily materials move through the space, and how safely workers can operate.

Even small inconsistencies can create larger risks. A slight tilt, uneven weight distribution, or a loosely secured load can make pallets unstable. Missing shrink wrap or poor alignment can further weaken stability, increasing the likelihood of product damage or workplace accidents.

To prevent such issues, organizations such as the Occupational Safety and Health Administration (OSHA) provide guidelines for safe material storage and handling. These safety guidelines emphasize maintaining load stability, staying within safe load limits, and following proper handling practices to prevent hazards like falling or collapsing stacks.

However, applying these standards consistently across fast-moving warehouse environments isn’t always easy. Pallets are typically moved, restacked, and handled throughout the day. This makes it difficult to monitor every load condition in real time or catch early signs of instability.

Computer vision offers a way to close this gap. As a branch of AI, it enables machines to interpret and analyze visual data from images and video feeds. With vision AI models like Ultralytics YOLO26, warehouses can monitor pallet conditions in real time and detect unstable configurations early, allowing teams to respond before issues escalate.

In this article, we’ll explore the risks associated with unsafe pallet stacking and how vision-based systems can help detect and prevent them. Let’s get started!

Pallets are designed to carry a certain amount of weight and be stacked in a stable way. When they’re overloaded or not balanced properly, that stability starts to break down.

Even small misalignments during stacking can build up over time and increase the chances of a load failing during handling. These issues are most common in fast-paced environments where pallets are constantly being loaded, moved, and restacked. What seem like minor mistakes at first can gradually affect weight distribution and lead to unstable stacks.

This also affects day-to-day operations. If a pallet needs to be fixed during loading or transport, it can slow things down and cause delays. The problem becomes more noticeable during handling, especially when forklifts and pallet jacks are involved.

Because this equipment is always in motion, unstable loads make even routine tasks riskier. This can lead to damaged goods, workflow disruptions, or overloaded equipment.

In more serious cases, it can result in worker injuries and impact the wider supply chain, increasing both operational and financial costs.

Many warehouses rely on manual pallet inspection processes, often guided by OSHA standards, safety regulations, and inspection checklists. These methods support pallet safety and proper stacking practices, but they are difficult to apply consistently across busy environments.

One key limitation is that inspections only capture a moment in time. Warehouse operations involve continuous loading, movement, and restacking, but a check records only what a stack looks like when it is performed. This makes it difficult to detect issues that develop between checks, such as gradual misalignment, shifting loads, or early signs of instability.

Some problems are also harder to spot during routine checks. Damaged pallets, broken boards, or small splinters may go unnoticed, even though they can weaken the structure and affect load stability during handling.

Scale adds another layer of difficulty. In large warehouses, it’s challenging to maintain regular inspections across all areas, especially around pallet racking and conveyor zones. These gaps in coverage make it harder to consistently follow safety practices and ensure stable pallet stacking across operations.

Warehouses are starting to adopt computer vision systems to monitor day-to-day operations. These systems rely on models trained on large volumes of labeled images and can continuously track pallet-specific details across different storage areas.

For instance, cutting-edge computer vision models like YOLO26 support vision tasks such as object detection, image classification, oriented bounding box (OBB) detection, pose estimation, and instance segmentation, which can help analyze how pallets and loads are arranged across warehouse spaces.

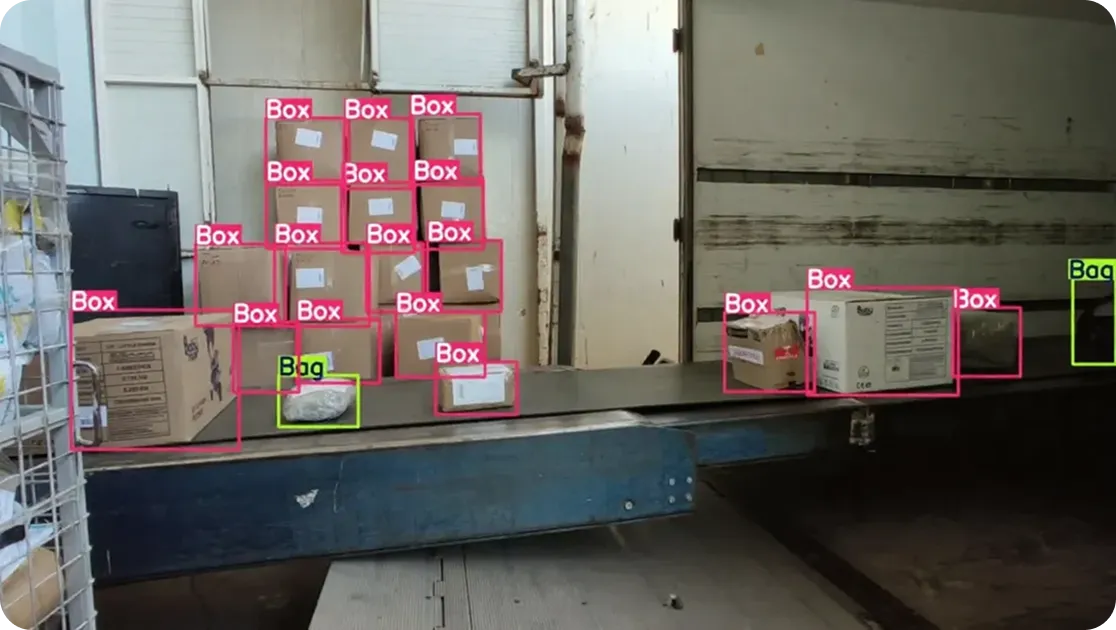

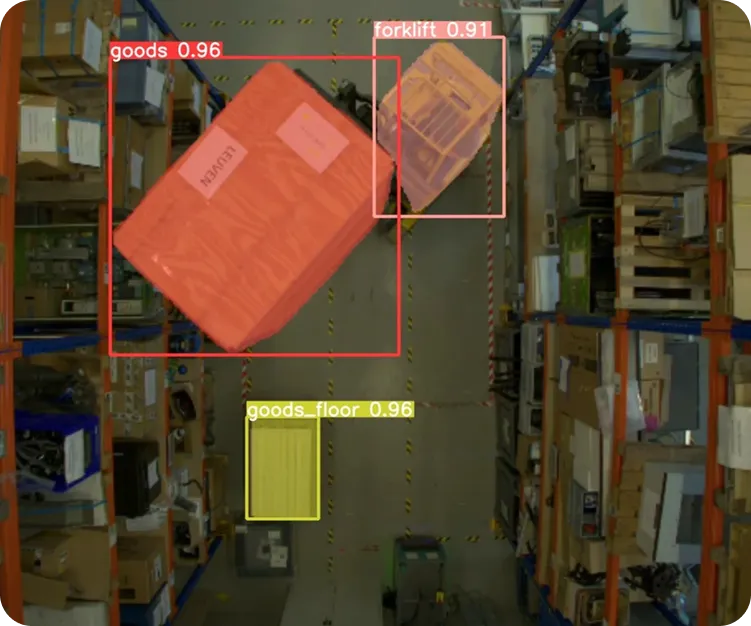

Specifically, object detection can be used to identify and locate pallets, boxes, and handling equipment across aisles and storage zones. This enables systems to keep track of how materials are placed and moved.
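
As a rough illustration of that bookkeeping, the sketch below assigns detections to named floor zones and flags aisles where a pallet or box has come to rest. It is a minimal sketch, not Ultralytics code: it assumes detections have already been converted to plain pixel boxes (with the Ultralytics Python package these would come from `results[0].boxes.xyxy`, `.cls`, and `.conf`), and the `Detection` and `Zone` types, the rectangular zone layout, and the 0.5 confidence cut-off are hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One detected object in pixel coordinates (hypothetical container)."""
    label: str  # class name, e.g. "pallet", "box", "forklift"
    confidence: float
    x1: float
    y1: float
    x2: float
    y2: float

    def center(self) -> tuple:
        return ((self.x1 + self.x2) / 2, (self.y1 + self.y2) / 2)


@dataclass(frozen=True)
class Zone:
    """A floor region in the camera image, assumed rectangular for simplicity."""
    name: str
    x1: float
    y1: float
    x2: float
    y2: float

    def contains(self, point: tuple) -> bool:
        x, y = point
        return self.x1 <= x <= self.x2 and self.y1 <= y <= self.y2


def assign_to_zones(detections, zones, min_conf=0.5):
    """Map each zone name to the confident detections whose box center falls inside it."""
    assignments = {zone.name: [] for zone in zones}
    for det in detections:
        if det.confidence < min_conf:
            continue  # ignore low-confidence detections
        for zone in zones:
            if zone.contains(det.center()):
                assignments[zone.name].append(det)
    return assignments


def blocked_aisles(assignments, aisle_names, blocking_labels=("pallet", "box")):
    """Return the aisles that contain at least one pallet or box."""
    return sorted(
        name
        for name in aisle_names
        if any(det.label in blocking_labels for det in assignments.get(name, []))
    )
```

For example, with an aisle zone spanning 0 to 200 px horizontally, a pallet detected at (50, 300, 150, 420) is counted in that aisle and reported by `blocked_aisles`. Real deployments usually draw zones as polygons per camera; rectangles keep the sketch short.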

Meanwhile, instance segmentation enables precise identification of individual items within a stack by outlining each object at the pixel level. This makes it easier to separate overlapping or closely packed items. In situations where alignment is critical, oriented bounding boxes can be used to assess how loads are positioned, capturing their angles and orientations more accurately than standard axis-aligned boxes.

Similarly, image classification can be used to analyze the overall condition of a pallet or scene and assign labels such as “stable,” “unstable,” or “damaged.” Pose estimation, in turn, detects keypoints to track the position and movement of workers or equipment, making it possible to understand how they interact with pallets and identify potentially unsafe handling.
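
A classifier's output still has to be turned into an action. The sketch below shows one hedged way to do that: take the top-scoring label and escalate unsafe or low-confidence results. It assumes the class probabilities arrive as a plain list (Ultralytics classification results expose them through `results[0].probs`, whose `top1` and `top1conf` give the best class and its score); the label set mirrors the example above, while the 0.6 threshold and the `"alert"` and `"manual_review"` action names are hypothetical.

```python
def triage_pallet(probs, class_names, min_conf=0.6, unsafe=("unstable", "damaged")):
    """Turn classifier probabilities for one pallet image into (label, confidence, action)."""
    if len(probs) != len(class_names):
        raise ValueError("probs and class_names must have the same length")
    top = max(range(len(probs)), key=lambda i: probs[i])  # index of the most likely class
    label, conf = class_names[top], probs[top]
    if conf < min_conf:
        return label, conf, "manual_review"  # model is unsure: send to a person
    if label in unsafe:
        return label, conf, "alert"  # confident unsafe prediction
    return label, conf, "ok"
```

Routing low-confidence predictions to a person, rather than trusting them, keeps the classifier from silently passing borderline pallets.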

Ultralytics YOLO26 is available out of the box as a pre-trained model. In other words, it has already been trained on large datasets, so it can recognize common objects without needing to be built from scratch.

However, warehouse environments introduce their own nuances like varying pallet types, stacking patterns, load conditions, and real-world inconsistencies. This is where the ability to custom-train Ultralytics YOLO models like YOLO26 becomes valuable.

Training a model on warehouse-specific data enables it to better understand these variations and deliver more accurate and reliable results. This process starts with collecting images and video frames from warehouse floors, capturing different stacking conditions across environments.

These images are then annotated (labels are added), for example, by drawing bounding boxes (rectangular boxes) around pallets or marking areas of instability. Once a dataset is prepared using the annotated data, YOLO26 can be trained on these real-world examples, adapting it to variations in layout, lighting, and operations.
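
For bounding-box annotations, Ultralytics YOLO detection datasets store one `.txt` label file per image, with one line per object in the form `class_id x_center y_center width height`, where all four coordinates are normalized by the image width and height. The sketch below converts a pixel-space box from an annotation tool into that line. The class ids used here (for example `0` for pallet) are hypothetical and depend on how a given dataset defines its classes.

```python
def to_yolo_line(class_id, box, img_w, img_h):
    """Convert a pixel box (x1, y1, x2, y2) into a YOLO detection label line."""
    x1, y1, x2, y2 = box
    # Clip to the image so the normalized values stay within [0, 1].
    x1, x2 = max(0.0, min(x1, img_w)), max(0.0, min(x2, img_w))
    y1, y2 = max(0.0, min(y1, img_h)), max(0.0, min(y2, img_h))
    if x2 <= x1 or y2 <= y1:
        raise ValueError(f"box {box} has no area inside a {img_w}x{img_h} image")
    x_center = (x1 + x2) / 2 / img_w
    y_center = (y1 + y2) / 2 / img_h
    width = (x2 - x1) / img_w
    height = (y2 - y1) / img_h
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"
```

For example, a pallet spanning (320, 180) to (960, 540) in a 1280×720 frame becomes `0 0.500000 0.500000 0.500000 0.500000`.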

Training can be done either using the Ultralytics Python package, which provides built-in tools to load data, train models, and run predictions using code, or through Ultralytics Platform, an end-to-end computer vision platform that brings together data management, annotation, training, and deployment in one place.
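
With the Python package, labeled images are usually described to the trainer through a small dataset YAML file that lists the dataset root, the training and validation image folders, and the class names. The minimal sketch below builds that file's contents as a string. The folder layout and class names are assumptions for illustration, and the function does no YAML quoting, so it rejects class names containing characters such as `:` or `#` instead of escaping them.

```python
def dataset_yaml(root, class_names, train="images/train", val="images/val"):
    """Build an Ultralytics-style dataset YAML string (plain class names only)."""
    for name in class_names:
        if any(ch in name for ch in ":#\n"):
            raise ValueError(f"class name needs YAML quoting: {name!r}")
    lines = [f"path: {root}", f"train: {train}", f"val: {val}", "names:"]
    lines += [f"  {index}: {name}" for index, name in enumerate(class_names)]
    return "\n".join(lines) + "\n"
```

Once saved, for example with `Path("pallets.yaml").write_text(dataset_yaml("datasets/pallets", ["pallet", "box", "forklift"]))`, the file could be passed to training with `YOLO("yolo26n.pt").train(data="pallets.yaml", epochs=100, imgsz=640)`, assuming the YOLO26 nano weights are published under that name. The epoch count and image size are placeholders, not tuned values.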

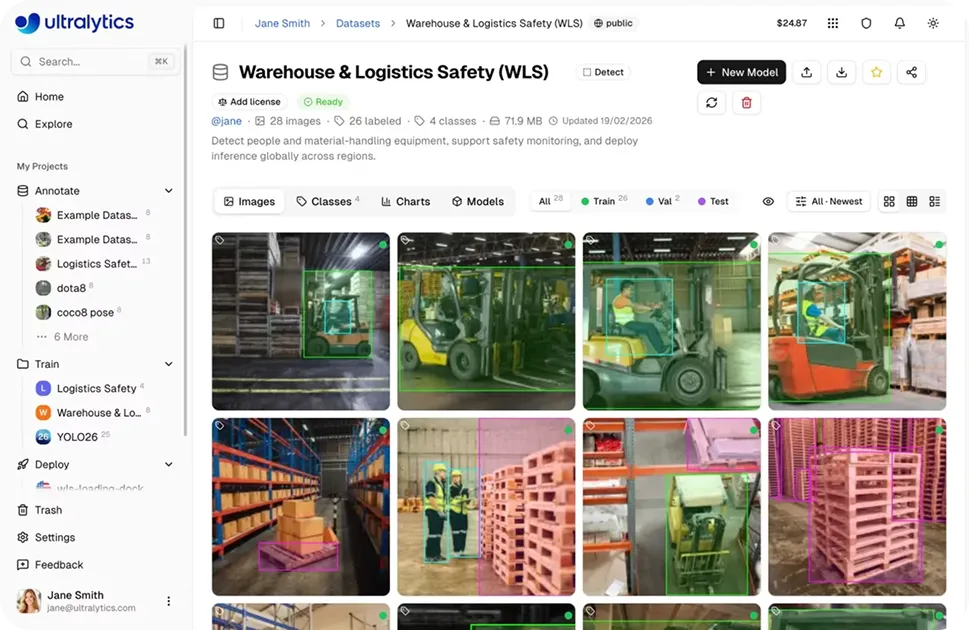

Managing computer vision workflows, from dataset preparation and annotation to training, evaluation, and deployment, can be complex. Ultralytics Platform tackles this challenge by bringing these steps into a single environment.

For example, users can organize and label image data from warehouse environments and use it to train models on real-world scenarios. This enables models to learn how pallets appear across different layouts, lighting conditions, and stacking styles, making them more accurate and reliable in real operations.

Once trained, models can be tested on new, unseen images using the built-in Predict tab to verify performance before deployment.

After validation, models can be deployed in different ways through Ultralytics Platform, depending on the use case, including shared inference for development and testing, dedicated endpoints for production deployments, or by exporting models to run on external systems or edge devices.

When you are building a vision-driven pallet monitoring system, camera placement directly affects how reliably stacking issues are captured, and a well-planned setup makes automated monitoring more dependable.
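
One way to reason about placement is pixel density: a camera mounted too far from a stack, or fitted with too wide a lens, leaves each pallet only a few pixels across, which makes small tilts hard to resolve. The sketch below applies the standard pinhole-camera relation, in which the scene extent covered at distance d with field of view θ is 2·d·tan(θ/2). It assumes a view roughly perpendicular to the stack with no lens distortion, and the minimum pixel count you target is a design choice, not an Ultralytics requirement.

```python
import math


def coverage_m(distance_m, fov_deg):
    """Scene extent covered at a given distance along one image axis (pinhole model)."""
    if distance_m <= 0 or not 0 < fov_deg < 180:
        raise ValueError("distance must be positive and the FOV between 0 and 180 degrees")
    return 2 * distance_m * math.tan(math.radians(fov_deg) / 2)


def pixels_on_target(object_m, distance_m, fov_deg, image_px):
    """Approximate number of pixels an object spans along the same image axis."""
    return object_m * image_px / coverage_m(distance_m, fov_deg)


def max_distance_m(min_px, object_m, fov_deg, image_px):
    """Farthest distance at which the object still spans at least `min_px` pixels."""
    return object_m * image_px / (min_px * 2 * math.tan(math.radians(fov_deg) / 2))
```

For example, with a 90° field of view and 1920 pixels across the image, a 1.2 m wide load 10 m away spans about 115 pixels; `max_distance_m` inverts the same relation to find how far back a camera can sit for a chosen pixel budget.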

Here are a few practical considerations for camera placement:

Next, let’s walk through some practical examples of how vision AI is used in warehouses to spot and handle common pallet stacking issues.

Stacking height limits define how high pallet stacks can be built safely, especially in storage areas where pallets are stacked close together to make the most of available space. These limits help prevent unstable loads and maintain safe clearance around pallet racks and overhead systems such as sprinklers.

However, these limits can be exceeded during busy periods like high-volume inbound operations. To keep a closer eye on such activity, models like YOLO26 can analyze camera feeds to detect and count individual pallets and track how the stack grows over time.

By monitoring the number and position of detected pallets, a vision-enabled system can estimate the overall stack height and identify when it approaches or exceeds safe limits. This gives warehouse operators early visibility into potential issues, making it possible for them to adjust stacking or redistribute loads before they become a safety risk.
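
The counting approach can be sketched in a few lines. The example below groups detected pallet boxes into stacks by how much they overlap horizontally, multiplies each stack's pallet count by an assumed height per loaded pallet level, and compares the result with a limit. It is a simplified illustration, not Ultralytics' or any site's actual method: it assumes a camera facing the stacks with every level visible, and the unit height, limit, and 90% warning margin are hypothetical values a warehouse would replace with its own.

```python
def group_into_stacks(boxes, min_overlap=0.5):
    """Group pallet boxes (x1, y1, x2, y2) into stacks by horizontal overlap."""
    stacks = []
    for box in sorted(boxes, key=lambda b: b[0]):  # scan left to right
        x1, _, x2, _ = box
        for stack in stacks:
            sx1 = min(b[0] for b in stack)
            sx2 = max(b[2] for b in stack)
            overlap = min(x2, sx2) - max(x1, sx1)
            # Same stack if the overlap covers most of the narrower span.
            if overlap >= min_overlap * min(x2 - x1, sx2 - sx1):
                stack.append(box)
                break
        else:
            stacks.append([box])  # no matching stack: start a new one
    return stacks


def check_stack_heights(boxes, unit_height_m, limit_m, warn_ratio=0.9):
    """Estimate each stack's height from its pallet count and compare it with a limit."""
    report = []
    for stack in group_into_stacks(boxes):
        height_m = len(stack) * unit_height_m
        if height_m > limit_m:
            status = "over_limit"
        elif height_m >= warn_ratio * limit_m:
            status = "near_limit"
        else:
            status = "ok"
        report.append({"pallets": len(stack), "height_m": height_m, "status": status})
    return report
```

Running the same check on consecutive frames would show a stack moving from `ok` to `near_limit` as pallets are added, which is the kind of early visibility described above.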

When a pallet is stacked to the right height but not balanced properly, it can still become unstable. Uneven weight distribution, loosely placed boxes, or slight misalignment can cause a loaded pallet to lean gradually.

These changes are often subtle at first and may not be obvious during routine checks. With computer vision models like YOLO26, however, these checks can run continuously on camera feeds.

For example, YOLO26’s support for oriented bounding boxes (OBBs) makes it easy to capture the angle and orientation of each pallet or box, rather than just its position. By tracking these orientations over time, a monitoring system can detect small shifts such as slight tilts or changes in alignment.

As these angles begin to drift away from vertical alignment or become inconsistent across layers, it can indicate that a stack is starting to lean. When imbalances are detected early, they can be corrected before they escalate.
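
A minimal sketch of that check follows. Ultralytics OBB results expose each box as center, size, and rotation in radians through `results[0].obb.xywhr`; the sketch takes those rotation values as plain floats. Because a rectangle rotated by 90° is the same shape, it measures each box's deviation from the nearest axis-aligned orientation, so it does not depend on which angle range the model reports. It only sees lean in the image plane, so a stack leaning directly toward or away from the camera will not register, and the 3° tilt, 2° layer-spread, and 1° drift thresholds are hypothetical.

```python
import math
from statistics import mean


def signed_tilt_deg(rotation_rad):
    """Signed deviation of a box from the nearest axis-aligned orientation, in [-45, 45) degrees."""
    quarter_turn = math.pi / 2
    deviation = (rotation_rad + math.pi / 4) % quarter_turn - math.pi / 4
    return math.degrees(deviation)


def assess_stack(layer_rotations_rad, max_tilt_deg=3.0, max_spread_deg=2.0):
    """Flag a stack whose layers are tilted or inconsistent with each other."""
    tilts = [signed_tilt_deg(r) for r in layer_rotations_rad]
    worst = max(abs(t) for t in tilts)
    spread = max(tilts) - min(tilts)  # disagreement between layers
    return {
        "worst_tilt_deg": worst,
        "layer_spread_deg": spread,
        "leaning": worst > max_tilt_deg or spread > max_spread_deg,
    }


def is_drifting(tilt_history_deg, window=5, drift_deg=1.0):
    """Flag a stack whose recent mean tilt has moved away from its early baseline."""
    if len(tilt_history_deg) < 2 * window:
        return False  # not enough history yet
    baseline = mean(tilt_history_deg[:window])
    recent = mean(tilt_history_deg[-window:])
    return abs(recent - baseline) > drift_deg
```

Checking both the worst single-layer tilt and the spread between layers matters because a stack can look acceptable layer by layer while its layers disagree with one another.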

Here are some of the key benefits of using vision-based systems for pallet stacking:

While using vision AI for pallet stacking offers many advantages, here are some limiting factors to keep in mind:

Unsafe pallet stacking usually doesn’t become a problem immediately. It builds over time through small misalignments and shifting loads. With continuous visual monitoring, these subtle changes can be caught early, making it easier to act before issues escalate. Models like YOLO26 support this by enabling fast, real-time detection.

Want to explore vision AI further? Visit our GitHub repository to learn more. Join our community and explore applications like AI in logistics and vision AI in retail. Check out our licensing options to get started!