Ultralytics YOLO26 vs YOLO11 vs YOLOv8: Which one should you use?

Explore Ultralytics YOLO26 vs Ultralytics YOLO11 vs Ultralytics YOLOv8 and discover which computer vision model you should choose for your projects.

Cutting-edge computer vision systems, often powered by convolutional neural networks (CNNs), enable machines to analyze and interpret visual data from images and videos, and are now being deployed across a wide range of environments.

From agriculture to manufacturing and retail, these systems operate across a range of deployment environments, including edge devices, embedded hardware, Internet of Things (IoT) devices, on-device processing, and large-scale cloud pipelines that support real-time applications.

In real-world use, deploying these models isn't always straightforward. They often need to run with limited compute, meet strict latency requirements, and scale without significantly increasing costs. These constraints make performance a multi-dimensional problem rather than being about accuracy alone.

While accuracy is still important, it is equally important for a model to run efficiently in production. Factors like speed, resource usage, and scalability play a big role in how well a system performs over time.

Computer vision models like Ultralytics YOLO models have evolved with this balance in mind. For example, Ultralytics YOLOv8 established a strong and versatile foundation, Ultralytics YOLO11 took it a step further with improved speed and accuracy, and Ultralytics YOLO26 builds on this by being lighter, faster, and more efficient than ever before.

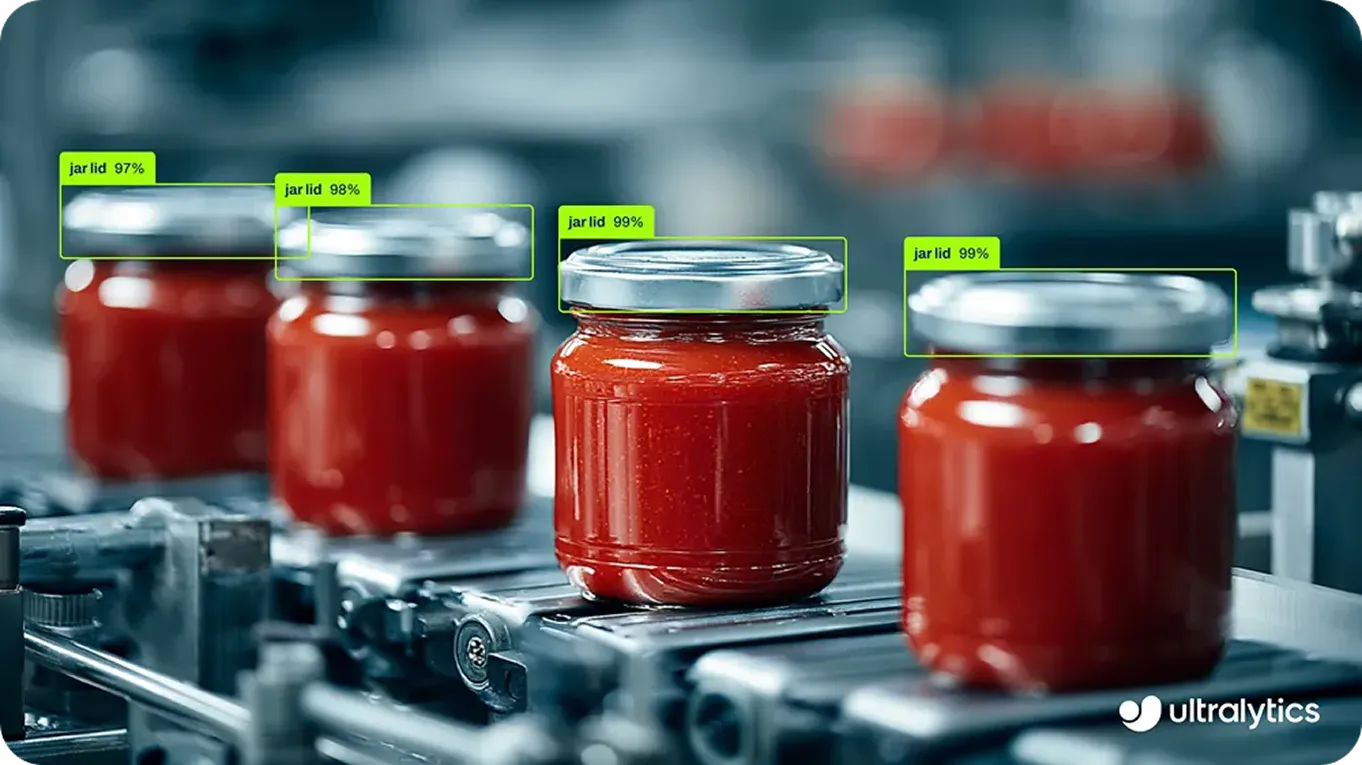

Fig 1. Using Ultralytics YOLO26 to detect objects in an image (Source)

In this article, we'll compare Ultralytics YOLO26 vs YOLO11 vs YOLOv8 to help you choose the right model for your computer vision project. Let's get started!

Link to this sectionUnderstanding how Ultralytics YOLO models have evolved#

Each iteration of Ultralytics YOLO models has introduced improvements to better meet real-world requirements and make computer vision more accessible. These updates have made the models faster, more efficient, and easier to deploy, supporting the growth of the vision AI ecosystem.

They are also built on PyTorch, making them easy to train, customize, and integrate into intelligent machine learning workflows. Out of the box, Ultralytics YOLO models are available as pre-trained models, often trained on datasets like the COCO dataset, allowing teams to get started quickly and fine-tune them for specific use cases.

In addition to this, the Ultralytics Python package simplifies deployment by providing built-in support for exporting models to formats like ONNX and TensorRT. This makes it easier to integrate models across different hardware platforms, from edge devices to GPU-accelerated systems.

Link to this sectionGoing from Ultralytics YOLOv5 to Ultralytics YOLO26#

The first Ultralytics YOLO model, Ultralytics YOLOv5, became widely popular for its reliable object detection capabilities. Built on a one-stage detection approach, it enabled fast, real-time predictions in a single pass, making it well-suited for production workflows.

Later updates introduced anchor-free variants, where the model predicts object locations directly instead of using predefined anchor boxes, making detection more flexible. However, the original model remained primarily focused on object detection tasks.

Building on this foundation, YOLOv8 expanded the scope of the model family. Instead of focusing only on object detection, it added support for multiple computer vision tasks such as instance segmentation, image classification, pose estimation, and oriented bounding box (OBB) detection. It also brought architectural improvements, including advanced backbone and neck designs, which enhanced feature extraction and overall detection performance.

Beyond this, variants such as YOLOv8n (Nano), YOLOv8s (Small), YOLOv8m (Medium), YOLOv8l (Large), and YOLOv8x (Extra Large) gave developers the flexibility to balance speed, accuracy, and resource usage based on their needs. This broader capability, combined with its ease of use, made it a go-to choice for a wide range of vision applications.

Fig 2. YOLO models like YOLOv8, YOLO11, and YOLO26 support a range of vision tasks.

Following this, YOLO11 focused on improving performance in real-world workflows, delivering higher accuracy along with faster inference speeds. With a lighter architecture, it works well across both edge and cloud environments while being compatible with existing YOLOv8 pipelines.

The latest addition to the Ultralytics YOLO model family, YOLO26, is a state-of-the-art model that sets a new standard for edge-first vision AI, delivering a lighter, faster, and more efficient approach to real-world deployment. It is designed to run efficiently on CPUs and embedded systems while simplifying deployment and improving real-time performance across a wide range of applications.

Link to this sectionComparing YOLO26 vs YOLO11 vs YOLOv8#

When working on computer vision projects, you might come across different Ultralytics models and wonder which one is right for your project. Let’s walk through how YOLO26 vs YOLO11 vs YOLOv8 compare in real-world scenarios.

YOLOv8 was released in 2023 and has been widely used by the computer vision community since then. Its strong community support and ease of use made it a go-to model for many teams in the past. If you are looking for a well-documented model with a wide range of tutorials, guides, and community resources, YOLOv8 is a great starting point.

In 2024, YOLO11 was introduced with improvements in both performance and efficiency. It offers better speed and accuracy compared to YOLOv8, while maintaining a smaller and more optimized architecture. It is a more balanced model that performs reliably in production without significantly increasing resource usage.

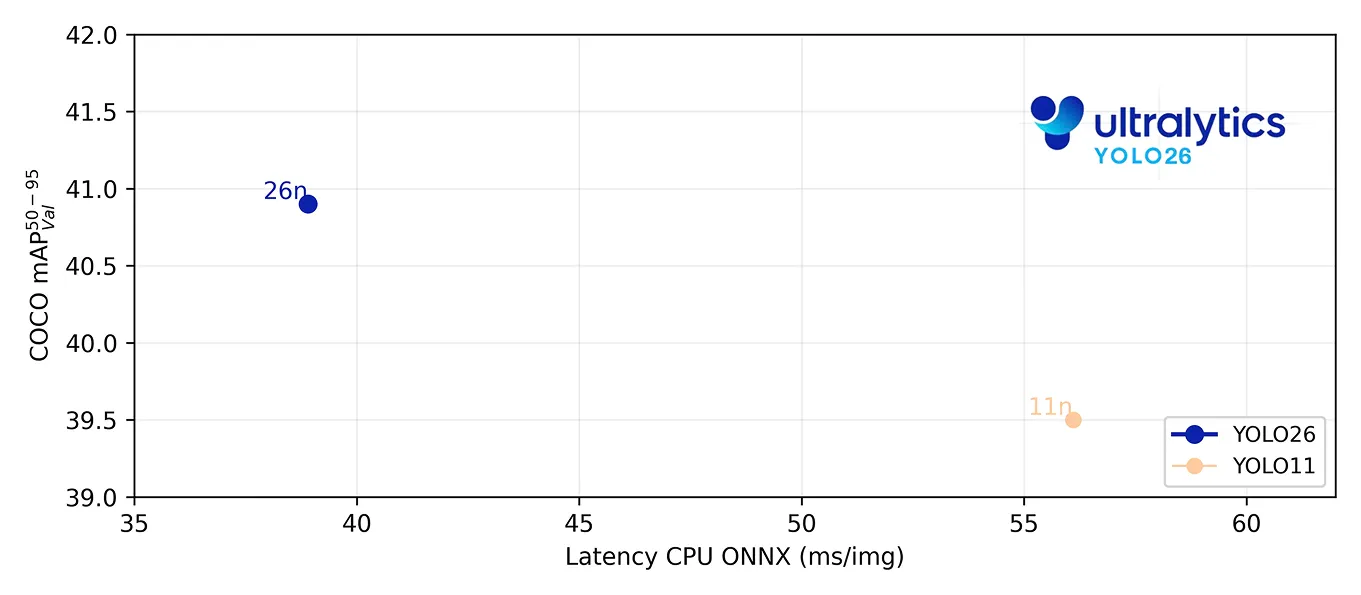

This year, YOLO26 was released as the latest iteration, focusing on efficient deployment at scale. It delivers faster CPU inference and improved resource utilization, enabling teams to run more workloads on the same hardware.

For instance, the YOLO26 nano model can achieve up to 43% faster inference than YOLO11 on central processing units (CPU), making it a great option for edge and resource-constrained environments. This is especially vital because traditional setups often rely heavily on graphics processing units (GPUs), which can be costly and harder to scale.

Fig 3. Benchmarking how YOLO26 performs on CPUs (Source)

Overall, YOLO26 is a solid choice for teams and individuals looking to optimize trade-offs between performance, cost, and scalability.

Link to this sectionA closer look at Ultralytics YOLO26#

YOLO26 is a state-of-the-art model designed for real-world deployment, where efficiency, speed, and scalability matter as much as accuracy. Instead of focusing only on improving benchmark performance, it introduces architectural and training changes that make models easier to run, faster to deploy, and more reliable across different hardware environments.

These improvements are especially important for edge and production systems, where limited compute, latency constraints, and cost considerations play a key role. By simplifying inference and optimizing performance, YOLO26 enables AI enthusiasts to build and scale vision applications more efficiently.

Here’s a closer look at some of YOLO26’s key features:

- End-to-end NMS-free inference: One of the crucial changes is its Non-Maximum Suppression-free (NMS) design, which removes the need for post-processing. In simple terms, the model produces final predictions directly. As a result, latency becomes more predictable, and deployment gets easier.

- DFL removal: YOLO26 moves away from the Distribution Focal Loss (DFL) module toward a simpler bounding box prediction approach. This change aligns with its end-to-end, NMS-free design, reducing pipeline complexity and improving deployment consistency.

- MuSGD optimizer: The latest Ultralytics YOLO models introduce MuSGD, a hybrid optimizer that combines Stochastic Gradient Descent (SGD) with Muon-inspired updates. This improves training stability and convergence, leading to smoother optimization and more consistent behavior across different model sizes.

- ProgLoss and STAL: These training innovations, Progressive Loss Balancing (ProgLoss) and Small-Target-Aware Label Assignment (STAL), make the model more stable and reliable. ProgLoss helps the model learn from datasets in stages over time, while STAL ensures small objects aren’t ignored during training, improving detection in complex scenes.

Link to this sectionAccuracy vs efficiency: Beyond benchmarks to real-world performance#

To put the differences between YOLO26, YOLO11, and YOLOv8 into context, let’s get a better understanding of the factors that drive model performance in real-world use.

Accuracy, often measured by performance metrics like mean average precision (mAP), has been an important way to evaluate computer vision models for a long time. It shows how well a model performs under standardized conditions and is useful when comparing different versions.

However, once models move from testing to real-world deployment, just accuracy isn't enough. Production performance depends on factors like model size, inference time or latency, compute usage, and how well a system can scale across different environments.

Unlike controlled benchmarks, real-world environments are often unpredictable. Lighting conditions can change, objects may be partially visible, and input data can vary significantly from what the model was trained on. These variations can affect how consistently a model performs in practice.

Fig 4. An example of YOLO26 being used in an unpredictable environment, like a construction site.

For example, consider a setup with hundreds of cameras in a smart city, retail store, or warehouse. Each stream needs to be processed in real time, often requiring consistent frame rates (frames per second, or FPS) to avoid delays or dropped frames.

A less efficient model can handle fewer concurrent streams on a given system, which means scaling typically requires additional hardware and increases infrastructure costs.

More efficient models, like YOLO26, can process more streams on the same hardware, making better use of available resources. This improves overall system efficiency and makes it easier to scale deployments over time.

To dive deeper into YOLO26 vs YOLO11 vs YOLOv8, check out the official Ultralytics docs.

Link to this sectionKey takeaways#

The Ultralytics YOLO model series has evolved to better match real-world deployment needs. Each version builds on the last, with a growing focus on efficiency, scalability, and ease of deployment. In other words, if you are building a real-time detection application that needs to run reliably at scale, Ultralytics YOLO26 is a perfect choice.

Looking to bring vision AI into your operations? Check out our licensing options. You can also visit our solution pages to see how AI in manufacturing is transforming factories and how vision AI in robotics is shaping the future. Join our growing community and explore our GitHub repository for AI resources.