Ultralytics Platform: Five tools, one computer vision platform

Discover how the Ultralytics Platform replaces five tools with one computer vision platform for annotation, model training, testing, and deployment.

Today, we launched Ultralytics Platform, the ultimate end-to-end computer vision platform designed to simplify how vision AI systems are built and deployed. While computer vision, a field of artificial intelligence that enables machines to interpret images and videos, already powers many systems we rely on today, building these solutions has traditionally been complex.

For many AI engineers and machine learning developers, building a computer vision application still involves switching between several tools throughout the development process. A team might manage datasets and annotation in one platform, run model training in another, and rely on additional services for testing predictions, tracking experiments, and deploying systems into production.

As projects grow, switching tools can slow development and add operational overhead. Instead of focusing on improving models and building new computer vision apps, teams often spend time managing workflows, moving data between tools, and configuring infrastructure.

Ultralytics Platform was created to streamline and accelerate this process. By bringing annotation, training, validation, deployment, and monitoring into one environment, it replaces multiple tools across the AI vision stack with a single computer vision platform, helping teams build and deploy scalable vision AI systems more efficiently.

In this article, we’ll explore how Ultralytics Platform replaces multiple tools with one unified computer vision platform. Let's get started!

Building a computer vision solution involves several stages, from preparing datasets to deploying systems in production. In many cases, teams rely on a different tool for each part of this workflow: dataset management and annotation, model training, prediction testing, experiment tracking, and deployment.

Managing these tools separately can make development workflows harder to coordinate. Teams end up spending time moving data between platforms, maintaining integrations, and configuring infrastructure instead of focusing on improving computer vision applications.

Before we dive into Ultralytics Platform’s key features and what it can do, let's understand what we mean by an end-to-end computer vision platform.

Simply put, Ultralytics Platform provides one place where developers can build and run computer vision applications. Instead of relying on separate services for different parts of the development process, individuals and teams can work with visual data, train models and algorithms, test results, and run applications within the same environment.

This approach makes it easier for developers to experiment, improve their systems, and move projects forward without constantly switching between tools.

Ultralytics Platform was shaped by years of working closely with the computer vision community. Our conversations with developers and teams building vision AI systems kept bringing up a few common challenges.

For example, one key concern was data annotation, which can take significant time when large datasets need to be labeled. Another challenge appeared when teams tried moving systems into production, where deploying applications across different environments and hardware setups often requires additional tooling.

Many teams also deal with tool switching, since annotation tools, training environments, and deployment systems are frequently spread across multiple platforms. Ultralytics Platform addresses these challenges with a range of built-in features.

So let’s look at how Ultralytics Platform’s key functions, which span annotation, training, validation, deployment, and monitoring, address these challenges and streamline the overall vision AI workflow.

As you learn more about Ultralytics Platform, you might wonder what working with it actually looks like. To get a better idea, let’s walk through a simple example.

Consider building a visual inspection system for a manufacturing line. The goal is to automatically identify damaged or faulty products as they move through production.

The process typically starts with collecting visual data. Using Ultralytics’ new computer vision platform, you can upload images or videos of products from the production line and organize them into datasets that will be used to train a model for defect detection.

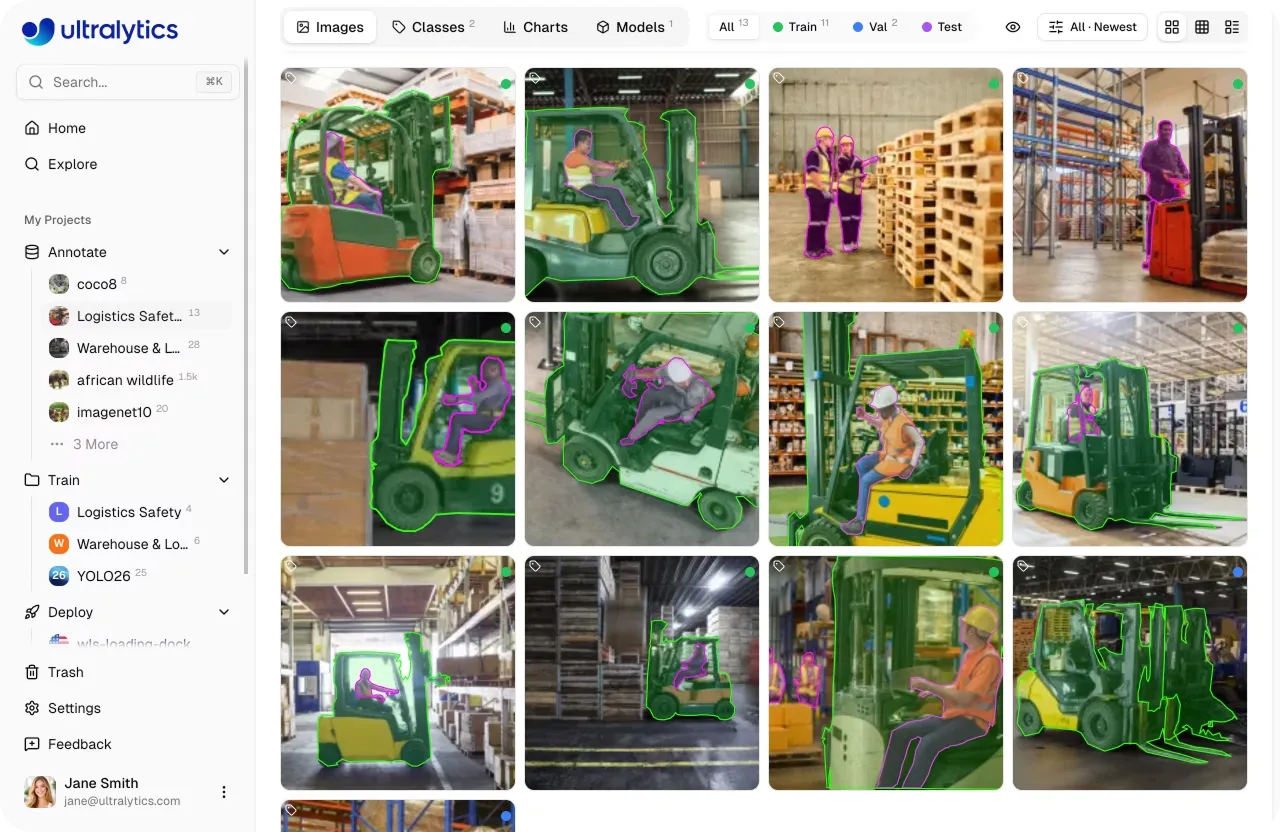
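To make the idea of organizing images into datasets more concrete, here is a minimal sketch of one common preparation step: holding out part of the images for validation. It is an illustration only and does not describe how the platform organizes datasets internally. The function name `split_dataset`, the 20% validation fraction, and the file names are all assumptions made for this example.

```python
import random


def split_dataset(image_names, val_fraction=0.2, seed=0):
    """Shuffle image names reproducibly and split them into (train, val) lists."""
    if not 0.0 < val_fraction < 1.0:
        raise ValueError("val_fraction must be between 0 and 1")
    names = sorted(image_names)          # sort first so input order does not matter
    random.Random(seed).shuffle(names)   # seeded shuffle keeps the split repeatable
    n_val = max(1, round(len(names) * val_fraction))
    return names[n_val:], names[:n_val]


# Hypothetical file names standing in for production-line photos.
images = [f"part_{i:03d}.jpg" for i in range(10)]
train, val = split_dataset(images, val_fraction=0.2, seed=42)
print(len(train), len(val))  # prints: 8 2
```

Fixing the seed means the same images land in the validation set on every run, which keeps later comparisons between trained models fair.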

Next comes data annotation. With the platform’s built-in manual and AI-powered annotation tools, you can label defects directly in the images, with support for five computer vision tasks. Features like smart annotation, powered by SAM, and built-in pose skeleton templates, which allow keypoints to be placed with a single click, streamline work that would otherwise take hours.
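For readers curious about what a detection label looks like once it is saved, the sketch below converts a pixel bounding box into the text format commonly used for Ultralytics YOLO detection datasets: a class index followed by the box center, width, and height, each normalized by the image size. The helper name `to_yolo_label` and the example box are assumptions for illustration; the platform's annotation tools produce labels for you, so this is not something you would normally write by hand.

```python
def to_yolo_label(class_id, box, image_width, image_height):
    """Convert a pixel box (x_min, y_min, x_max, y_max) to a YOLO label line."""
    x_min, y_min, x_max, y_max = box
    if not (0 <= x_min < x_max <= image_width and 0 <= y_min < y_max <= image_height):
        raise ValueError("box must lie inside the image and have positive area")
    # Normalize the center point and size to the 0-1 range.
    x_center = (x_min + x_max) / 2 / image_width
    y_center = (y_min + y_max) / 2 / image_height
    width = (x_max - x_min) / image_width
    height = (y_max - y_min) / image_height
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# Hypothetical example: a scratch (class 0) on a 640x480 product photo.
label = to_yolo_label(0, (160, 120, 480, 360), 640, 480)
print(label)  # prints: 0 0.500000 0.500000 0.500000 0.500000
```

Because the values are normalized, the same label stays valid if the image is resized before training.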

Once the dataset is ready, you can move on to model training. The platform allows you to train computer vision models, such as Ultralytics YOLO models, using the labeled data. During training, you can monitor performance metrics, track experiments, and optimize models over time to improve system performance from a single dashboard.

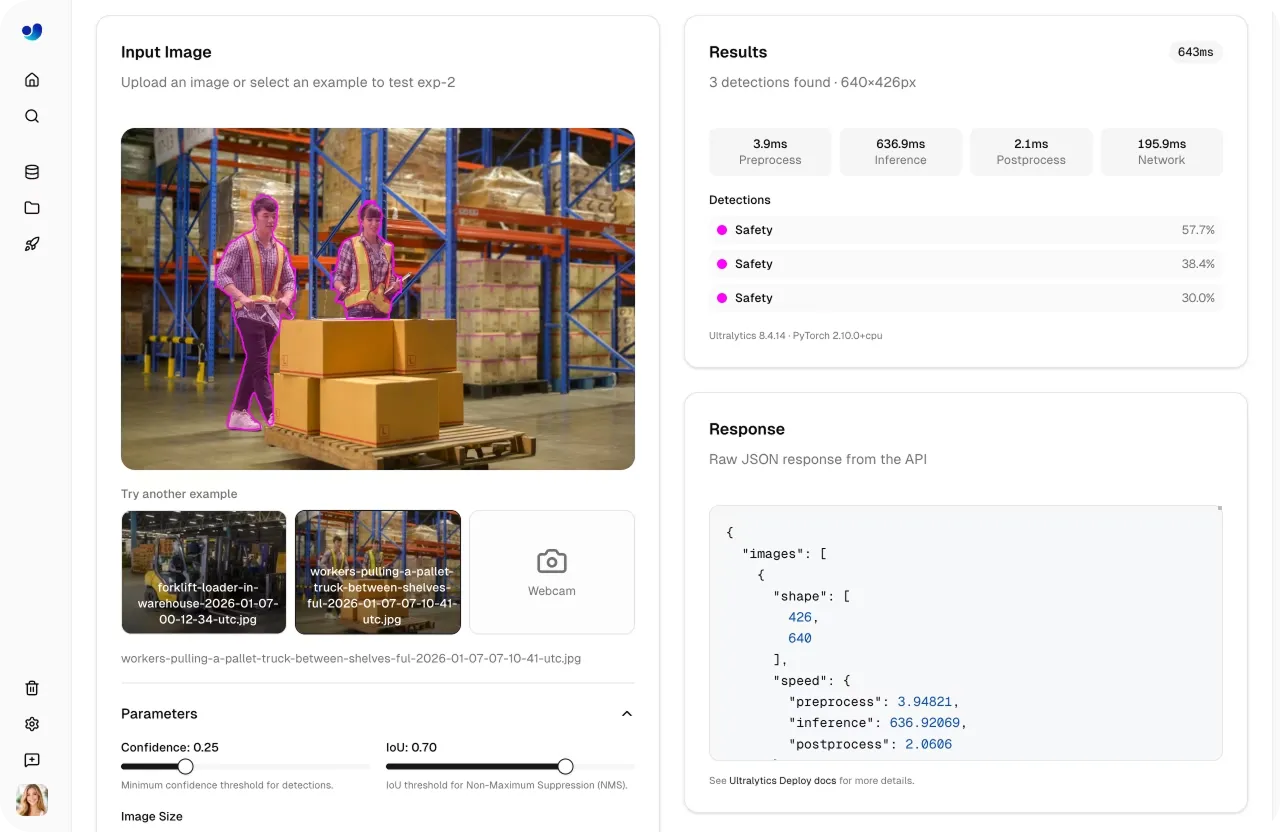

After training, the next step is testing and validation. You can run predictions on new images directly within the platform to check how well the system detects defects and identify areas where further improvements may be needed.

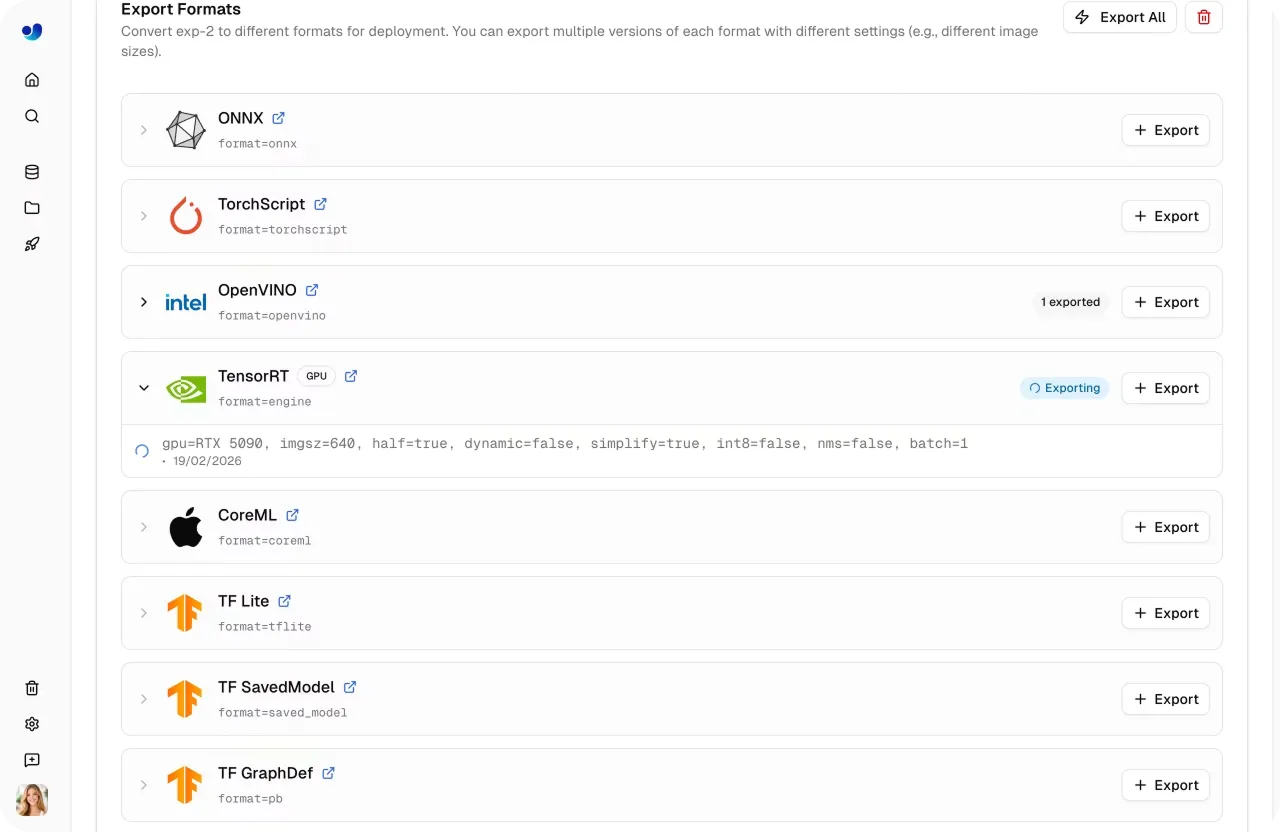

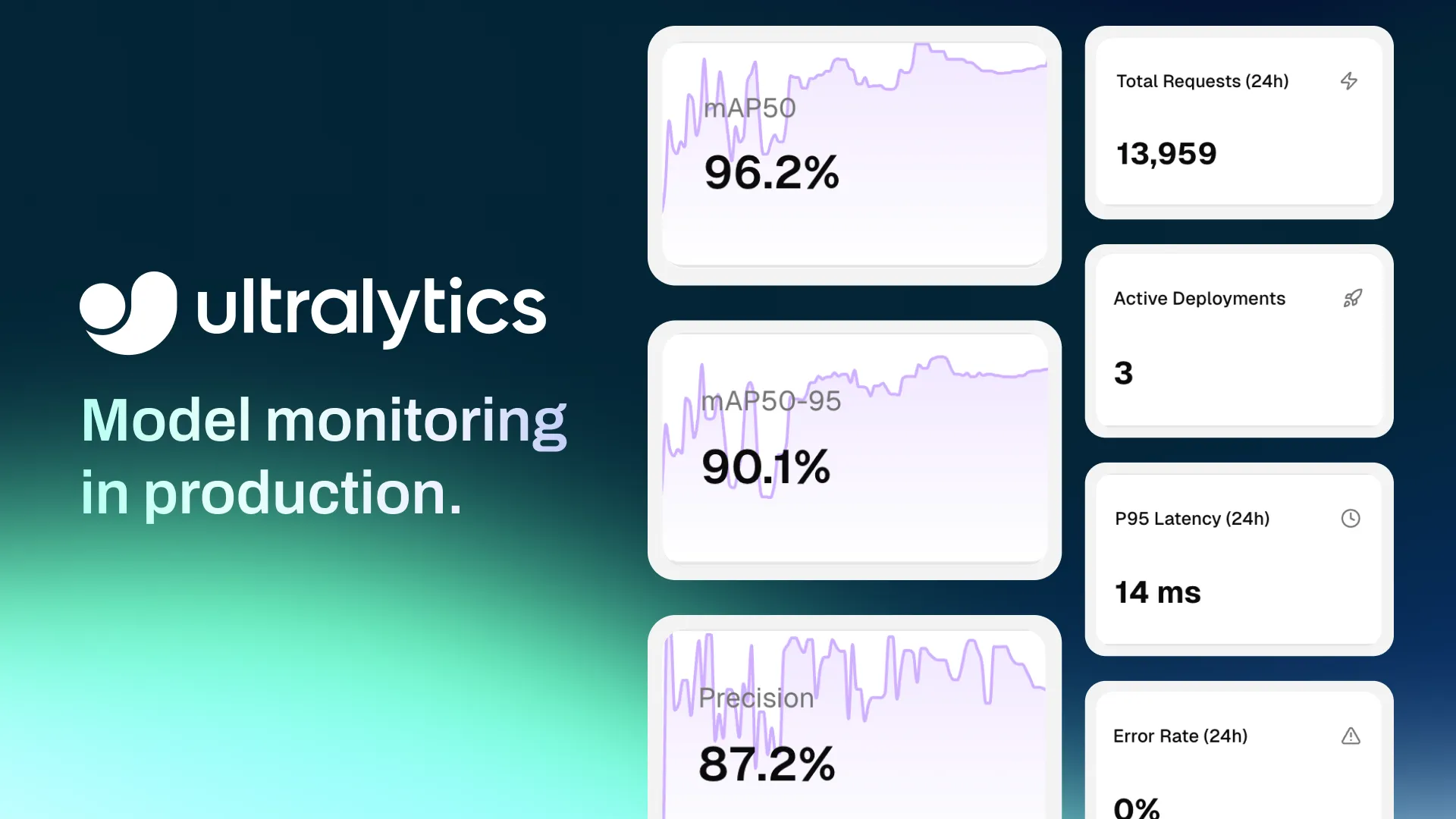
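One common way to put a number on "how well the system detects defects" is to compare predicted boxes with labeled boxes using intersection over union (IoU), then report precision and recall. The sketch below is a simplified version of that idea, not the platform's own validation code: it ignores confidence scores and class labels, matches boxes greedily, and uses an assumed IoU threshold of 0.5. All function names and box coordinates are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(predictions, ground_truth, iou_threshold=0.5):
    """Greedily match each prediction to at most one unmatched ground-truth box."""
    unmatched = list(ground_truth)
    true_positives = 0
    for pred in predictions:
        best = max(unmatched, key=lambda gt: iou(pred, gt), default=None)
        if best is not None and iou(pred, best) >= iou_threshold:
            unmatched.remove(best)   # each labeled defect can be matched only once
            true_positives += 1
    precision = true_positives / len(predictions) if predictions else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Hypothetical boxes: two labeled defects and three predicted boxes.
labeled = [(10, 10, 50, 50), (100, 100, 150, 150)]
predicted = [(12, 12, 50, 50), (200, 200, 240, 240), (300, 300, 320, 320)]
precision, recall = precision_recall(predicted, labeled)
print(round(precision, 3), recall)  # prints: 0.333 0.5
```

In this made-up case, low precision points to false alarms and low recall points to missed defects, which are the two kinds of "areas where further improvements may be needed".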

Finally, when the system performs well, it can be deployed into production. Ultralytics Platform supports exporting models to multiple formats or deploying them through inference services and endpoints so they can run in real-world environments.

By supporting each step of this pipeline, Ultralytics Platform makes it easier to move from raw visual data to a working computer vision application that can detect defects automatically on a production line.

In most applications where visual data can be converted into information and used to automate processes, computer vision can make a difference. This is true across industries, from healthcare to the automotive industry, and Ultralytics Platform has been built to support this versatility.

The platform natively supports state-of-the-art models like Ultralytics YOLO26 and a range of computer vision tasks, including object detection, image classification, instance segmentation, pose estimation, and oriented bounding box (OBB) detection. Because of this flexibility, developers can build applications for many different scenarios where images or videos need to be analyzed.

For example, teams can create systems for real-time underwater monitoring in marine environments, cell counting in medical and biological research, tracking wildlife in remote ecosystems, enabling perception systems for autonomous vehicles, and guiding robots through complex environments. And that’s only scratching the surface of what’s possible with computer vision.

As computer vision becomes more widely used, making vision AI development more accessible is becoming increasingly important. Many developers and organizations want to experiment with visual data and build AI applications, but traditional development setups can make it difficult to get started.

Ultralytics Platform helps lower these barriers by providing an environment where developers can quickly start working with computer vision technology. Instead of spending time setting up infrastructure or integrating different tools, teams can focus on experimenting with ideas and building practical applications.

This accessibility opens the door for a wider range of developers, researchers, and organizations to explore vision AI. As a result, more teams can turn visual data into meaningful insights and create applications that solve real-world problems.

As vision AI continues to expand across industries, we believe that the Ultralytics Platform will make development more approachable and will play a key role in shaping the future of computer vision.

Start building computer vision projects with Ultralytics Platform today. You can explore the platform through the free plan, which includes signup credits for cloud training and access to core tools for managing datasets, annotating images, training models, and deploying applications.

As your projects grow, you can scale your usage with additional plans that offer more compute resources, storage, collaboration features, and deployment capacity. The platform also uses a credit-based pricing system for services like cloud training and managed endpoints, enabling teams to run experiments and deploy applications while transparently keeping track of usage.

Image processing and computer vision technology are rapidly moving from research experiments to real-world systems that power everyday technology. Ultralytics Platform helps accelerate this shift by giving developers a simpler way to build, test, and deploy vision AI applications. With fewer barriers between ideas and deployment, the next generation of computer vision solutions can be built faster than ever before.

Join our community and explore the GitHub repository to learn more about computer vision models. Read about applications like AI in agriculture and computer vision in robotics on our solutions pages. Check out our licensing options and get started with building your own Vision AI model.
