Large Action Models (LAM)

Explore Large Action Models (LAM) and how they drive autonomous AI agents. Learn to integrate Ultralytics YOLO26 for vision-to-action workflows and task automation.

Large Action Models (LAMs) are a class of generative artificial intelligence designed to go beyond text generation by autonomously executing tasks and interacting with digital environments. Unlike traditional models that strictly process and produce text, LAMs act as the core cognitive engine for AI agents, translating human intent into concrete, multi-step actions. By bridging the gap between natural language understanding and real-world execution, these models represent a step toward Artificial General Intelligence (AGI) and highly autonomous systems.

How Large Action Models Work

LAMs build on the architecture of foundation models, but they are specifically trained to interface with software, APIs, and web environments. Using techniques like reinforcement learning and function calling, an LAM can break a complex user request into logical steps, navigate graphical user interfaces, and call API endpoints. For example, Anthropic's Claude 3.5 computer use and Salesforce's xLAM family demonstrate how these systems can autonomously click buttons, fill out forms, and manage workflows much as a human operator would.
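The function-calling pattern described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not how any particular LAM is implemented: the `FILES` data, the `list_files` and `move_file` tools, the `TOOLS` registry, and the hard-coded `plan` that stands in for model output are all hypothetical.

```python
import json
from typing import Any, Callable

# In-memory stand-in for a real file system (hypothetical data)
FILES: dict[str, list[str]] = {"inbox": ["report.pdf", "notes.txt"], "archive": []}


def list_files(folder: str) -> list[str]:
    """Return a copy of the file names in a folder."""
    return list(FILES[folder])


def move_file(name: str, src: str, dst: str) -> str:
    """Move a file between folders and report what changed."""
    FILES[src].remove(name)
    FILES[dst].append(name)
    return f"moved {name} from {src} to {dst}"


# Registry of the only functions the model is allowed to call
TOOLS: dict[str, Callable[..., Any]] = {"list_files": list_files, "move_file": move_file}


def execute_plan(plan_json: str) -> list[Any]:
    """Parse a JSON list of tool calls and run them in order."""
    results = []
    for step in json.loads(plan_json):
        name = step["tool"]
        if name not in TOOLS:
            raise ValueError(f"Unknown tool: {name}")
        results.append(TOOLS[name](**step.get("arguments", {})))
    return results


# Stand-in for the structured output a model might emit for "archive the report"
plan = json.dumps([
    {"tool": "list_files", "arguments": {"folder": "inbox"}},
    {"tool": "move_file", "arguments": {"name": "report.pdf", "src": "inbox", "dst": "archive"}},
])

print(execute_plan(plan))
print(FILES)
```

Routing every call through an explicit registry means a malformed or unexpected tool name fails loudly instead of executing arbitrary code, which is one common way to keep an action-taking model within known bounds.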

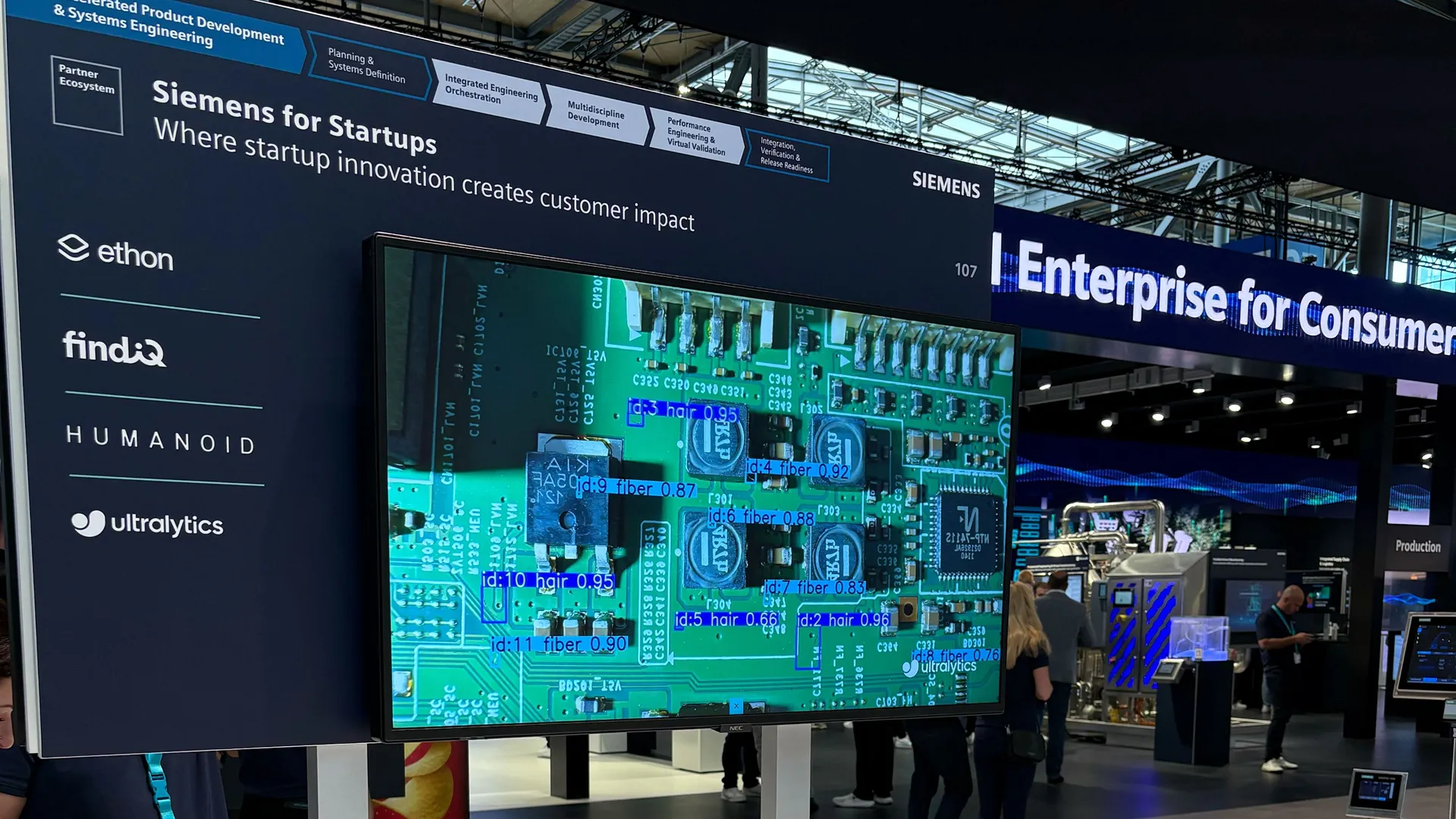

When paired with computer vision systems, LAMs become even more powerful. Visual inputs can be processed by highly efficient models like Ultralytics YOLO26, allowing the LAM to "see" its environment, interpret the visual context, and trigger specific programmatic actions based on what it detects.

Real-World Applications

LAMs are transforming how industries approach task automation, moving from passive assistance to active execution.

- AI in Retail and Customer Support: Instead of merely answering customer questions, an LAM can autonomously process a cancellation or return. If a user asks to cancel an order, the model can navigate the company's billing software, verify the policy, issue the refund, and update the inventory database without human intervention.

- AI in Healthcare Administration: In clinical settings, LAMs coordinate complex workflows. They can extract patient requests, cross-reference doctor availability, automatically update Electronic Health Records (EHR) through internal medical software, and finalize appointment scheduling.
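The retail example above is a sequence of state changes guarded by checks. The sketch below shows one way such a workflow could be structured so that it stops at the first failed check. The `Order` and `Store` classes, the `cancel_order` function, and the 30-day `RETURN_WINDOW_DAYS` policy are hypothetical stand-ins for a company's real billing and inventory systems.

```python
from dataclasses import dataclass, field

RETURN_WINDOW_DAYS = 30  # hypothetical policy value


@dataclass
class Order:
    order_id: str
    sku: str
    price: float
    days_since_purchase: int
    status: str = "paid"


@dataclass
class Store:
    inventory: dict[str, int] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)


def cancel_order(order: Order, store: Store) -> bool:
    """Verify policy, refund, then restock; stop at the first failed check."""
    if order.status != "paid":
        store.log.append(f"{order.order_id}: rejected (status is {order.status})")
        return False
    if order.days_since_purchase > RETURN_WINDOW_DAYS:
        store.log.append(f"{order.order_id}: rejected (outside return window)")
        return False
    # Both checks passed, so apply the state changes in order
    order.status = "refunded"
    store.log.append(f"{order.order_id}: refunded {order.price:.2f}")
    store.inventory[order.sku] = store.inventory.get(order.sku, 0) + 1
    store.log.append(f"{order.order_id}: restocked {order.sku}")
    return True


store = Store(inventory={"mug": 4})
print(cancel_order(Order("A-100", "mug", 12.5, days_since_purchase=3), store))
print(cancel_order(Order("A-101", "mug", 12.5, days_since_purchase=45), store))
print(store.inventory)
print(store.log)
```

Keeping a log of every decision, including rejections, gives a human reviewer an audit trail for actions taken without direct supervision.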

Automating Vision Workflows with Code

LAMs are frequently integrated with vision models to automate visual inspections. The following Python example demonstrates how a hypothetical LAM workflow might leverage ultralytics to scan an image and trigger an automated inventory action based on the object detection results.

from ultralytics import YOLO

# Load the recommended Ultralytics YOLO26 model for an agentic vision task
model = YOLO("yolo26n.pt")

# The LAM commands the model to scan a warehouse shelf image
results = model.predict("inventory_shelf.jpg")

# The LAM extracts actionable data to autonomously trigger a supply reorder
for result in results:
    detected_items = len(result.boxes)
    if detected_items < 10:
        print(f"Low inventory ({detected_items} items). Action triggered: Reordering supplies via API.")
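The inventory example uses a single threshold over all detections. A slightly richer sketch, assuming per-class minimum stock levels, turns detected class labels into structured reorder actions that a downstream system could consume. The `MIN_STOCK` values and the `plan_reorders` helper are hypothetical, and the label list is hard-coded so the block runs without a model or image.

```python
from collections import Counter

# Hypothetical minimum stock levels per detected class
MIN_STOCK = {"bottle": 10, "cup": 5}


def plan_reorders(labels: list[str], min_stock: dict[str, int]) -> list[dict]:
    """Return one reorder action for each class detected fewer times than its minimum."""
    counts = Counter(labels)
    actions = []
    for name, minimum in min_stock.items():
        seen = counts.get(name, 0)
        if seen < minimum:
            actions.append({"action": "reorder", "item": name, "quantity": minimum - seen})
    return actions


# With Ultralytics results, the labels could be built as:
#   labels = [result.names[int(c)] for c in result.boxes.cls]
labels = ["bottle"] * 7 + ["cup"] * 6
print(plan_reorders(labels, MIN_STOCK))
```

Returning structured actions rather than printing a message keeps the detection step separate from whatever API eventually places the order.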

Users can deploy and monitor these types of integrated visual-action workflows seamlessly using the Ultralytics Platform, which provides robust cloud infrastructure for modern AI solutions.

Distinguishing Related Concepts

To fully grasp the modern AI landscape, it is helpful to distinguish LAMs from other closely related terms:

- LAM vs. Large Language Model (LLM): An LLM is strictly designed to process, summarize, and generate language, much like a highly advanced text predictor. An LAM incorporates this language understanding but is specifically engineered to interact with external tools and complete digital actions.

- LAM vs. Agentic AI: "Agentic AI" describes the overarching system or software entity that operates autonomously. The Large Action Model is the underlying neural network (the "brain") that gives the agent its ability to plan and perform those actions.

- LAM vs. Agentic RAG: Agentic RAG focuses on autonomously retrieving and synthesizing external information to improve the accuracy of a generated answer. An LAM focuses on manipulating systems and changing states (like booking a flight or moving files) rather than just retrieving data.