Neural Processing Unit (NPU)

Learn how a Neural Processing Unit (NPU) accelerates AI. Discover how to deploy Ultralytics YOLO26 on NPUs for efficient, low-power edge computing and inference.

A Neural Processing Unit (NPU) is a specialized hardware circuit designed to accelerate artificial intelligence and machine learning workloads. Unlike general-purpose processors, NPUs are built around an architecture that natively handles the highly parallel matrix operations central to deep learning models. By executing these calculations efficiently, an NPU reduces power consumption while lowering inference latency. This makes NPUs an essential component of modern mobile phones, laptops, and specialized IoT devices, where complex models must run without rapidly draining the battery.
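
To give a sense of the scale of those matrix operations, the following minimal sketch counts the multiply-accumulate (MAC) operations in a single convolution layer. The helper name `conv2d_macs` and the layer dimensions are illustrative assumptions, not values taken from any particular YOLO model.

```python
def conv2d_macs(out_h: int, out_w: int, in_ch: int, out_ch: int, kernel: int) -> int:
    """Count multiply-accumulate operations for one standard 2D convolution.

    Each output element needs kernel * kernel * in_ch multiply-accumulates,
    and there are out_h * out_w * out_ch output elements in total.
    """
    macs_per_output = kernel * kernel * in_ch
    num_outputs = out_h * out_w * out_ch
    return macs_per_output * num_outputs


# Hypothetical layer: 3x3 kernel, 64 -> 128 channels, 80x80 output feature map
layer_macs = conv2d_macs(out_h=80, out_w=80, in_ch=64, out_ch=128, kernel=3)
print(f"{layer_macs:,} MACs")  # 471,859,200 MACs
```

The output elements of such a layer can be computed independently of one another, which is why hardware with many parallel MAC units suits this workload better than a processor that works through them a few at a time.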

NPU Versus Other Processors

To understand the value of an NPU, it helps to distinguish it from the other processors commonly used in the AI landscape:

- Central Processing Unit (CPU): The general-purpose "brain" of a computer. While capable of running machine learning code, CPUs are optimized for largely sequential work on a small number of cores, making them slow and inefficient for the heavy matrix multiplication required by modern vision models.

- Graphics Processing Unit (GPU): Designed for parallel processing, GPUs excel at massive deep learning workloads. However, they consume significant power and generate considerable heat, making them better suited to cloud training than to battery-powered edge computing.

- Tensor Processing Unit (TPU): An application-specific integrated circuit (ASIC) developed by Google for machine learning. While similar in concept to an NPU, TPUs are generally associated with large cloud computing server racks, whereas NPUs are typically integrated directly into consumer System-on-Chips (SoCs).

Real-World Applications Of NPUs

The rise of the NPU has unlocked the ability to run artificial intelligence (AI) directly on user devices without relying on constant cloud connectivity.

-

Smartphones And Mobile Vision: Modern mobile devices heavily leverage internal NPUs, such as the Apple Neural Engine or

Qualcomm Hexagon NPU, to power computational photography,

real-time facial recognition, and local text translation. By processing image data on-device, they preserve battery

life and ensure data privacy.

-

AI-Enabled Laptops: Advanced PC processors now feature integrated NPUs to manage background tasks like background blurring and gaze

correction during

video conferencing

without taxing the main CPU, allowing users to multitask smoothly.

-

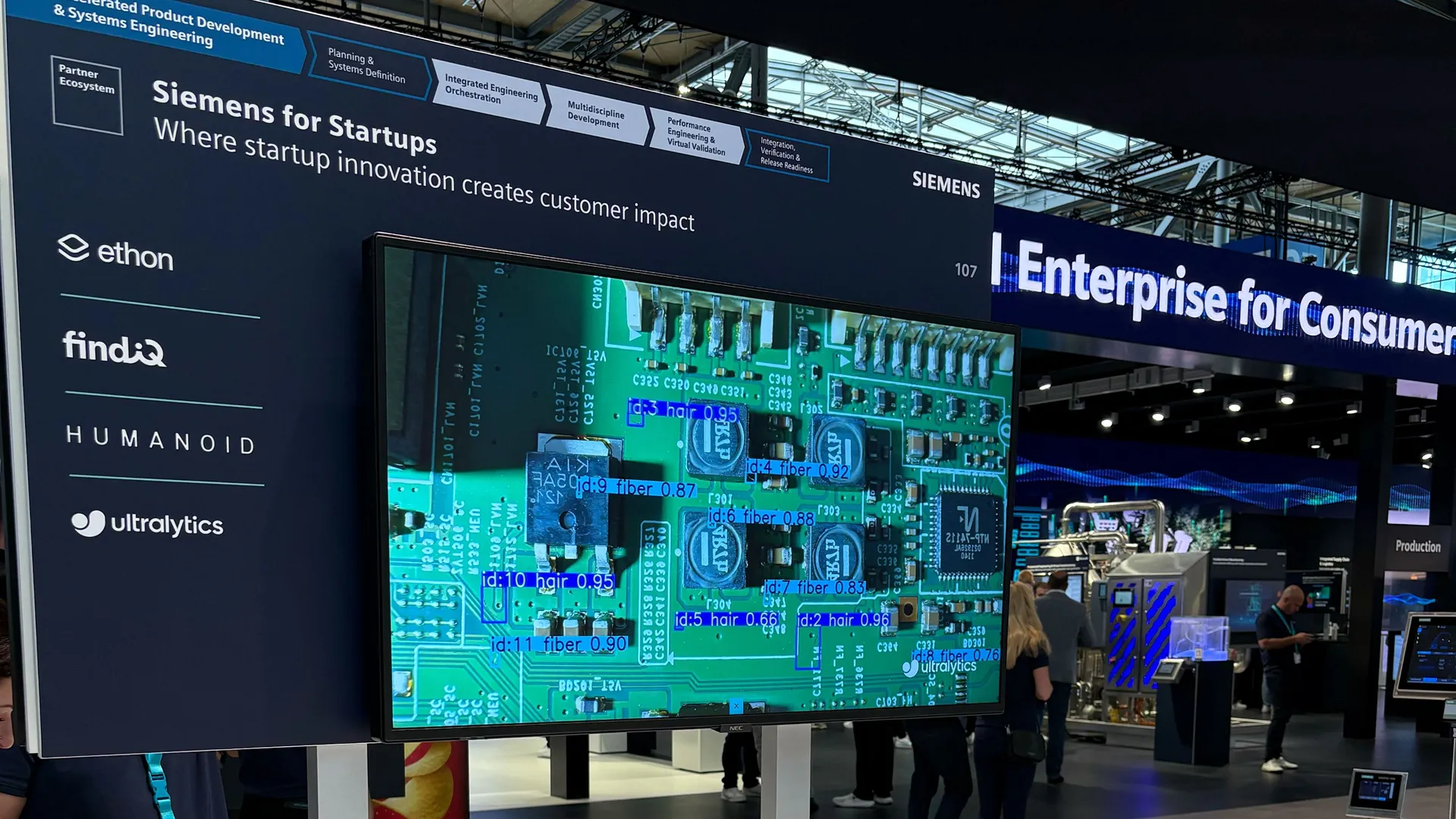

Edge AI Deployments: Smart surveillance cameras and robotics utilize specialized NPUs, such as the Google Coral Edge TPU or embedded

Intel hardware, to perform instantaneous

object detection directly at the source. This

eliminates bandwidth bottlenecks and enables split-second decision-making.

Using NPUs With Ultralytics YOLO

For developers looking to leverage NPUs, deploying computer vision models has become straightforward. With the Ultralytics YOLO26 model, you can export your trained network into formats optimized for various hardware accelerators. To streamline the full lifecycle, the Ultralytics Platform provides tools for cloud dataset management, automated annotation, and deployment of optimized models to a wide range of environments.

When working locally, you can use framework integrations like ONNX Runtime, PyTorch ExecuTorch, or TensorFlow Lite to target the NPU. Below is a quick Python example demonstrating how to export a YOLO model to the OpenVINO format, which can offload inference to Intel NPUs for accelerated real-time inference.

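from ultralytics import YOLO

# Load the Ultralytics YOLO26 Nano model
model = YOLO("yolo26n.pt")

# Export to OpenVINO with INT8 quantization; export() returns the path to the exported model
exported_path = model.export(format="openvino", int8=True)

# Load the exported OpenVINO model rather than reusing the original PyTorch weights
ov_model = YOLO(exported_path)

# Run inference on the edge device (device="intel:npu" assumes an Intel NPU with OpenVINO drivers installed)
results = ov_model("path/to/environment_image.jpg", device="intel:npu")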
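
As a rough illustration of what INT8 quantization means, the sketch below maps floating-point weights to 8-bit integers using a single symmetric scale. This is a simplified teaching example, not how the OpenVINO export works internally: production toolchains typically rely on calibration data and more elaborate schemes. The function names and the sample weights are hypothetical.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization of floats to the int8 range [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    # The scale maps the largest magnitude onto 127; fall back to 1.0 for all-zero input
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: list[int], scale: float) -> list[float]:
    """Map int8 values back to approximate floats."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.02, 1.0]  # hypothetical float32 weights
q_weights, scale = quantize_int8(weights)
restored = dequantize_int8(q_weights, scale)

print(q_weights)  # [50, -127, 2, 100]
```

Storing and multiplying 8-bit integers instead of 32-bit floats cuts memory traffic to roughly a quarter, at the cost of a small rounding error bounded by half the scale per value. That trade-off is the reason low-precision formats are a common target for NPU deployment.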