Deploy Ultralytics YOLO on Intel for high-performance inference

Ultralytics partners with Intel to deliver high-performance YOLO inference across Intel CPUs, NPUs, and GPUs.

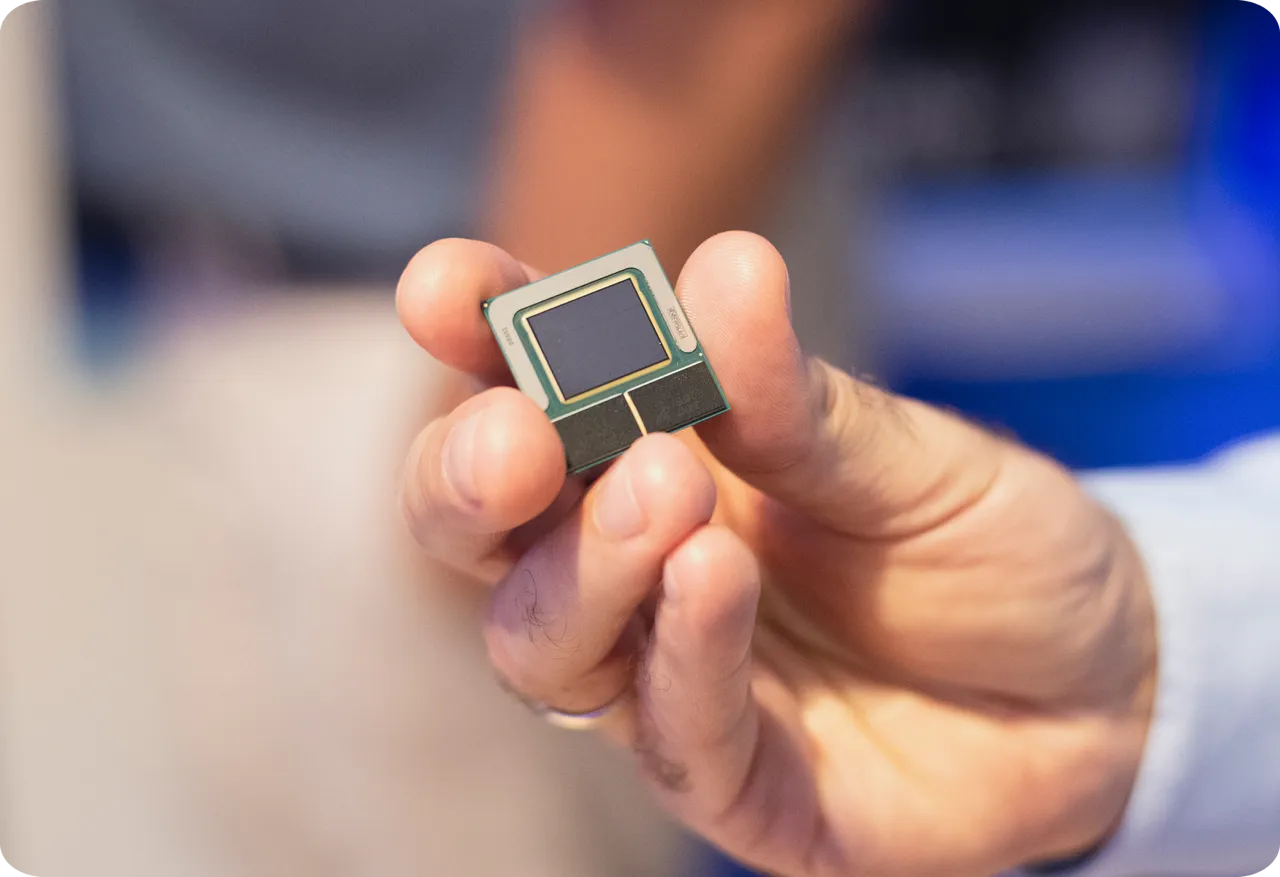

About Intel

Intel (Nasdaq: INTC) is an industry leader creating world-changing technology that enables global progress and enriches lives. Intel continuously advances semiconductor design and manufacturing to help address its customers' greatest challenges, embedding intelligence in the cloud, network, edge, and every computing device to transform business and society.

OpenVINO™ is an open-source toolkit that accelerates AI inference with lower latency and higher throughput, while maintaining accuracy and optimizing hardware use. It streamlines AI development and deep learning integration across computer vision, large language models, and generative AI.

Why choose Intel for YOLO?

Optimized for Ultralytics YOLO

Maximum throughput, minimal latency across Intel's full device lineup.

Edge-native performance

Edge-ready YOLO inference with FP32, FP16, and INT8 support. No accuracy trade-offs required.
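
As a rough sketch of how these precisions might be chosen at export time, the helper below maps a precision name onto arguments for the Ultralytics `export()` call. The `half`, `int8`, and `data` arguments are Ultralytics export options; the helper names `openvino_export_args` and `export_openvino`, the `yolo11n.pt` weights, and the `coco8.yaml` calibration dataset are assumptions made for illustration.

```python
def openvino_export_args(precision: str = "fp32") -> dict:
    """Build keyword arguments for YOLO.export() for one precision (hypothetical helper)."""
    args = {"format": "openvino"}
    if precision == "fp32":
        return args
    if precision == "fp16":
        args["half"] = True  # export with half-precision weights
        return args
    if precision == "int8":
        args["int8"] = True  # post-training quantization
        args["data"] = "coco8.yaml"  # assumed calibration dataset, swap in your own
        return args
    raise ValueError(f"unsupported precision: {precision!r}")


def export_openvino(weights: str = "yolo11n.pt", precision: str = "fp32") -> str:
    """Export a YOLO model to OpenVINO and return the path of the exported model."""
    # Imported lazily so the helper above works without the package installed.
    from ultralytics import YOLO  # requires `pip install ultralytics`

    model = YOLO(weights)
    return model.export(**openvino_export_args(precision))
```

Calling `export_openvino("yolo11n.pt", "int8")` would then produce a quantized OpenVINO model calibrated on the dataset named in `data`.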

Real-time inference

Sub-10ms inference across all major YOLO tasks, verified on Intel CPUs, GPUs, and NPUs.
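
To check latency on your own hardware, one possible approach is to average the per-image inference time that Ultralytics reports in `results[0].speed`. This is a minimal sketch, not the benchmark behind the figure above: the function names, the model path `yolo11n_openvino_model/`, the sample image URL, and the run counts are all assumptions.

```python
from statistics import mean


def mean_inference_ms(speeds: list[dict]) -> float:
    """Average the 'inference' entry (in milliseconds) over per-image speed dicts."""
    return mean(s["inference"] for s in speeds)


def measure_latency(
    model_path: str = "yolo11n_openvino_model/",  # assumed path to an exported model
    source: str = "https://ultralytics.com/images/bus.jpg",
    runs: int = 20,
    warmup: int = 3,
) -> float:
    """Run repeated predictions and return the mean inference time in ms."""
    from ultralytics import YOLO  # requires `pip install ultralytics`

    model = YOLO(model_path)
    for _ in range(warmup):
        model(source, verbose=False)  # warm-up runs are not timed

    speeds = []
    for _ in range(runs):
        results = model(source, verbose=False)
        speeds.append(results[0].speed)  # dict with preprocess/inference/postprocess
    return mean_inference_ms(speeds)
```

The result covers only the inference stage; preprocessing and postprocessing times are reported separately in the same dictionary.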

Lower cost of ownership

Inference on existing Intel silicon. Lower costs, without compromising accuracy.

Easy integration

Up and running in minutes with the Ultralytics Python package or CLI. Same API, same workflow.
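
A minimal sketch of that workflow with the Python package might look like the following. It assumes the `yolo11n.pt` weights and a sample image URL; the function name `export_and_predict` is hypothetical. The exported model is loaded and called through the same `YOLO` class as the original weights.

```python
def export_and_predict(
    weights: str = "yolo11n.pt",
    source: str = "https://ultralytics.com/images/bus.jpg",
):
    """Export a YOLO model to OpenVINO, reload it, and run one prediction."""
    from ultralytics import YOLO  # requires `pip install ultralytics`

    model = YOLO(weights)  # load the PyTorch weights
    exported_path = model.export(format="openvino")  # returns the exported model path
    ov_model = YOLO(exported_path)  # same class, now backed by OpenVINO
    return ov_model(source)  # list of Results objects
```

The CLI offers an equivalent export step, for example `yolo export model=yolo11n.pt format=openvino`.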

Future-proof

Always up to date with the latest YOLO models and Intel hardware. No pipeline rework required.

The complete solution

Technical integration

Seamless integration between Ultralytics models and Intel hardware

Ultralytics YOLOv8

YOLOv8 improved performance and added support for more tasks: segmentation, classification, and pose estimation.

Ultralytics YOLO11

YOLO11 supports the computer vision tasks users are familiar with while offering better accuracy, precision, and speed.

Ultralytics YOLO26

YOLO26 is a lighter, smaller, and faster model, with the nano variant delivering up to 43% faster inference on standard CPUs.

Get started with Intel

Documentation page

Complete guide to deploy Ultralytics YOLO on OpenVINO and Intel hardware.

Running YOLO on Intel AI PCs

Optimize Ultralytics YOLO with OpenVINO for fast, real-time inference on Intel AI PCs.
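
On an AI PC, the target device can be chosen at prediction time. The sketch below assumes an already exported model at `yolo11n_openvino_model/` and uses the `intel:cpu`, `intel:gpu`, and `intel:npu` device strings from the Ultralytics OpenVINO integration; the helper names `intel_device` and `predict_on` are hypothetical.

```python
INTEL_DEVICES = ("cpu", "gpu", "npu")


def intel_device(kind: str) -> str:
    """Turn 'cpu', 'gpu', or 'npu' into a device string such as 'intel:gpu'."""
    kind = kind.lower()
    if kind not in INTEL_DEVICES:
        raise ValueError(f"expected one of {INTEL_DEVICES}, got {kind!r}")
    return f"intel:{kind}"


def predict_on(
    kind: str,
    model_path: str = "yolo11n_openvino_model/",  # assumed path to an exported model
    source: str = "https://ultralytics.com/images/bus.jpg",
):
    """Run one prediction on the chosen Intel device."""
    from ultralytics import YOLO  # requires `pip install ultralytics`

    ov_model = YOLO(model_path)
    return ov_model.predict(source, device=intel_device(kind))
```

For example, `predict_on("npu")` would request the NPU, provided the machine has one and the OpenVINO runtime can see it.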

YOLO Vision 2025 London

Watch Intel take the stage to showcase the Ultralytics YOLO and OpenVINO integration.

Become an Ultralytics partner

Join our partner ecosystem and unlock new opportunities to deliver cutting-edge AI solutions.