Data Provenance

Learn how data provenance ensures AI transparency and reproducibility. Explore tracking data lineage for computer vision datasets with Ultralytics YOLO26.

Data provenance refers to the comprehensive historical record of the origins, metadata, and transformations of data as it moves through a machine learning pipeline. In the context of artificial intelligence and computer vision, it provides a detailed lineage of how a computer vision dataset was collected, processed, and modified before being fed into a neural network. Understanding where data comes from is essential for ensuring AI safety, enabling strict reproducibility, and maintaining compliance with emerging frameworks like the European Union AI Act.
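
To make the idea of "origins, metadata, and transformations" concrete, the following is a minimal sketch of what a per-file provenance record could look like. The `ProvenanceRecord` class, its field names, and the sample values are hypothetical illustrations, not part of any Ultralytics API.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ProvenanceRecord:
    """Hypothetical record describing one file in a dataset."""

    path: str          # location of the file inside the dataset
    sha256: str        # content hash, so later changes can be detected
    source: str        # origin, e.g. a camera, clinic, or public dataset
    collected_at: str  # ISO 8601 timestamp of collection
    transformations: list = field(default_factory=list)  # ordered processing steps

    def add_step(self, name: str, **params) -> None:
        """Append a processing step with its parameters and a UTC timestamp."""
        self.transformations.append(
            {
                "step": name,
                "params": params,
                "applied_at": datetime.now(timezone.utc).isoformat(),
            }
        )


# Placeholder bytes stand in for real image content in this sketch
record = ProvenanceRecord(
    path="images/train/0001.jpg",
    sha256=hashlib.sha256(b"placeholder image bytes").hexdigest(),
    source="camera_A",
    collected_at="2024-01-15T09:30:00+00:00",
)
record.add_step("resize", width=640, height=640)
record.add_step("horizontal_flip", probability=0.5)

# Serialize the record so it can be stored next to the dataset
print(json.dumps(asdict(record), indent=2))
```

Storing the content hash alongside the ordered list of steps is one simple design choice: the hash identifies what the data was, and the step list documents what was done to it.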

Why Tracking Data Lineage Matters

Maintaining a clear record of data evolution helps engineering teams build robust and trustworthy models. When training an advanced architecture like Ultralytics YOLO26, knowing exactly which data augmentation techniques were applied and how data preprocessing steps altered the original images is crucial for debugging. If a model's accuracy drops unexpectedly, an engineer can trace back through the data lineage to identify corrupted files, missing annotations, or an unrepresentative training data split.
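
As a rough illustration of tracing back through lineage, the sketch below compares files on disk against a previously recorded manifest of content hashes and reports changed images and missing annotations. The `audit_dataset` helper, the manifest layout, and the one-`.txt`-label-per-image convention are assumptions made for this example.

```python
import hashlib
import tempfile
from pathlib import Path


def file_sha256(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def audit_dataset(manifest: dict, image_dir: Path, label_dir: Path) -> dict:
    """Compare files on disk against a previously recorded manifest."""
    report = {"missing_images": [], "changed_images": [], "missing_labels": []}
    for name, expected_hash in manifest.items():
        image_path = image_dir / name
        if not image_path.exists():
            report["missing_images"].append(name)
            continue
        if file_sha256(image_path) != expected_hash:
            report["changed_images"].append(name)
        # Assumed convention: one .txt label file per image, sharing its stem
        if not (label_dir / name).with_suffix(".txt").exists():
            report["missing_labels"].append(name)
    return report


# Small demonstration using a temporary directory
with tempfile.TemporaryDirectory() as tmp:
    images = Path(tmp, "images")
    labels = Path(tmp, "labels")
    images.mkdir()
    labels.mkdir()
    (images / "a.jpg").write_bytes(b"original a")
    (images / "b.jpg").write_bytes(b"original b")
    (labels / "a.txt").write_text("0 0.5 0.5 0.2 0.2\n")

    # Record the manifest while the data is in a known-good state
    manifest = {p.name: file_sha256(p) for p in images.iterdir()}
    manifest["c.jpg"] = "0" * 64  # listed in the manifest but never written

    (images / "b.jpg").write_bytes(b"corrupted")  # simulate silent corruption
    report = audit_dataset(manifest, images, labels)

print(report)
```

In practice the manifest would be written once when a dataset version is frozen, then checked again whenever a training run behaves unexpectedly.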

This concept is closely related to, but distinct from, data labeling. While labeling focuses on the actual tags or bounding boxes applied to an image, data provenance tracks the "who, what, when, and where" of the entire dataset's lifecycle. This holistic tracking helps mitigate systemic dataset bias by exposing unbalanced sourcing.
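
One way unbalanced sourcing might be surfaced is by counting how many samples each source contributes. The sketch below assumes each provenance record carries a `source` field; the record layout, the helper names, and the 50% threshold are illustrative choices rather than an established standard.

```python
from collections import Counter


def source_shares(records: list) -> dict:
    """Return the fraction of samples contributed by each source."""
    counts = Counter(r["source"] for r in records)
    total = sum(counts.values())
    return {source: n / total for source, n in counts.items()}


def flag_dominant(shares: dict, threshold: float = 0.5) -> list:
    """List sources whose share of the dataset exceeds the threshold."""
    return sorted(s for s, share in shares.items() if share > threshold)


# Hypothetical provenance records for six images
records = [
    {"path": "img_001.jpg", "source": "site_A"},
    {"path": "img_002.jpg", "source": "site_A"},
    {"path": "img_003.jpg", "source": "site_A"},
    {"path": "img_004.jpg", "source": "site_A"},
    {"path": "img_005.jpg", "source": "site_B"},
    {"path": "img_006.jpg", "source": "site_C"},
]

shares = source_shares(records)
dominant = flag_dominant(shares)
print(shares)
print(dominant)
```

A flagged source is only a prompt for review: whether a given imbalance actually harms the model depends on the task and the deployment setting.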

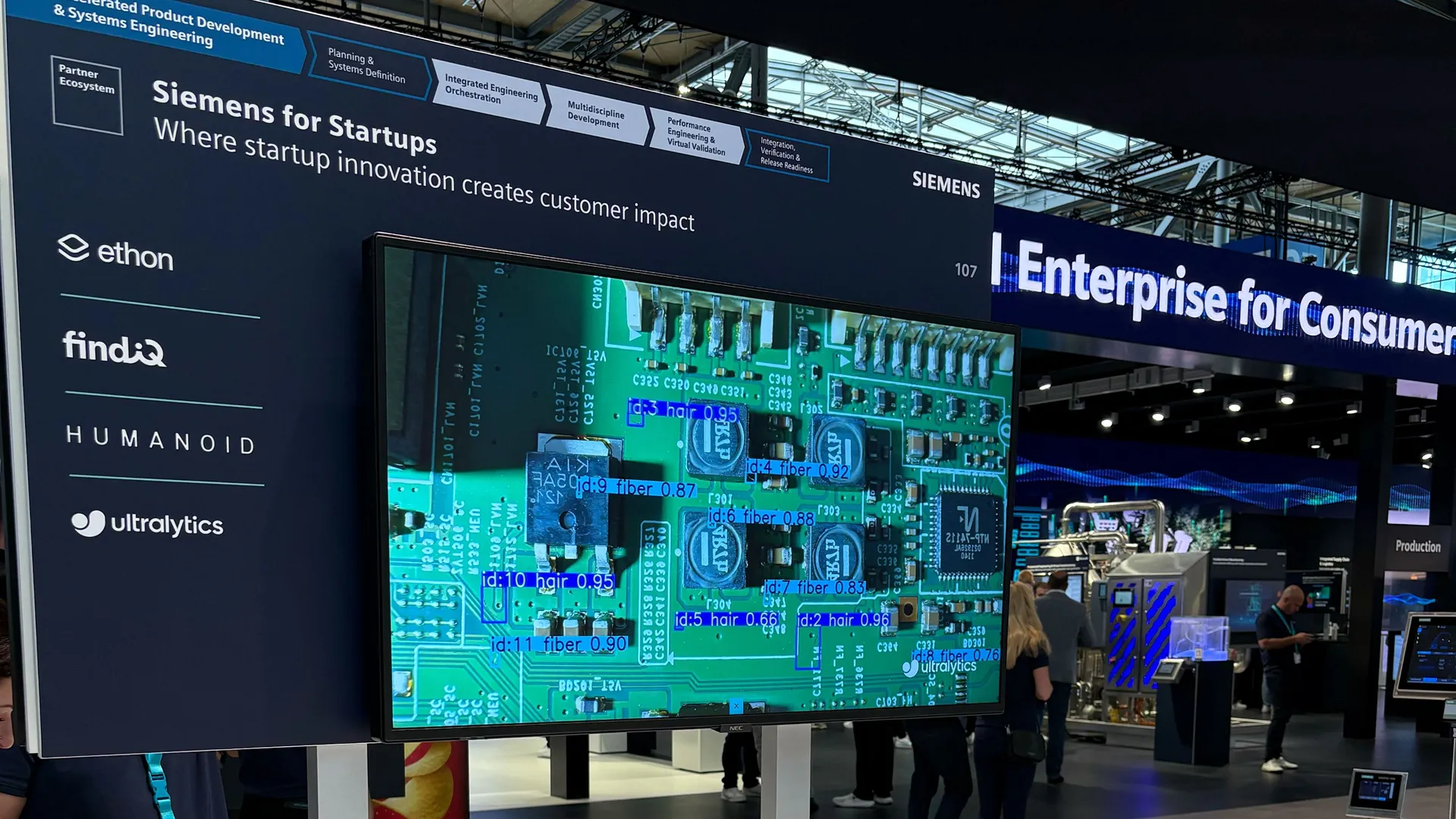

Real-World Applications

Robust data tracking is widely implemented across industries to maintain transparency in AI:

- Medical Image Analysis: In healthcare, organizations need to be able to trace every X-ray or MRI scan back to its source clinic as part of complying with strict data privacy laws like HIPAA. Provenance records help confirm that models detecting tumors with object detection are trained only on ethically sourced, properly verified medical records.

- Autonomous Vehicles: Self-driving car companies continuously update their models with edge cases, such as snowy roads or construction zones. Using comprehensive data lineage frameworks, they track exactly which fleet vehicle captured an image and under what weather conditions. This allows for targeted fine-tuning while helping to avoid catastrophic forgetting.
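
The kind of metadata lookup described in these examples can be sketched as a simple filter over capture records. The field names (`vehicle_id`, `weather`, `scene`) and the `select_records` helper are hypothetical and stand in for whatever schema a real lineage system uses.

```python
def select_records(records: list, **criteria) -> list:
    """Return records whose metadata matches every given key/value pair."""
    return [r for r in records if all(r.get(k) == v for k, v in criteria.items())]


# Hypothetical capture metadata for four frames
captures = [
    {"image": "frame_0001.jpg", "vehicle_id": "car_07", "weather": "snow", "scene": "highway"},
    {"image": "frame_0002.jpg", "vehicle_id": "car_07", "weather": "clear", "scene": "urban"},
    {"image": "frame_0003.jpg", "vehicle_id": "car_12", "weather": "snow", "scene": "construction"},
    {"image": "frame_0004.jpg", "vehicle_id": "car_03", "weather": "rain", "scene": "urban"},
]

# Collect the snowy frames as candidates for a targeted fine-tuning set
snow_images = [r["image"] for r in select_records(captures, weather="snow")]
print(snow_images)
```

Because the filter works on metadata only, the same records can answer other lineage questions, such as which frames a particular vehicle contributed.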

Implementing Provenance Workflows

Modern workflows often use centralized workspaces like Ultralytics Platform to enable smart dataset management. This supports proper version control over annotations, making it easy to compare different iterations of a dataset. Leading frameworks like PyTorch and TensorFlow also encourage structured data loading practices that preserve valuable metadata.
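
Comparing two iterations of a dataset can be illustrated with a small manifest diff. This is a generic sketch, not how any particular platform implements versioning; it assumes each dataset version is summarized as a mapping from annotation file name to a content hash, and the short hash strings are placeholders.

```python
def diff_manifests(old: dict, new: dict) -> dict:
    """Report files added, removed, or modified between two dataset versions."""
    old_keys, new_keys = set(old), set(new)
    return {
        "added": sorted(new_keys - old_keys),
        "removed": sorted(old_keys - new_keys),
        "modified": sorted(k for k in old_keys & new_keys if old[k] != new[k]),
    }


# Hypothetical manifests: annotation file -> content hash (shortened here)
version_1 = {"0001.txt": "a1f3", "0002.txt": "b2e4", "0003.txt": "c3d5"}
version_2 = {"0001.txt": "a1f3", "0002.txt": "ffff", "0004.txt": "d4c6"}

changes = diff_manifests(version_1, version_2)
print(changes)
```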

When training a model, saving the dataset structure acts as a foundational form of provenance. In the ultralytics package, you can define your dataset paths and classes in a YAML configuration file. The training arguments, including the reference to that dataset file, are automatically saved to the training directory (as args.yaml) to preserve the experiment's configuration history.

```python
from ultralytics import YOLO

# Load a pre-trained YOLO26 model
model = YOLO("yolo26n.pt")

# Train the model; the training arguments, including the coco8.yaml dataset
# reference, are saved in the run directory (args.yaml) for provenance
results = model.train(data="coco8.yaml", epochs=10, project="Run_History", name="experiment_1")
```
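
Teams that want a stronger link between a run and the exact dataset definition it used could additionally store a hash of the dataset YAML next to the run outputs. The sketch below uses only the Python standard library; `write_provenance_sidecar`, the `provenance.json` file name, and the sample YAML content are assumptions for illustration and are not features of the ultralytics package.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def write_provenance_sidecar(dataset_yaml: Path, run_dir: Path) -> Path:
    """Write a small JSON file recording which dataset config a run used."""
    run_dir.mkdir(parents=True, exist_ok=True)
    sidecar = run_dir / "provenance.json"  # hypothetical file name
    payload = {
        "dataset_config": dataset_yaml.name,
        "dataset_config_sha256": hashlib.sha256(dataset_yaml.read_bytes()).hexdigest(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    sidecar.write_text(json.dumps(payload, indent=2))
    return sidecar


# Demonstration with a throwaway dataset config and run directory
with tempfile.TemporaryDirectory() as tmp:
    config = Path(tmp, "my_dataset.yaml")
    config.write_text(
        "path: datasets/my_dataset\n"
        "train: images/train\n"
        "val: images/val\n"
        "names:\n"
        "  0: person\n"
    )
    sidecar = write_provenance_sidecar(config, Path(tmp, "Run_History", "experiment_1"))
    saved = json.loads(sidecar.read_text())

print(saved["dataset_config"], saved["dataset_config_sha256"][:12])
```

If the dataset YAML is later edited, its hash no longer matches the one stored with the run, which makes the mismatch easy to detect.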

By maintaining strong tracking practices, organizations can foster AI ethics and ensure their machine learning systems are transparent, reliable, and trustworthy from the ground up.