Learn why computer vision models fail in production, from data mismatch to latency, and how teams can improve model performance in real-world vision AI systems.

Learn why computer vision models fail in production, from data mismatch to latency, and how teams can improve model performance in real-world vision AI systems.

Computer vision is now a key artificial intelligence technology being adopted across most industries, enabling machines to interpret and analyze visual data for a range of tasks. These systems support many real-world applications, from medical imaging and robotics to manufacturing and retail automation.

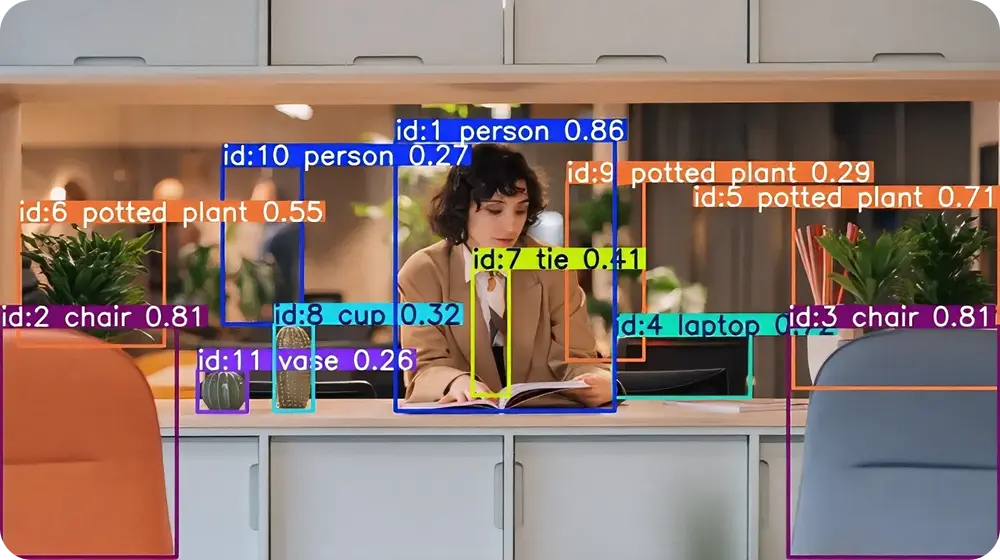

However, building a computer vision system isn’t always straightforward. It usually involves developing a vision AI model that is trained to identify patterns in images and videos to support tasks such as object detection and tracking.

Despite becoming more advanced over the years, computer vision models can still behave differently during development than after deployment in real-world environments. This is because deploying models outside controlled development settings introduces new and often unexpected challenges.

Factors such as a lack of diversity in datasets, poor model monitoring, and infrastructure constraints can cause the same model to behave differently in the real world after deployment.

In this article, we’ll explore five common reasons computer vision models might fail to perform in production. Let’s get started!

Model training typically happens in a controlled environment. During this stage, AI developers work with carefully prepared training datasets.

These vast collections of visual data include well-structured annotations, or labels that describe the contents of each image. Training also occurs under consistent conditions, making it possible for vision AI models to learn visual patterns effectively.

To ensure these patterns are learned correctly, models can be systematically evaluated during development using standard evaluation metrics and benchmark datasets. Similar to training datasets, these benchmark datasets are also carefully prepared.

However, the data encountered by real-world computer vision systems can be very different from the data used during training and evaluation. Once deployed, these models rarely operate under controlled conditions.

They can end up processing images and videos from unpredictable environments where lighting changes constantly, camera angles shift, and backgrounds vary over time. For example, a vision AI model trained for traffic detection may struggle to detect vehicles at night if it was trained and evaluated primarily on daytime images.

This difference between development and real-world deployment is the training–production gap. Because of this gap, many model failures only become visible after deployment, making early awareness essential for building more reliable and robust computer vision systems.

Next, let’s take a closer look at five common reasons computer vision models fail in production.

Datasets play a central role in training computer vision models because they determine what the model learns during training and how it responds to real-world inputs after deployment. This is particularly important in supervised learning, where models learn from labeled examples that show what each image represents.

Many deep learning models, including convolutional neural networks (CNNs), rely on these labeled examples to recognize patterns in visual data. However, when the training dataset doesn’t reflect real-world conditions, the model can learn patterns that don’t fully represent how objects appear outside the training data.

For instance, a model trained on a dataset of large crack defects might not detect a rare type of minor crack in real-world manufacturing workflows. Similarly, annotation quality can also affect model behavior. Inconsistent labels or missing details in labeled data can cause the model to learn incorrect information during training.

Overall, the quality and diversity of training data are critical and can determine how well a model performs in real-world applications. When datasets are representative and accurately labeled, a model will generally perform more reliably once deployed.

Machine learning models like vision models learn patterns from training datasets. But sometimes a model can rely too heavily on a few patterns.

Instead of learning broader visual relationships, it can end up memorizing the limited patterns from the training data. This behavior is known as overfitting.

Overfitting usually happens when training datasets are small or lack sufficient data diversity. In such cases, the model becomes good at recognizing images it has already seen but has difficulty interpreting new data or unfamiliar inputs.

Due to this, a model might perform well on test inputs (since they are similar to the training data) but may behave differently under new conditions after deployment. That’s why the concept of generalization is vital. Simply put, it is how well models can apply what they learned during training to new scenarios.

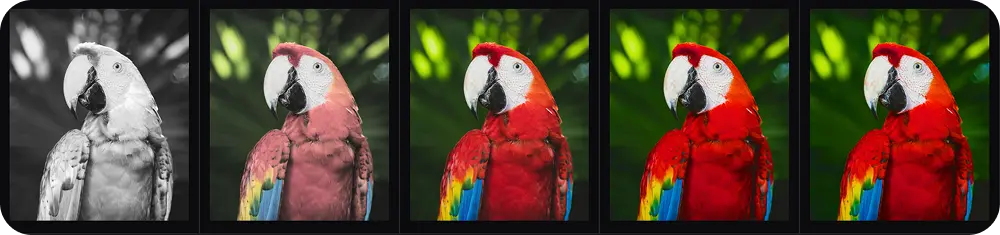

To reduce overfitting, AI enthusiasts often train models on more diverse datasets and apply data augmentation, a method that slightly modifies training images to create more variation in the data. Without these considerations, model performance can drop quickly once the system begins operating in real-world environments.

Even when computer vision models generalize well to new data, real-world environments can still introduce unexpected edge cases. These are unusual situations that differ from the typical patterns the model learns during training.

Many of these scenarios are difficult to capture during development because they occur rarely, are hard to recreate, or can be expensive to collect as training data. For example, objects may appear in unusual shapes, move unpredictably, or become partially hidden behind other objects.

Changes in lighting, camera angles, or background conditions can also create situations that make recognition more challenging. These edge cases often become noticeable only after the system is deployed in real-world applications.

In robotics and manufacturing automation, for instance, items may be placed or positioned differently than expected, creating situations that the model wasn’t designed to handle. Ultimately, predictions that seemed reliable during testing may become less consistent once the system operates in real-world environments.

In addition to developing a vision AI model, it is essential to monitor and improve its performance. However, once a system is running, the focus often shifts to simply keeping it operational rather than closely tracking how it performs over time. As a result, changes in model behavior can go unnoticed.

At the same time, factors such as changes in incoming data, camera setups, or operating environments can gradually affect how accurately the model detects or classifies objects. These changes aren’t always obvious and can remain unnoticed during daily operation.

Monitoring model outputs and overall system behavior can help teams identify these issues earlier. Regular checks, validation routines, and debugging workflows allow teams to investigate unusual results and understand what might be causing them.

Consider areas like manufacturing, a model might suddenly misidentify objects on an assembly line after a change in camera configuration. Keeping track of how a deployed vision AI system behaves makes it simpler to respond to these changes and maintain stable performance in real-world environments.

Many computer vision systems need to run in real time, which can put significant pressure on hardware, networks, and processing pipelines. When resources are limited, computation delays or network latency can occur, causing predictions to arrive too slowly and affecting overall system performance.

In some cases, advanced deep learning models can also create infrastructure challenges. For example, transformer-based architectures are designed to process large amounts of visual data and learn complex relationships within images, but they often require substantial computational resources. Running these models may require more powerful or expensive hardware.

Without proper optimization, even models that run quickly during testing can slow down or behave inconsistently after deployment. To address this, teams often optimize pipelines, reduce model complexity where possible, and balance accuracy with speed.

This can involve compressing large models into lighter versions, using more efficient architectures, or processing images at lower resolutions so the system runs smoothly on available hardware. In many cases, teams also choose lightweight and faster models like Ultralytics YOLO26 to help meet deployment constraints.

Here are some best practices that can help reduce failures when deploying computer vision models in production:

Computer vision models rarely fail because the algorithms themselves are weak. In most cases, the real challenge comes from the environments in which these systems operate. Models that perform well during training often encounter unpredictable real-world conditions that can affect their behavior.

That’s why building reliable vision AI systems requires more than simply training a model. It also involves carefully preparing datasets, monitoring model performance after deployment, and continuously adapting systems to real-world conditions.

Want to explore vision AI further? Join our community and read about applications like AI in automotive and computer vision in logistics. Check out our licensing options to get started with computer vision projects. Visit our GitHub repository to learn more.

Begin your journey with the future of machine learning