Ultralytics YOLO26 vs. other Ultralytics YOLO models for pose estimation

Discover how Ultralytics YOLO26 improves pose estimation with better non-human keypoint support, faster convergence, improved occlusion handling, and efficient real-time deployment.

When you look at someone’s posture, it’s easy to notice if they are slouching, leaning forward, or standing straight. Humans can quickly understand how different parts of the body relate to each other.

It’s an inherent part of how we interpret movement and body language in everyday life. For machines, however, this kind of visual understanding isn't automatic. Teaching a system to recognize movement and structure requires advanced deep learning and computer vision techniques that allow it to interpret images in a meaningful way.

In particular, pose estimation is a vision AI technique that makes it possible for a computer vision model to build a similar understanding. Instead of simply detecting an object in an image, the model predicts keypoints that represent important structural landmarks.

These keypoints could correspond to body joints, animal limbs, machinery components, or even fixed points such as court corners. By identifying and tracking these points, the system can understand position, alignment, and movement in a structured and measurable way.

As pose estimation is applied to more real-world scenarios, models have to handle non-human keypoints, complex scenes, and custom datasets more effectively. For instance, state-of-the-art models like Ultralytics YOLO26 support computer vision tasks like pose estimation and build on earlier YOLO pose models with architectural and training improvements designed to enhance flexibility and overall performance.

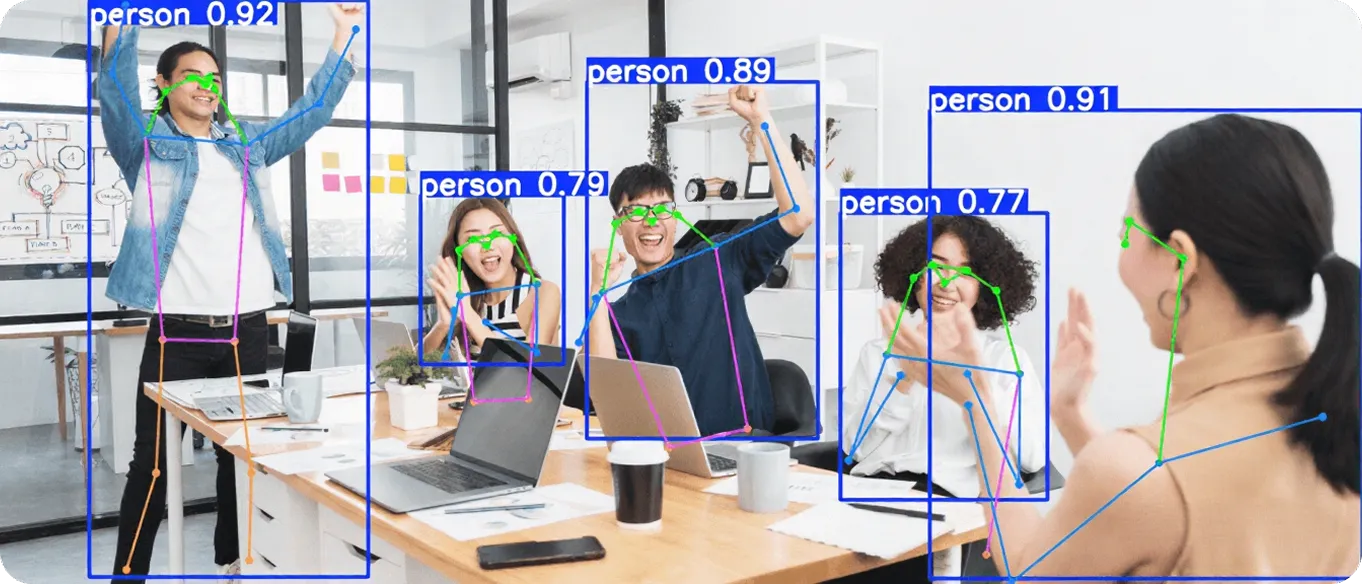

Fig 1. An example of pose estimation enabled by YOLO (Source)

In this article, we’ll compare YOLO26-pose to previous Ultralytics YOLO pose models and explore how it improves flexibility, convergence speed, and performance in complex scenes. Let's get started!

Link to this sectionWhat is pose estimation?#

Before we dive into comparing Ultralytics YOLO pose models, let’s take a closer look at what pose estimation actually means in the context of computer vision.

Pose estimation is a technique used to detect and track specific keypoints in an image or video frame. These keypoints can represent important structural landmarks, such as joints on a human body, limbs of an animal, components of a machine, or fixed reference points in a scene.

Fig 2. Estimating the pose of workers using human pose estimation (Source)

By identifying the coordinates of these points, a model can understand how an object is positioned and how it moves over time. Unlike image classification, which assigns a single label to an entire image, or object detection models, which focus on drawing bounding boxes around objects, pose estimation provides more detailed spatial information about structure and movement.

Link to this sectionAn overview of YOLO26-pose#

YOLO26-pose is available in multiple model variants or model sizes, including lightweight options like YOLO26n-pose and larger models such as YOLO26m-pose, YOLO26l-pose, and YOLO26x-pose. This lets teams choose the right balance between speed and accuracy depending on their hardware and performance needs.

Ultralytics also provides pretrained pose models trained on large, general datasets such as the COCO dataset, specifically the COCO-Pose (COCO keypoints) annotations for human pose estimation, so you don’t have to start from scratch. In most cases, teams fine-tune these models on their own dataset to adapt them to specific keypoints, layouts, or environments.

This typically involves preparing custom annotation files that define keypoint coordinates and class labels in a structured format. These annotations map keypoints to specific pixel coordinates within each image, allowing the model to learn precise spatial relationships during training.

Using pretrained models makes training faster, reduces data requirements, and helps move projects into production more efficiently.

Link to this sectionReal-world applications of human pose estimation#

Here’s a glimpse of some real-world use cases where pose estimation plays an important role:

- Healthcare and rehabilitation: Clinicians can use pose models to assess posture, monitor recovery progress, and analyze movement patterns during physical therapy.

- Autonomous systems: Drones and smart cameras can use pose information to better understand object orientation and movement in dynamic scenes.

- Workplace safety: Organizations can monitor body positioning and repetitive movements to help identify potential safety risks.

- Fitness and personal training: Fitness apps use pose estimation to track exercise form, count repetitions, and provide real-time feedback on posture and movement maintained during fitness tutorials.

Fig 3. Pose estimation can help track key body points during athletic movement. (Source)

Link to this sectionExploring Ultralytics YOLO26’s support for pose estimation#

Ultralytics YOLO26 builds on earlier Ultralytics YOLO models with updates designed to make training and deployment more practical.

Like previous versions, it supports pose estimation as part of a unified framework. The main difference is that YOLO26 is built to be more flexible and stable across a wider range of real-world use cases.

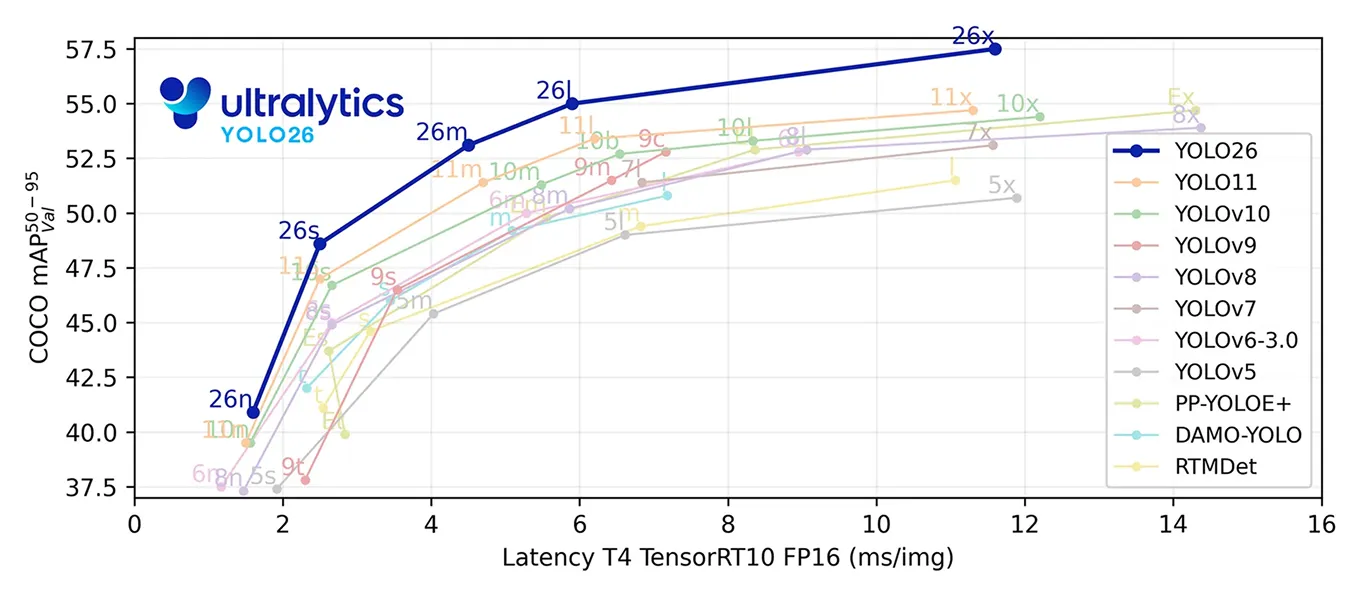

Fig 4. Benchmarking YOLO26 (Source)

Earlier Ultralytics YOLO pose models were largely influenced by human pose datasets, which meant parts of the older methods were optimized around human joint structures. YOLO26 removes those human-specific assumptions.

As a result, it is better suited for non-human keypoints, such as detecting the corners of a tennis court or other custom structural landmarks. This is significant because out-of-the-box, pretrained YOLO26-pose models are trained on datasets such as COCO-pose and predict human keypoints defined in the dataset annotations.

However, when teams want to detect different types of landmarks, such as machinery components, sports field markers, or infrastructure points, the model typically needs to be fine-tuned on a custom dataset where those specific keypoints are annotated.

Since YOLO26 isn’t tied to assumptions about human joint structures, it can adapt more effectively during fine-tuning. This flexibility allows the model to learn custom keypoint layouts more reliably, which leads to improved evaluation metrics when validating on datasets with unique keypoint configurations.

YOLO26-pose is also designed to improve keypoint localization when parts of an object are partially hidden or appear at a very small scale. In real-world scenes involving distant subjects, drone footage, or small-object scenarios, this can lead to more accurate keypoint predictions compared to earlier pose models.

Another important update is the improved loss formulation used during training. The loss function determines how the model corrects its mistakes while learning.

When it comes to YOLO26-pose, this process is more effective, which helps the model learn faster and reach strong accuracy in fewer epochs, where an epoch refers to one full pass through the training dataset.

Overall, YOLO26-pose builds on earlier Ultralytics YOLO pose models with clearer improvements in non-human keypoint support and training convergence, while maintaining the same familiar workflow.

Link to this sectionComparing YOLO26-pose to Ultralytics YOLOv5#

The earliest version of Ultralytics YOLO models, Ultralytics YOLOv5, was built primarily for object detection. While YOLOv5 later expanded to support instance segmentation, it doesn't include a native, specialized pose estimation head within the official Ultralytics framework.

Teams that needed keypoint detection typically relied on separate implementations or custom modifications. Ultralytics YOLO26 includes pose estimation as a built-in task, with a dedicated architectural head designed specifically for predicting keypoints.

This means YOLO26-pose models can be trained, validated, and deployed within the same unified workflow as detection and segmentation. For projects focused on structured keypoint detection, YOLO26 provides native pose support and a task-specific architecture that YOLOv5 doesn't offer out of the box.

Link to this sectionKey differences: YOLO26-pose vs Ultralytics YOLOv8-pose#

Ultralytics YOLOv8 introduced native pose estimation within the unified Ultralytics framework, making it easy to train and deploy keypoint models using the same workflow as detection and segmentation. It relies on a traditional post-processing pipeline with non-maximum suppression (NMS) and uses earlier loss formulations for bounding box regression and training.

YOLO26 builds on this foundation with architectural and training updates that directly impact pose estimation. One major difference is the end-to-end design. YOLO26 removes the need for external NMS during inference, which simplifies deployment and improves latency consistency, especially on CPUs and edge devices.

Another key improvement is in training methodology. YOLO26 introduces the MuSGD optimizer along with updated loss strategies. For pose tasks, it integrates Residual Log-Likelihood Estimation, which improves how keypoint uncertainty is modeled. Together, these changes can lead to faster convergence and more stable keypoint predictions, particularly in complex or partially occluded scenes.

In short, YOLOv8-pose established a strong and versatile baseline. YOLO26-pose refines that baseline with improved training efficiency, better handling of occlusion, and greater flexibility for real-world, non-human pose applications.

Link to this sectionYOLO26-pose vs Ultralytics YOLO11-pose: What has improved?#

Ultralytics YOLO11 builds on Ultralytics YOLOv8 by refining the backbone and feature extraction layers. It reduced FLOPs, improved parameter efficiency, and delivered higher mAP while maintaining strong real-time performance. For pose tasks, this meant better keypoint accuracy with a lighter architecture.

YOLO26-pose continues that progression with a more fundamental architectural shift. Simply put, YOLO11 refined the efficiency and accuracy of YOLOv8, and YOLO26 builds on that foundation with architectural and training updates aimed at faster convergence, more stable inference, and improved pose accuracy in complex scenarios.

Link to this sectionWhy should you start using the YOLO26 model for pose estimation?#

As you explore the differences among Ultralytics YOLO models, you might be wondering whether to switch to YOLO26-pose.

The short answer is that it is an easy upgrade. If you are already using Ultralytics YOLOv8-pose or Ultralytics YOLO11-pose, switching to YOLO26-pose typically just means changing the model version, not rebuilding your pipeline.

You can benefit from better support for non-human keypoints, faster convergence during training, and improved handling of occluded points, all while staying in the same Ultralytics framework. For most new and existing pose projects, moving to YOLO26-pose is a straightforward way to get those improvements with minimal friction.

On top of this, YOLO26-pose is fully supported within the Ultralytics Python package, which is built on PyTorch and makes training, validation, and deployment simple. Models can be exported to formats such as ONNX, TensorRT, OpenVINO, CoreML, and TFLite, making it easier to deploy across GPUs, CPUs, and edge devices without changing your overall workflow.

Link to this sectionKey takeaways#

Ultralytics YOLO26-pose makes pose estimation more flexible and reliable, especially when working with non-human keypoints or complex scenes. It trains faster, handles occlusion better, and delivers more consistent results across different datasets. For teams already using Ultralytics YOLO pose models, YOLO26 offers clear improvements without changing existing workflows.

Want to know more about AI? Check out our community and GitHub repository. Explore our solution pages to learn about AI in robotics and computer vision in agriculture. Discover our licensing options and start building with computer vision today!