Explore YOLOv5 v6.1 by Ultralytics for cutting-edge enhancements in vision AI, featuring TensorRT, TensorFlow Edge TPU support, and more.

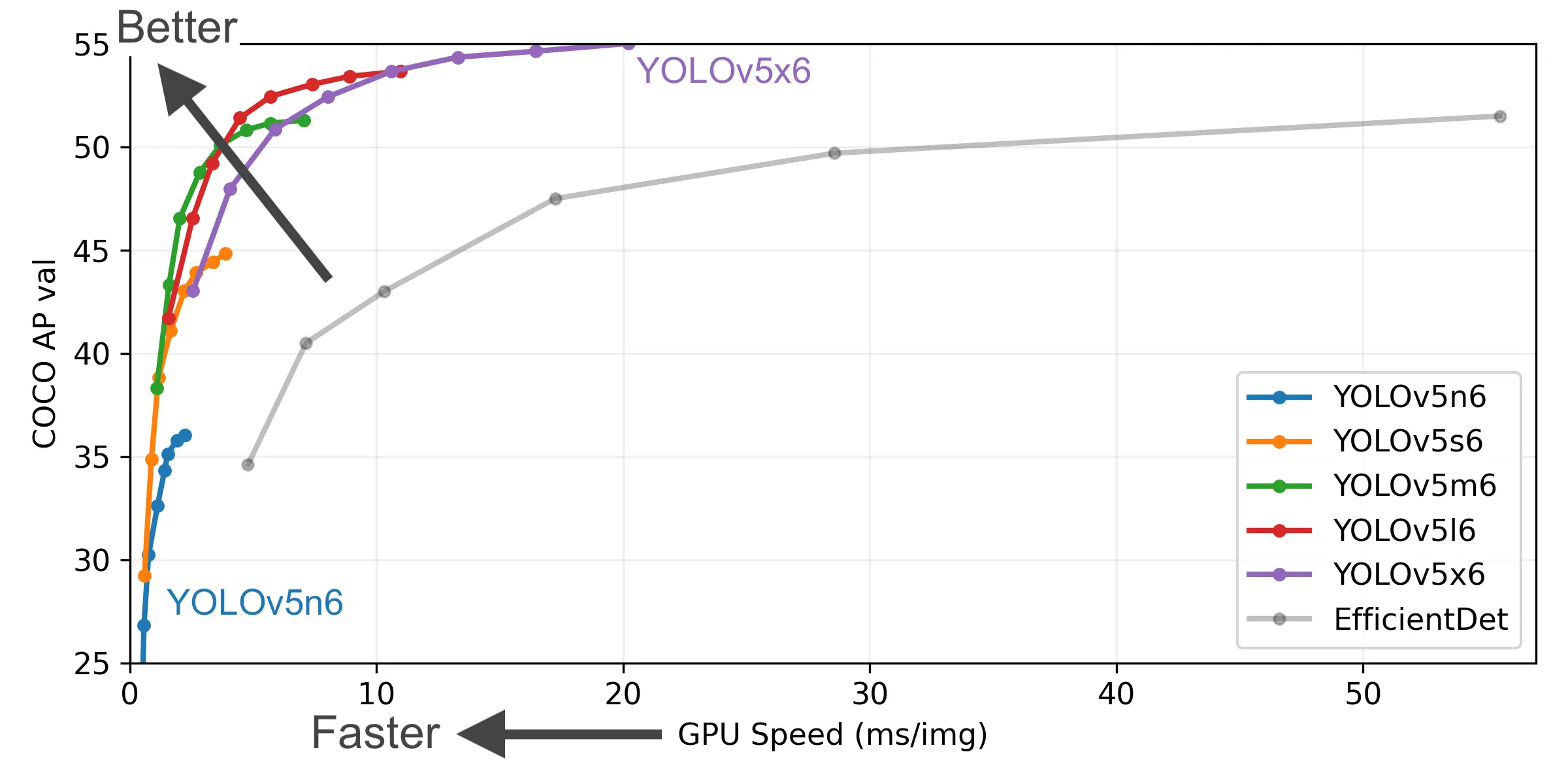

As pioneers in the realm of computer vision and machine learning, Ultralytics is excited to announce the latest developments in our flagship YOLO (You Only Look Once) technology. With the YOLOv5 v6.1 release, we've fine-tuned our architecture to enhance simplicity, speed, and strength, ensuring that our technology remains at the forefront of innovation. Our last release in October 2021 laid the groundwork for these advancements, and now we're proud to present these crucial updates that redefine YOLO's usability and performance.

Continuing our pursuit of excellence in Vision AI, YOLOv5 v6.1 brings groundbreaking enhancements, including TensorRT and TensorFlow Edge TPU support.

YOLOv5 now officially works with 11 formats. Support covers not just export but also inference with detect.py and PyTorch Hub, and validation to profile mAP and speed.
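To make the export, inference, and validation workflow concrete, here is a minimal sketch that only assembles the command lines you might run from a checkout of the YOLOv5 repository. It does not execute anything. The helper name `workflow_commands`, the `yolov5s.pt` weights file, and the small suffix table are illustrative assumptions rather than part of the release, and only three of the 11 formats are shown.

```python
# Assumed mapping from an export.py --include key to the suffix of the
# exported weights file. Illustrative only; check the repository for the
# authoritative list of formats and file names.
EXPORT_SUFFIX = {
    "torchscript": ".torchscript",
    "onnx": ".onnx",
    "engine": ".engine",  # TensorRT
}


def workflow_commands(weights="yolov5s.pt", fmt="onnx"):
    """Build (but do not run) export, detect, and val commands for one format."""
    stem = weights.rsplit(".", 1)[0]      # "yolov5s.pt" -> "yolov5s"
    exported = stem + EXPORT_SUFFIX[fmt]  # e.g. "yolov5s.onnx"
    return [
        ["python", "export.py", "--weights", weights, "--include", fmt],
        ["python", "detect.py", "--weights", exported],  # inference
        ["python", "val.py", "--weights", exported],     # mAP and speed
    ]


if __name__ == "__main__":
    for cmd in workflow_commands():
        print(" ".join(cmd))
```

The same exported file can, as far as we can tell from the repository, also be loaded through PyTorch Hub with `torch.hub.load('ultralytics/yolov5', 'custom', 'yolov5s.onnx')`. Treat the exact arguments as an assumption and check them against the repository before relying on them.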

At Ultralytics, we are driven not merely by the desire to lead but by the passion to participate and contribute to the community. The YOLOv5 family has been instrumental in our journey, supporting us through triumphs and challenges alike. This update is a collective triumph, representing the hard work of 271 PRs from 48 new contributors. We stand committed to our mission of democratizing AI, making it accessible and operational for everyone.

We are continually looking for talent to join our ranks and invite collaborations on our open-source projects. If you're interested in becoming a part of the most groundbreaking AI team, explore our careers page or consider contributing to YOLOv5.

This year, our Ultralytics/YOLOv5 repository has achieved a significant milestone by surpassing Joseph Redmon's pjreddie/darknet YOLOv3 in the total number of GitHub stars, now boasting over 22.4k stars. This is a testament to the trust and enthusiasm of the community, and it motivates us to keep pushing the boundaries of Vision AI. We are deeply honored to carry forward the You Only Look Once legacy.

Visit our YOLOv5 GitHub repository for comprehensive details about the new release and join the vibrant community of YOLO object detection enthusiasts.

But there's more! If you're new to Computer Vision or simply prefer a no-code experience, Ultralytics HUB is your gateway. Discover how to harness YOLO and Computer Vision technology with a few effortless clicks. Learn more by visiting Ultralytics HUB - Your Doorway to AI and embark on your journey in Computer Vision.
