Sliding Window Attention

Learn how sliding window attention optimizes transformer efficiency by reducing computational costs. Discover its role in NLP and vision with Ultralytics YOLO26.

Sliding Window Attention is an optimized variant of the standard attention mechanism used in modern transformer architectures to improve computational efficiency. In traditional self-attention, every token in a sequence attends to every other token, so memory and compute costs scale quadratically with the sequence length. Sliding window attention addresses this bottleneck by restricting each token's attention to a fixed-size local neighborhood, or "window," of surrounding tokens. For a fixed window size, the cost grows linearly with the sequence length instead of quadratically, making the technique a critical component for expanding the context window in large artificial intelligence (AI) models.
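The windowing idea described above can be sketched in a few lines of plain Python. This is a minimal, single-head illustration and not any library's implementation: the function name `sliding_window_attention`, the symmetric `radius` parameter, and the toy inputs are assumptions made for the example.

```python
import math


def sliding_window_attention(queries, keys, values, radius):
    """Single-head attention where token i only attends to tokens within `radius` positions.

    queries, keys, values: lists of equal length n, each item a list of floats.
    Returns (outputs, num_scores), where num_scores counts query-key dot products.
    """
    n = len(queries)
    scale = 1.0 / math.sqrt(len(queries[0]))
    outputs = []
    num_scores = 0
    for i in range(n):
        lo = max(0, i - radius)
        hi = min(n, i + radius + 1)
        # Scaled dot-product scores against the local window only.
        scores = [
            scale * sum(q * k for q, k in zip(queries[i], keys[j]))
            for j in range(lo, hi)
        ]
        num_scores += len(scores)
        # Numerically stable softmax over the window.
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        # Weighted average of the value vectors inside the window.
        out = [
            sum(w * values[j][d] for w, j in zip(weights, range(lo, hi))) / total
            for d in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs, num_scores


# Toy input (hypothetical): 8 tokens with 2-dimensional embeddings.
tokens = [[float(i), 1.0] for i in range(8)]
outputs, num_scores = sliding_window_attention(tokens, tokens, tokens, radius=1)
print(num_scores)        # 22 local scores
print(len(tokens) ** 2)  # 64 scores for full self-attention
```

With a fixed `radius`, each token computes at most `2 * radius + 1` scores, so the total work grows in proportion to the sequence length rather than its square.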

By stacking multiple neural network layers that use this technique, models can gradually build a global understanding of the input data, because the localized windows overlap and each additional layer passes information one window further along the sequence. The concept appears in Google DeepMind research and can be implemented in modern frameworks like PyTorch.
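How stacking layers widens the effective context can be illustrated with a small reachability calculation. The helper `receptive_field` below is hypothetical and only tracks which input positions could influence a given token; it assumes a symmetric window of `radius` tokens on each side and ignores everything else a real network does.

```python
def receptive_field(seq_len, radius, num_layers, position):
    """Return the sorted input positions that can influence `position` after stacking layers."""
    reachable = {position}
    for _ in range(num_layers):
        expanded = set()
        for p in reachable:
            # Each layer lets a token read from neighbors within `radius`.
            for j in range(max(0, p - radius), min(seq_len, p + radius + 1)):
                expanded.add(j)
        reachable = expanded
    return sorted(reachable)


for layers in (1, 2, 3):
    field = receptive_field(seq_len=32, radius=2, num_layers=layers, position=10)
    print(layers, field[0], field[-1], len(field))
# 1 8 12 5
# 2 6 14 9
# 3 4 16 13
```

Away from the sequence boundaries, the reach grows by `radius` tokens per side with every layer, so a deep enough stack can connect distant tokens even though each individual layer is strictly local.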

Real-World Applications

The ability to process vast sequences of data without exhausting computational memory unlocks advanced capabilities across various AI domains:

Differentiating Related Terms

To understand how network architectures optimize data processing, it is helpful to distinguish sliding window attention from similar mechanisms:

- Sliding Window Attention vs. Deformable Attention: While sliding window attention uses a strict, contiguous block of tokens based on sequence proximity, deformable attention allows the network to learn dynamic sampling points. Deformable attention focuses on arbitrary, sparse locations based on the actual visual content rather than a fixed grid.

- Sliding Window Attention vs. Sparse Attention: Sliding window attention is one specific form of sparse attention. Sparse attention is a broad term that includes random, strided, or global token patterns to reduce memory usage, whereas the sliding window approach strictly limits attention to neighboring spatial or temporal tokens.
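The relationship between these patterns can be made concrete by building boolean attention masks, where `mask[i][j]` is `True` when token `i` may attend to token `j`. The sketch below is illustrative only: the helper names and the simplified strided and global patterns are assumptions for the example, not a reproduction of any specific model. Deformable attention is omitted because its sampling locations are learned from content and cannot be written as a fixed mask.

```python
def sliding_window_mask(n, radius):
    """Token i attends only to tokens within `radius` positions of itself."""
    return [[abs(i - j) <= radius for j in range(n)] for i in range(n)]


def strided_mask(n, stride):
    """Token i attends to itself and to tokens a multiple of `stride` away."""
    return [[(i - j) % stride == 0 for j in range(n)] for i in range(n)]


def global_plus_window_mask(n, radius, global_tokens):
    """Sliding window plus designated tokens that see, and are seen by, every token."""
    mask = sliding_window_mask(n, radius)
    for g in global_tokens:
        for t in range(n):
            mask[g][t] = True
            mask[t][g] = True
    return mask


def density(mask):
    """Fraction of query-key pairs that are kept by the mask."""
    n = len(mask)
    return sum(sum(row) for row in mask) / (n * n)


n = 8
print(density(sliding_window_mask(n, radius=1)))                         # 0.34375
print(density(strided_mask(n, stride=4)))                                # 0.25
print(density(global_plus_window_mask(n, radius=1, global_tokens=[0])))  # 0.53125
```

All three masks are sparse in the sense that they keep fewer than the full `n * n` pairs, but only the sliding window mask is confined to a contiguous band around the diagonal.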

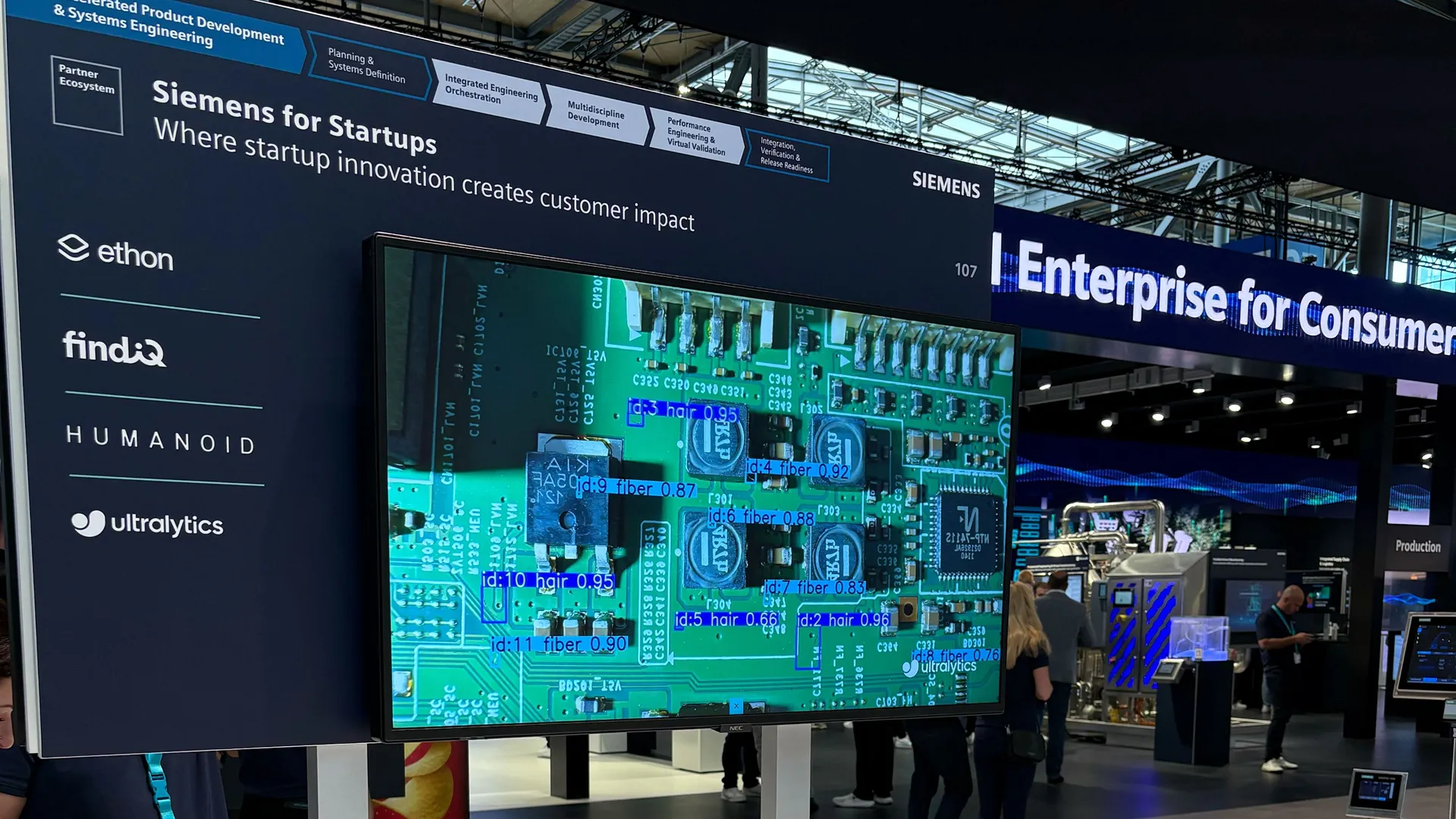

Implementing Efficient Architectures

For developers building high-speed object detection systems, leveraging heavily optimized architectures is essential. While raw attention mechanisms are powerful, end-to-end models like Ultralytics YOLO26 provide industry-leading performance by balancing advanced feature extraction with edge-device efficiency.

from ultralytics import YOLO

# Load the recommended YOLO26 model for high-resolution vision tasks
model = YOLO("yolo26x.pt")

# Run inference on a large image at a 1024-pixel input size and display the result
results = model.predict(source="large_aerial_map.jpg", imgsz=1024, show=True)

# Output the number of detected instances in the first (and only) image
print(f"Detected {len(results[0].boxes)} objects in the high-resolution input.")

Scaling these pipelines from local prototyping to enterprise production requires robust infrastructure. The Ultralytics Platform simplifies this by offering an intuitive interface for automated dataset annotation, cloud training, and real-time model monitoring. This allows teams to use highly efficient, large-context models across varied hardware environments.