Function Calling (Tool Use)

Explore how function calling and tool use empower AI to interact with APIs and databases. Learn to integrate Ultralytics YOLO26 into agentic workflows today.

Function calling, frequently referred to as tool use, is a powerful paradigm in modern artificial intelligence (AI) that allows models to extend their capabilities beyond static text or image generation. Instead of just answering a prompt based on internal training data, the model can output structured commands to trigger external programming functions, query databases, or interact with REST APIs. This approach effectively gives AI the ability to take tangible actions in digital environments.

When an AI system uses function calling, developers provide the model with a list of available tools, each described with a name, a description, and a JSON Schema for its parameters. If the user's prompt requires real-time data or a specific action, the model does not answer directly; instead it outputs a structured JSON payload that names the selected tool and supplies arguments matching that tool's required parameters. The model does not run the function itself: the application executes the call and passes the result back so the model can compose its final response. Frameworks like OpenAI's function calling API and Anthropic's tool use framework have popularized this technique, turning conversational agents into capable problem solvers.
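The exchange described above can be sketched with the Python standard library alone. The tool name `get_weather`, its parameters, the returned temperature, and the `model_output` string are all hypothetical stand-ins; real providers wrap the same idea in their own request and response formats. The hand-rolled check covers only `required`, `type`, and `enum`, not the full JSON Schema specification.

```python
import json

# Hypothetical tool, described with a JSON Schema for its parameters.
GET_WEATHER_TOOL = {
    "name": "get_weather",
    "description": "Return the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {
            "city": {"type": "string"},
            "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
        },
        "required": ["city"],
    },
}

# Maps JSON Schema type names to Python types (only the one used here).
_JSON_TYPES = {"string": str}


def validate_arguments(schema: dict, arguments: dict) -> None:
    """Check required keys, basic types, and enums; raise ValueError on a mismatch."""
    for key in schema.get("required", []):
        if key not in arguments:
            raise ValueError(f"missing required argument: {key}")
    for key, value in arguments.items():
        spec = schema["properties"].get(key)
        if spec is None:
            raise ValueError(f"unexpected argument: {key}")
        if not isinstance(value, _JSON_TYPES[spec["type"]]):
            raise ValueError(f"wrong type for argument: {key}")
        if "enum" in spec and value not in spec["enum"]:
            raise ValueError(f"invalid value for argument: {key}")


def get_weather(city: str, unit: str = "celsius") -> dict:
    """Stand-in implementation; a real tool would query a weather service."""
    return {"city": city, "unit": unit, "temperature": 21}


# Registry the application uses to turn a tool name into a Python callable.
TOOLS = {"get_weather": (GET_WEATHER_TOOL, get_weather)}


def execute_tool_call(model_output: str) -> dict:
    """Parse the model's JSON payload, validate it, and run the selected tool."""
    call = json.loads(model_output)
    if call["name"] not in TOOLS:
        raise ValueError(f"unknown tool: {call['name']}")
    tool, func = TOOLS[call["name"]]
    validate_arguments(tool["parameters"], call["arguments"])
    return func(**call["arguments"])


# Simulated structured payload, as a model might emit it instead of prose.
model_output = '{"name": "get_weather", "arguments": {"city": "Madrid", "unit": "celsius"}}'
result = execute_tool_call(model_output)
print(result)
```

Validating the arguments before dispatch matters because the payload is generated text: the model can omit a required field or invent a value, and the application is the only place where that can be caught.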

Real-World Applications

Integrating tool use into workflows transforms how software operates. These capabilities, which are evaluated by benchmarks like the Berkeley Function Calling Leaderboard, are driving a shift toward highly autonomous systems.

- Automated Retail and Customer Service: For AI in retail, a virtual assistant can use function calling to look up live inventory and order data. If a customer asks, "Where is my order?", the model generates a function call to a database API, the application retrieves the tracking status, and the model returns a natural language response.

-

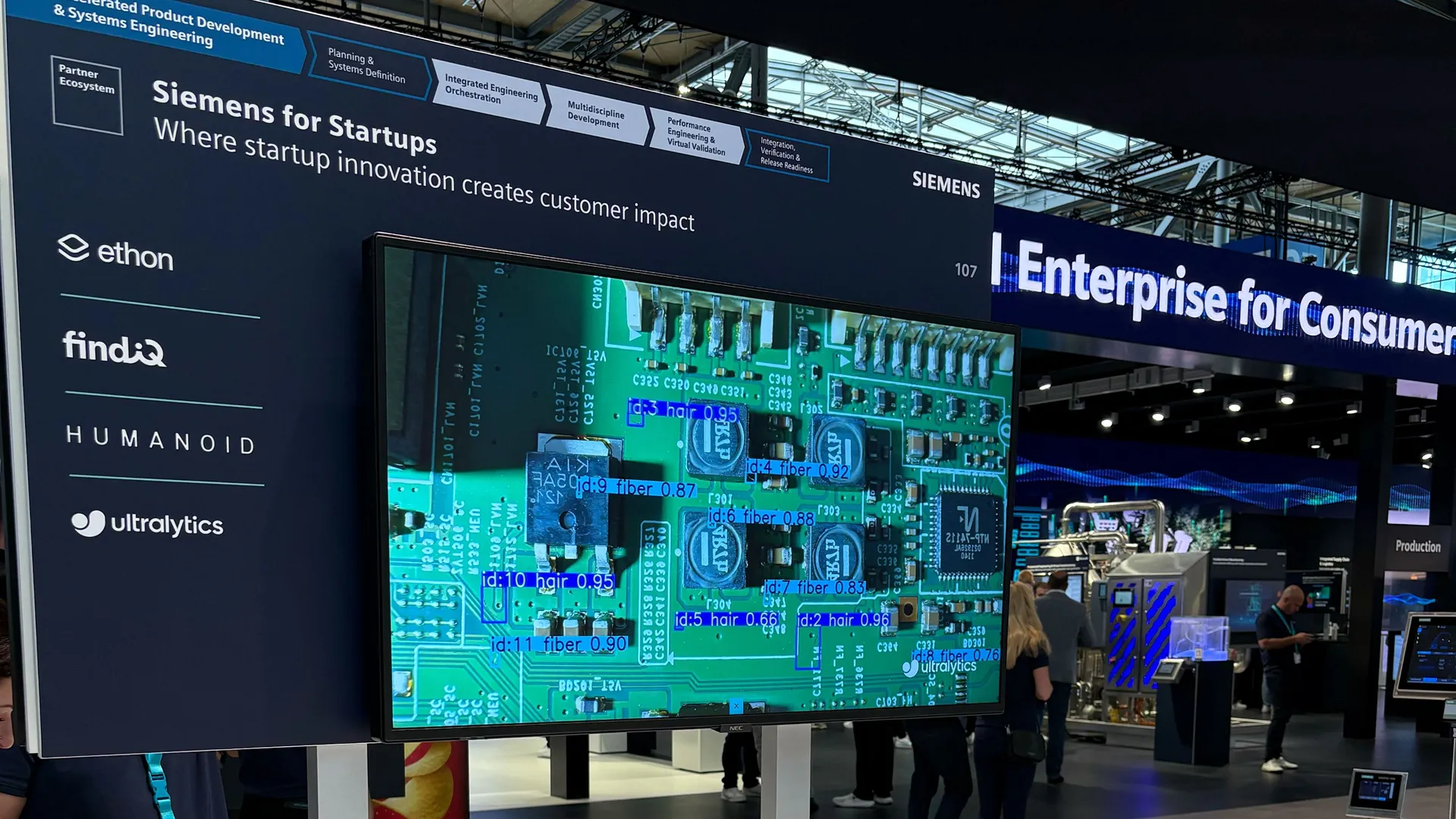

Vision-Assisted Data Extraction: A

vision-language model (VLM) can use

Ultralytics YOLO object detectors as tools. If asked to verify safety

compliance in a factory image, the main conversational AI can call a script running an

Ultralytics YOLO26 model to detect hardhats, seamlessly

returning the object detection results to the

user's dialog.

Integrating Computer Vision as a Tool

You can expose a computer vision model as a tool for an overarching AI agent. In this architecture, you define a Python function that performs inference, which a reasoning model can trigger when visual data is needed.

```python
from ultralytics import YOLO

# Load the YOLO26 model once so repeated tool calls reuse the same weights
model = YOLO("yolo26n.pt")


def count_objects_in_scene(image_url: str) -> str:
    """Tool function for an AI agent: count the objects detected in an image."""
    # Perform inference to analyze the visual data
    results = model(image_url)
    object_count = len(results[0].boxes)

    # Return a short text summary as context for the calling AI system
    return f"Vision Analysis: Detected {object_count} objects in the scene."


# Simulated function call executed by an AI system
print(count_objects_in_scene("https://ultralytics.com/images/bus.jpg"))
```
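For a reasoning model to trigger this function, the application also needs a machine-readable description of it and a dispatcher that maps the model's tool call back to Python. The following is a sketch under stated assumptions: the specification is laid out in the style used by OpenAI's Chat Completions API (other providers use different field names), `VISION_TOOL_SPEC`, `TOOL_REGISTRY`, and `handle_tool_call` are hypothetical names, and a stand-in `count_objects_in_scene` with a fixed count replaces the YOLO-backed function so the example runs without model weights.

```python
import json
from typing import Callable


def count_objects_in_scene(image_url: str) -> str:
    """Stand-in for the YOLO-backed function; returns a fixed count for illustration."""
    fake_count = 5  # placeholder value, not a real detection result
    return f"Vision Analysis: Detected {fake_count} objects in the scene."


# Tool description sent to the model alongside the user's prompt (assumed layout).
VISION_TOOL_SPEC = {
    "type": "function",
    "function": {
        "name": "count_objects_in_scene",
        "description": "Count the objects detected in an image.",
        "parameters": {
            "type": "object",
            "properties": {
                "image_url": {"type": "string", "description": "URL or path of the image."}
            },
            "required": ["image_url"],
        },
    },
}

# Maps tool names the model may emit to the Python callables that implement them.
TOOL_REGISTRY: dict[str, Callable[..., str]] = {
    "count_objects_in_scene": count_objects_in_scene,
}


def handle_tool_call(name: str, arguments_json: str) -> str:
    """Run the requested tool and return its output, or an error message, as text."""
    func = TOOL_REGISTRY.get(name)
    if func is None:
        return f"Error: unknown tool '{name}'."
    try:
        arguments = json.loads(arguments_json)
        return func(**arguments)
    except (json.JSONDecodeError, TypeError) as exc:
        return f"Error: could not run '{name}': {exc}"


# Simulated tool call: the name and JSON arguments a model might produce.
reply = handle_tool_call(
    "count_objects_in_scene",
    '{"image_url": "https://ultralytics.com/images/bus.jpg"}',
)
print(reply)
```

Returning failures as text rather than raising is a deliberate choice in this sketch: the string goes back to the model, which can then correct its arguments or explain the problem to the user.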

Differentiating Related Terms

To fully grasp modern AI architectures, it is helpful to understand how function calling relates to and differs from similar concepts:

- Model Context Protocol (MCP): While function calling relies on specific tool definitions passed in the model prompt, MCP is an open, standardized protocol for connecting AI models to data sources and tools. MCP defines how those connections are exposed, whereas function calling is the localized mechanism models use to actually invoke them.

- Retrieval Augmented Generation (RAG): RAG is a methodology designed specifically to fetch relevant text or documents to augment an LLM's prompt. Function calling is a broader mechanism: an AI can use a tool to perform RAG, but it can also use tools to write files to disk or send an email. You can find examples of tool-based RAG in the PyTorch Documentation and the Google Gemini multimodal guides.

- AI Agent: An AI agent is the complete autonomous system that perceives its environment and takes actions to achieve a goal. Function calling is the primary mechanism that lets an agent execute those actions. When deploying large-scale agentic systems, teams often use the Ultralytics Platform to train and serve the underlying visual models that these agents call upon to see the world. Organizations transitioning from static models to agentic workflows often rely on deep learning libraries like TensorFlow to optimize the model-serving endpoints that these functions communicate with.
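The relationship between these terms can be illustrated with a toy agent loop in which retrieval (the RAG step) is just one tool among several. Everything here is a hypothetical stand-in: `scripted_model` replaces a real LLM with fixed rules, `DOCUMENTS` is illustrative data, and `send_email` only records messages in a list rather than sending anything.

```python
from typing import Callable, Optional

# Tiny in-memory corpus standing in for a document store (illustrative data).
DOCUMENTS = {
    "returns": "Items can be returned within 30 days of delivery.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}

OUTBOX: list[str] = []  # records "sent" emails instead of sending anything


def retrieve_documents(query: str) -> str:
    """Retrieval tool (the RAG step): return documents whose topic appears in the query."""
    hits = [text for topic, text in DOCUMENTS.items() if topic in query.lower()]
    return " ".join(hits) or "No matching documents."


def send_email(to: str, body: str) -> str:
    """Action tool: a side effect that plain retrieval cannot perform."""
    OUTBOX.append(f"To: {to}\n{body}")
    return f"Email queued for {to}."


TOOLS: dict[str, Callable[..., str]] = {
    "retrieve_documents": retrieve_documents,
    "send_email": send_email,
}


def scripted_model(history: list[dict]) -> Optional[dict]:
    """Stand-in for an LLM: pick the next tool call from the history, or None when done."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if len(tool_results) == 0:
        return {"name": "retrieve_documents", "arguments": {"query": history[0]["content"]}}
    if len(tool_results) == 1:
        return {
            "name": "send_email",
            "arguments": {"to": "customer@example.com", "body": tool_results[0]["content"]},
        }
    return None


def run_agent(goal: str, max_steps: int = 5) -> list[dict]:
    """Agent loop: ask the model for a tool call, execute it, feed the result back."""
    history = [{"role": "user", "content": goal}]
    for _ in range(max_steps):
        call = scripted_model(history)
        if call is None:
            break
        result = TOOLS[call["name"]](**call["arguments"])
        history.append({"role": "tool", "name": call["name"], "content": result})
    return history


history = run_agent("Email the customer our returns policy.")
for message in history:
    print(message["role"], "->", message["content"])
```

In this sketch the loop in `run_agent` plays the role of the agent, each entry in `TOOLS` is reached through a function call, and `retrieve_documents` is the only part that corresponds to RAG. The `max_steps` cap is a simple guard against a model that never stops requesting tools.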