Token Merging (ToMe)

Learn how Token Merging (ToMe) optimizes Transformer and ViT models. Discover how to reduce FLOPs, accelerate real-time inference, and boost Generative AI speed.

Token Merging (ToMe) is a technique for improving the efficiency of Transformer architectures by reducing the number of tokens processed during the forward pass. Originally developed to accelerate Vision Transformer (ViT) models, ToMe identifies redundant tokens inside the network and combines them, and it can be applied to an already trained model without additional training. Because the cost of the self-attention mechanism scales quadratically with the number of tokens, while the cost of the remaining layers scales linearly, merging similar tokens reduces the total floating-point operations (FLOPs) and enables faster real-time inference.
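To make the quadratic scaling concrete, here is a small back-of-the-envelope sketch. The helper name `attention_matmul_flops` is hypothetical, and the numbers (196 patch tokens and a 768-dimensional embedding, as in a ViT-B/16 at 224x224 resolution) are illustrative assumptions. It counts only the two token-by-token matrix products in one attention layer and ignores projections, softmax, and the MLP.

```python
def attention_matmul_flops(num_tokens: int, dim: int) -> int:
    """Approximate FLOPs for the two N x N matmuls in one self-attention layer.

    Counts Q @ K^T and attention @ V, each roughly 2 * N^2 * d FLOPs
    (one multiply and one add per term). Linear projections, softmax,
    and the MLP are deliberately ignored in this estimate.
    """
    return 2 * (2 * num_tokens * num_tokens * dim)


# Illustrative assumption: 196 patch tokens, 768-dimensional embedding
full = attention_matmul_flops(196, 768)
# Same layer after half of the tokens have been merged away
merged = attention_matmul_flops(98, 768)

ratio = merged / full
print(f"Attention matmul cost after merging: {ratio:.2f}x of the original")  # 0.25x
```

Halving the token count cuts this quadratic term to a quarter. The linear layers only shrink in proportion to the token count, so the end-to-end saving for a whole model usually lies between those two figures.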

Understanding the Token Merging Process

ToMe is fundamentally different from tokenization, the initial preprocessing step that breaks an image or text into individual tokens. Tokenization creates the discrete elements; Token Merging acts as a dynamic downsampling mechanism during the model's forward pass.

The algorithm typically uses bipartite soft matching: tokens are split into two sets, each token in the first set is paired with its most similar token in the second, and only the most similar pairs are merged. Similarity is often measured as the cosine similarity between the tokens' keys in the attention layers. Tokens that carry highly similar visual or semantic information are fused, often by averaging their features. This preserves essential spatial or contextual information while removing redundant computation, so vision models built in frameworks like PyTorch run faster.
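The matching and averaging described above can be sketched in a few lines of PyTorch. This is a simplified illustration, not a reference implementation: the function name `bipartite_soft_merge` and the parameter `r` (tokens removed per call) are assumptions made for this example. It also uses a plain average within a single step, and it does not protect a class token or track how many original patches each token already represents across layers.

```python
import torch
import torch.nn.functional as F


def bipartite_soft_merge(x: torch.Tensor, keys: torch.Tensor, r: int) -> torch.Tensor:
    """Merge the r most similar token pairs. x: (B, N, C), keys: (B, N, D)."""
    batch, num_tokens, channels = x.shape
    if num_tokens < 2 or r <= 0:
        return x

    # Split tokens into two alternating sets, A (even positions) and B (odd positions)
    a_idx = torch.arange(0, num_tokens, 2, device=x.device)
    b_idx = torch.arange(1, num_tokens, 2, device=x.device)
    r = min(r, a_idx.numel())

    # Cosine similarity between every key in A and every key in B
    k = F.normalize(keys, p=2, dim=-1)
    scores = k[:, a_idx] @ k[:, b_idx].transpose(-1, -2)  # (B, Na, Nb)

    # Each A token proposes its best match in B; keep the r strongest proposals
    best_score, best_b = scores.max(dim=-1)  # both (B, Na)
    order = best_score.argsort(dim=-1, descending=True)
    merge_a, keep_a = order[:, :r], order[:, r:]

    xa, xb = x[:, a_idx], x[:, b_idx]
    kept = xa.gather(1, keep_a.unsqueeze(-1).expand(-1, -1, channels))
    src = xa.gather(1, merge_a.unsqueeze(-1).expand(-1, -1, channels))
    dst = best_b.gather(1, merge_a)  # (B, r) target positions in B

    # Average each B token with the A tokens merged into it
    sums = xb.clone()
    sums.scatter_add_(1, dst.unsqueeze(-1).expand(-1, -1, channels), src)
    counts = torch.ones(batch, xb.shape[1], 1, dtype=x.dtype, device=x.device)
    counts.scatter_add_(1, dst.unsqueeze(-1), torch.ones_like(dst, dtype=x.dtype).unsqueeze(-1))

    # Unmerged A tokens followed by the (possibly merged) B tokens
    return torch.cat([kept, sums / counts], dim=1)


x = torch.randn(1, 8, 16)
merged = bipartite_soft_merge(x, keys=x, r=3)
print(merged.shape)  # torch.Size([1, 5, 16])
```

Here the token features double as keys for simplicity; inside an attention block one would more likely pass that layer's key tensor. Note that the output order differs from the input order, which matters for models that need to restore spatial layout afterwards.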

Real-World Applications of Token Merging

Token Merging has become a critical optimization strategy for deploying heavy attention-based architectures in

computationally constrained environments.

- Generative AI and Image Synthesis: In popular text-to-image diffusion models, ToMe is frequently used to accelerate image generation. Merging redundant tokens, such as those in background or low-detail regions, means each denoising step processes fewer tokens, which saves GPU compute and memory and reduces latency for end users of generative models. You can learn more about diffusion processes in foundational research on arXiv.

-

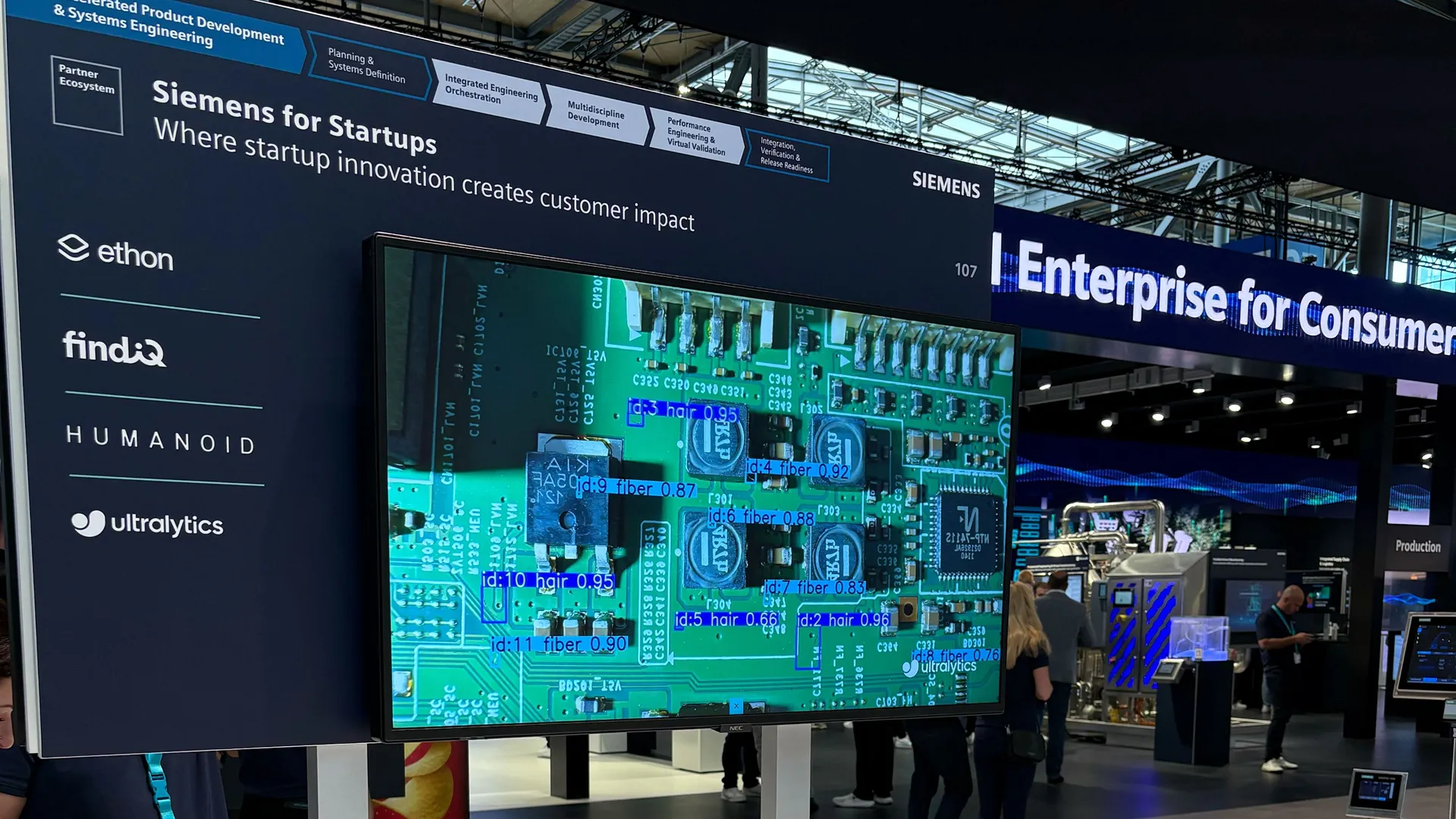

Edge AI Deployments: Deploying massive models like the

Segment Anything Model (SAM) to mobile devices is notoriously

difficult due to memory constraints. ToMe helps shrink the memory footprint dynamically, allowing complex

image segmentation tasks to run on edge

hardware. For scenarios where pure speed is critical, engineers often pivot to natively optimized, attention-free

architectures like Ultralytics YOLO26 for faster,

end-to-end edge inference.

Python Example: Token Similarity Calculation

While integrating ToMe into a full architecture requires modifying the attention blocks, the core concept relies on finding similar tokens. The following PyTorch snippet computes the cosine similarity between a set of tokens to identify which ones are candidates for merging.

```python
import torch
import torch.nn.functional as F

# Simulate a batch of 4 image patches (tokens) with 64-dimensional features
tokens = torch.randn(1, 4, 64)

# Normalize the tokens to easily compute cosine similarity via dot product
normalized_tokens = F.normalize(tokens, p=2, dim=-1)

# Compute the similarity matrix between all tokens (1 x 4 x 4)
similarity_matrix = torch.matmul(normalized_tokens, normalized_tokens.transpose(1, 2))

# Tokens with high similarity scores (close to 1.0) off the diagonal
# are prime candidates for Token Merging.
print("Similarity Matrix:", similarity_matrix)
```
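As a possible next step after the similarity example above, the sketch below picks the single most similar pair and averages it. It is only an illustration of how candidates might be selected. The near-duplicate token is planted on purpose (an assumption made so the result is predictable), and the diagonal is masked because every token is trivially identical to itself.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

# Four 64-dimensional tokens; token 3 is made a near-duplicate of token 1 on purpose
tokens = torch.randn(1, 4, 64)
tokens[0, 3] = tokens[0, 1] + 0.01 * torch.randn(64)

normalized = F.normalize(tokens, p=2, dim=-1)
similarity = normalized @ normalized.transpose(1, 2)  # (1, 4, 4)

# Mask self-similarity so the diagonal cannot win the argmax
off_diag = similarity.clone()
off_diag.diagonal(dim1=1, dim2=2).fill_(float("-inf"))

# Locate the most similar pair in the first (and only) batch element
flat_index = off_diag[0].argmax().item()
i, j = divmod(flat_index, off_diag.shape[-1])

# Fuse the pair by simple averaging
merged_token = (tokens[0, i] + tokens[0, j]) / 2
print(f"Most similar pair: ({i}, {j}); merged token shape: {tuple(merged_token.shape)}")
```

Greedy selection of one pair at a time like this is easy to follow but slow for many tokens, which is one reason a parallel bipartite matching is typically preferred in practice.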

Modern machine learning pipelines require a careful balance of accuracy and speed. Whether you are using Token Merging to optimize a custom ViT or relying on the efficiency of YOLO26, the Ultralytics Platform simplifies managing these data workflows. The Platform provides an ecosystem for automated data annotation, cloud training, and model deployment across diverse edge computing hardware environments. Organizations scaling their computer vision initiatives rely on these tools to push state-of-the-art models into production reliably and efficiently.