Frame Interpolation

Explore how frame interpolation uses AI to create smooth, high-FPS video. Learn to enhance object tracking with Ultralytics YOLO26 and the Ultralytics Platform.

Frame interpolation is a computer vision and video processing technique that synthesizes new, intermediate frames between existing ones to increase a video's frame rate and create smoother motion. Earlier methods relied on basic image blending. Modern frame interpolation instead uses deep learning (DL) models to analyze the motion and content of adjacent frames, predicting complex pixel movements to generate high-quality, continuous images. This AI-driven approach is widely used to convert standard footage into high-refresh-rate media, synthesize slow-motion effects, and smooth fast-paced sequences in multimedia and scientific domains.
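To make the limitation of basic image blending concrete, the following is a minimal sketch in plain Python. The function name `blend_frames` and the tiny one-row "frames" are hypothetical and chosen only for illustration. It averages two frames in which a bright pixel moves two columns to the right.

```python
def blend_frames(frame_a, frame_b, t=0.5):
    """Linearly blend two equally sized grayscale frames (lists of rows).

    t = 0 returns frame_a, t = 1 returns frame_b, t = 0.5 is the plain average.
    """
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be in [0, 1]")
    return [
        [round((1.0 - t) * a + t * b) for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(frame_a, frame_b)
    ]


# Hypothetical toy data: a bright pixel moves from column 1 to column 3.
frame_a = [[0, 200, 0, 0, 0]]
frame_b = [[0, 0, 0, 200, 0]]

midpoint = blend_frames(frame_a, frame_b)
print(midpoint)  # [[0, 100, 0, 100, 0]]
```

The blended frame contains two half-bright copies of the pixel instead of a single pixel at column 2. This ghosting is the artifact that motion-aware methods aim to avoid.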

How AI-Powered Frame Interpolation Works

Modern interpolation frameworks depart from simple frame averaging. Instead, they rely on complex neural networks (NNs) and sophisticated motion estimation strategies to fill in the gaps between sequential inputs:

- Optical Flow-Based Interpolation: This method calculates the apparent motion of pixels between frames. Models use this estimated flow to warp the input images and blend them. While fast, it can struggle with heavy occlusions or rapid movements.

- Convolutional and Transformer Architectures: Deep Convolutional Neural Networks (CNNs) and newer Transformer models learn rich spatial and temporal relationships. They manage occlusions and fast motion by predicting contextual features across a broader receptive field.

- Generative Approaches: Recent breakthroughs employ diffusion models to generate intermediate frames. These models allow for perceptually realistic synthesis even when input frames exhibit substantial motion gaps, adapting techniques like Event-based Video Frame Interpolation (EVFI) to reconstruct high-speed movements using sparse sensor data.
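The warping step in the optical-flow approach can be sketched with a toy example. The code below is an illustration under strong assumptions, not how any particular model works: the flow field is given by hand rather than estimated, motion is horizontal only, and `warp_forward` is a hypothetical helper name.

```python
def warp_forward(frame, flow_x, t=0.5):
    """Forward-warp a grayscale frame along a horizontal flow field.

    frame  : list of rows of pixel intensities
    flow_x : per-pixel horizontal displacement (pixels) toward the next frame
    t      : time of the synthesized frame (0 = this frame, 1 = next frame)
    """
    height, width = len(frame), len(frame[0])
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Move each pixel a fraction t of the way along its flow vector.
            target = x + round(t * flow_x[y][x])
            if 0 <= target < width:
                # When two pixels land on the same spot, keep the brighter one.
                out[y][target] = max(out[y][target], frame[y][x])
    return out


# Hypothetical toy data: the bright pixel at column 1 moves 2 columns right.
frame_a = [[0, 200, 0, 0, 0]]
flow_x = [[0, 2, 0, 0, 0]]  # assumed known; real systems estimate this

mid = warp_forward(frame_a, flow_x)
print(mid)  # [[0, 0, 200, 0, 0]]
```

The synthesized frame places a single bright pixel halfway along its path. Practical systems typically estimate flow in both directions, warp both input frames, and blend the results to fill holes and handle occlusions, which this sketch does not attempt.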

Distinguishing Related Concepts

To deploy video enhancement pipelines effectively, it is important to differentiate frame interpolation from related artificial intelligence (AI) techniques:

- Frame Interpolation vs. Optical Flow: Optical flow is a low-level motion estimate that describes the direction and speed of pixel movement between frames. Frame interpolation is a higher-level task that often uses optical flow as an underlying tool to warp pixels and generate entirely new image frames.

- Frame Interpolation vs. Super-Resolution: Interpolation increases the temporal resolution by adding more frames per second (e.g., temporal up-sampling from 30 FPS to 60 FPS). Conversely, super-resolution increases the spatial resolution by upscaling the pixel dimensions of individual frames (e.g., 1080p to 4K).
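The arithmetic behind temporal up-sampling can be sketched as follows. The helper `interpolated_timestamps` is a hypothetical name, and the sketch assumes a constant source frame rate and an integer up-sampling factor.

```python
def interpolated_timestamps(n_frames, fps, factor):
    """Return frame timestamps (seconds) after temporal up-sampling.

    Inserting (factor - 1) new frames into each of the (n_frames - 1) gaps
    yields (n_frames - 1) * factor + 1 frames at an effective fps * factor.
    """
    if n_frames < 1 or factor < 1:
        raise ValueError("n_frames and factor must be at least 1")
    total = (n_frames - 1) * factor + 1
    return [i / (fps * factor) for i in range(total)]


# 4 source frames at 30 FPS, up-sampled 2x to 60 FPS.
stamps = interpolated_timestamps(n_frames=4, fps=30, factor=2)
print(len(stamps))  # 7
```

The clip duration and the pixel dimensions of each frame are unchanged. Only the number of frames per second grows, which is the distinction from super-resolution drawn above.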

Key Real-World Applications

Frame interpolation solves critical challenges across multiple industries by bridging gaps in visual data:

- Media and Sports Broadcasting: Creators use tools like Google's FILM (Frame Interpolation for Large Motion) to generate ultra-smooth slow-motion sequences from standard cameras. This enhances sports analysis and cinematic effects without the need for expensive high-speed hardware.

- Biological and Medical Imaging: In time-lapse microscopy, generative frame interpolation enhances the tracking of biological objects, such as dividing cells or moving bacteria. By synthesizing intermediate states, researchers can reduce the frequency of physical imaging, which limits phototoxicity and preserves delicate specimens.

Improving AI Workflows with Interpolated Video

In machine learning, utilizing high-frame-rate video dramatically improves the accuracy of downstream

object tracking by providing smoother temporal

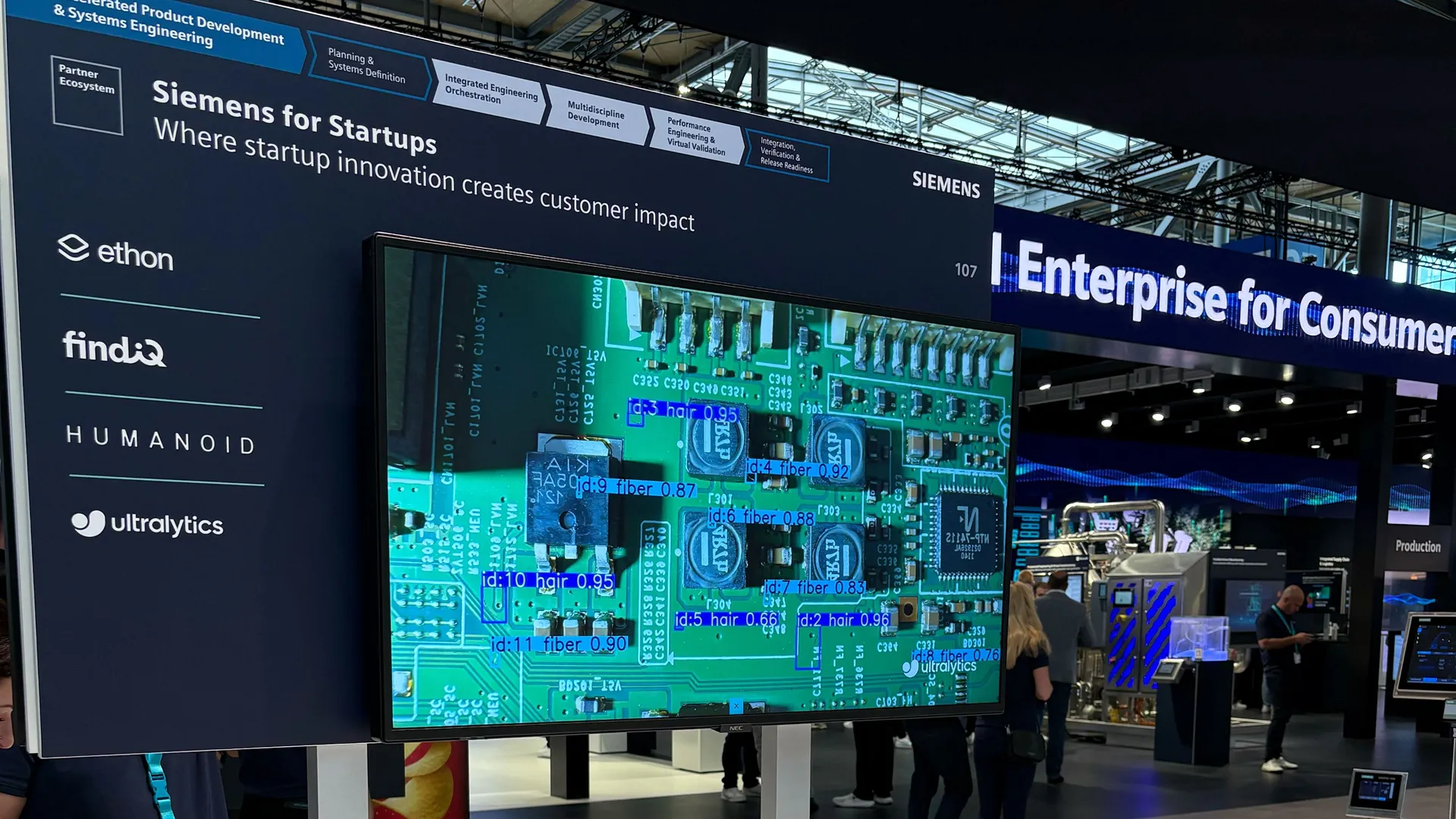

transitions and reducing bounding box jumps. Once a video is smoothed via interpolation, models like

Ultralytics YOLO26 can easily track objects across the

synthesized frames.

The following Python snippet demonstrates how to track objects in an interpolated, high-FPS video using the ultralytics package:

```python
from ultralytics import YOLO

# Load the latest state-of-the-art YOLO26 model
model = YOLO("yolo26n.pt")

# Run persistent object tracking on the temporally up-sampled video
# The tracker uses the smooth motion to preserve object IDs more accurately
results = model.track(source="interpolated_high_fps_video.mp4", show=True, tracker="botsort.yaml")
```
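One way to see why smaller per-frame motion can help a tracker is to compare the overlap between an object's boxes in consecutive frames. The sketch below uses hypothetical numbers (a 50x50 box moving 40 pixels per source frame) and assumes the interpolated frame places the object exactly halfway. Box overlap is only one of several cues a tracker may use for association.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    return inter / (area_a + area_b - inter)


def shifted(box, dx):
    """Return the box moved dx pixels to the right."""
    return (box[0] + dx, box[1], box[2] + dx, box[3])


box = (100, 100, 150, 150)  # hypothetical 50x50 detection

iou_30fps = iou(box, shifted(box, 40))  # 40 px of motion per frame
iou_60fps = iou(box, shifted(box, 20))  # 20 px per frame after 2x interpolation

print(round(iou_30fps, 3), round(iou_60fps, 3))  # 0.111 0.429
```

In this toy case, halving the per-frame displacement raises the overlap between consecutive boxes from about 0.11 to about 0.43. Whether this translates into fewer identity switches in practice depends on the quality of the interpolated frames and on the tracker configuration.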

For large-scale video processing, teams can use the Ultralytics Platform to automate data annotation on interpolated datasets, enabling seamless cloud training and robust model deployment for complex video understanding pipelines.